By Vlad Shvets

Stack Overflow Is Almost Invisible in AI Search

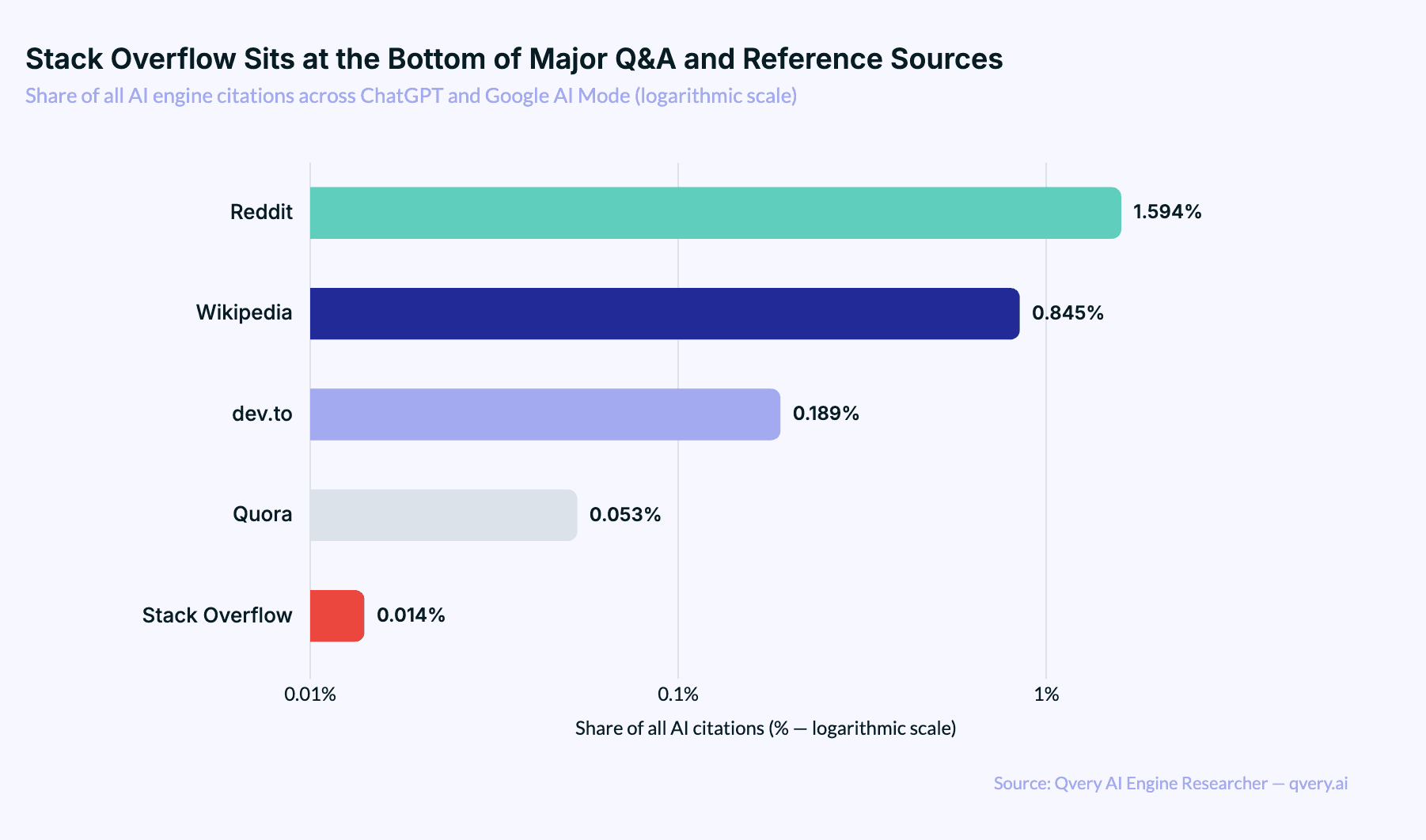

Stack Overflow accounts for just 0.014% of all AI engine citations. dev.to gets cited 14x more often despite hosting a fraction of the content. Reddit gets 117x more. Here's what the data shows about why AI engines skip the world's biggest developer Q&A site.

Most developers I know assume Stack Overflow is the bedrock of AI search visibility for technical content. It's the world's largest developer Q&A site. It has 24 million answered questions.

If AI engines were going to cite anyone for code questions, surely Stack Overflow would be the obvious first stop.

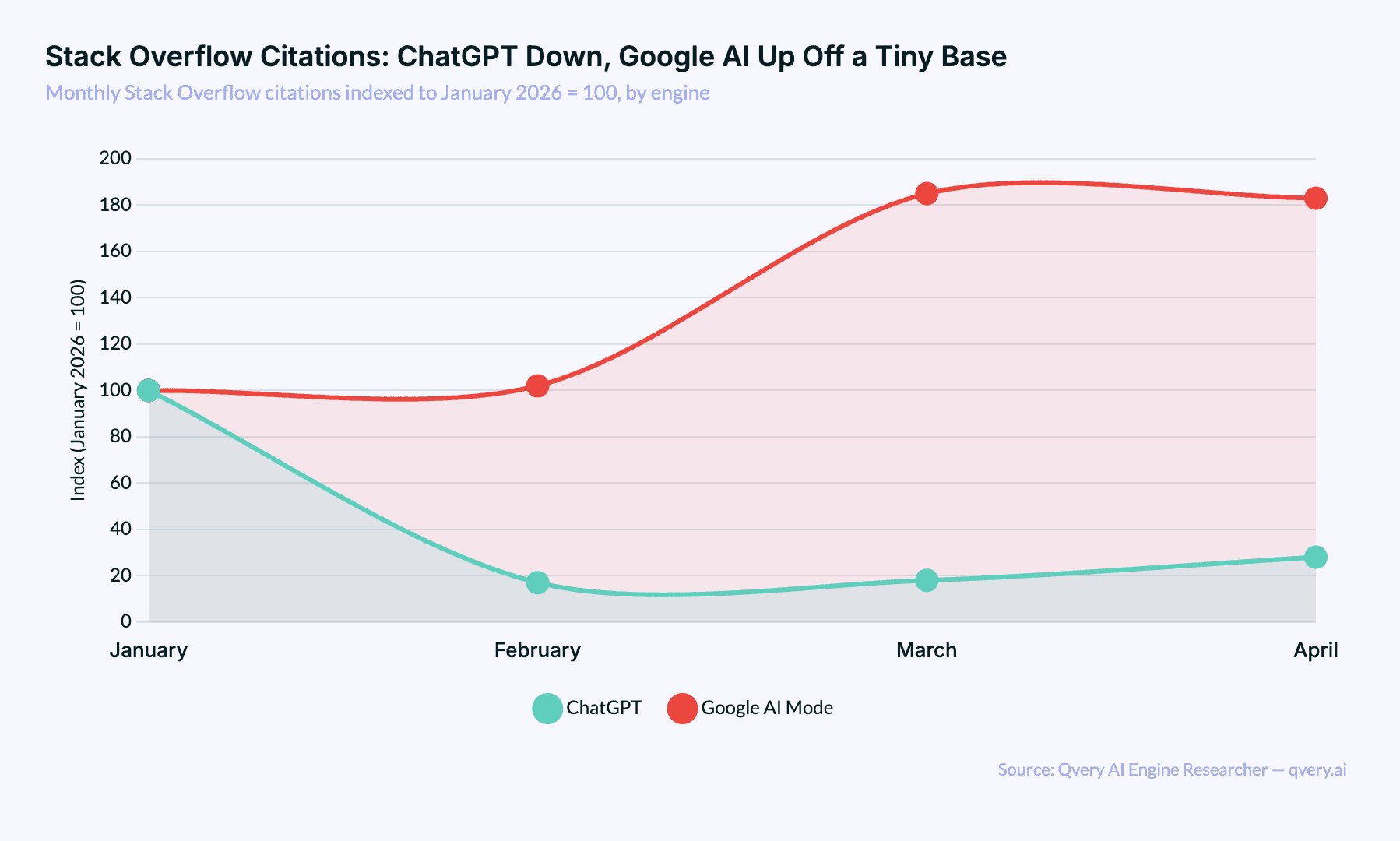

It isn't. We've been tracking AI engine citations across ChatGPT and Google AI Mode through Qvery's AI Engine Researcher, and Stack Overflow barely shows up. It accounts for 0.014% of all citations. That's roughly one citation in every seven thousand. It ranks well outside the top fifty cited domains, behind sites that nobody would describe as a primary developer resource.

The reason isn't that Stack Overflow lost its quality. The reason is that AI engines have already read it.

Every answer, every accepted solution, every code snippet that's been on the site since 2020 is sitting inside the foundation models. There's no need to cite a source the model has already absorbed. Stack Overflow's invisibility in AI search is a side effect of being too useful for too long.

A success story, technically. Just not the success Stack Overflow would have picked.

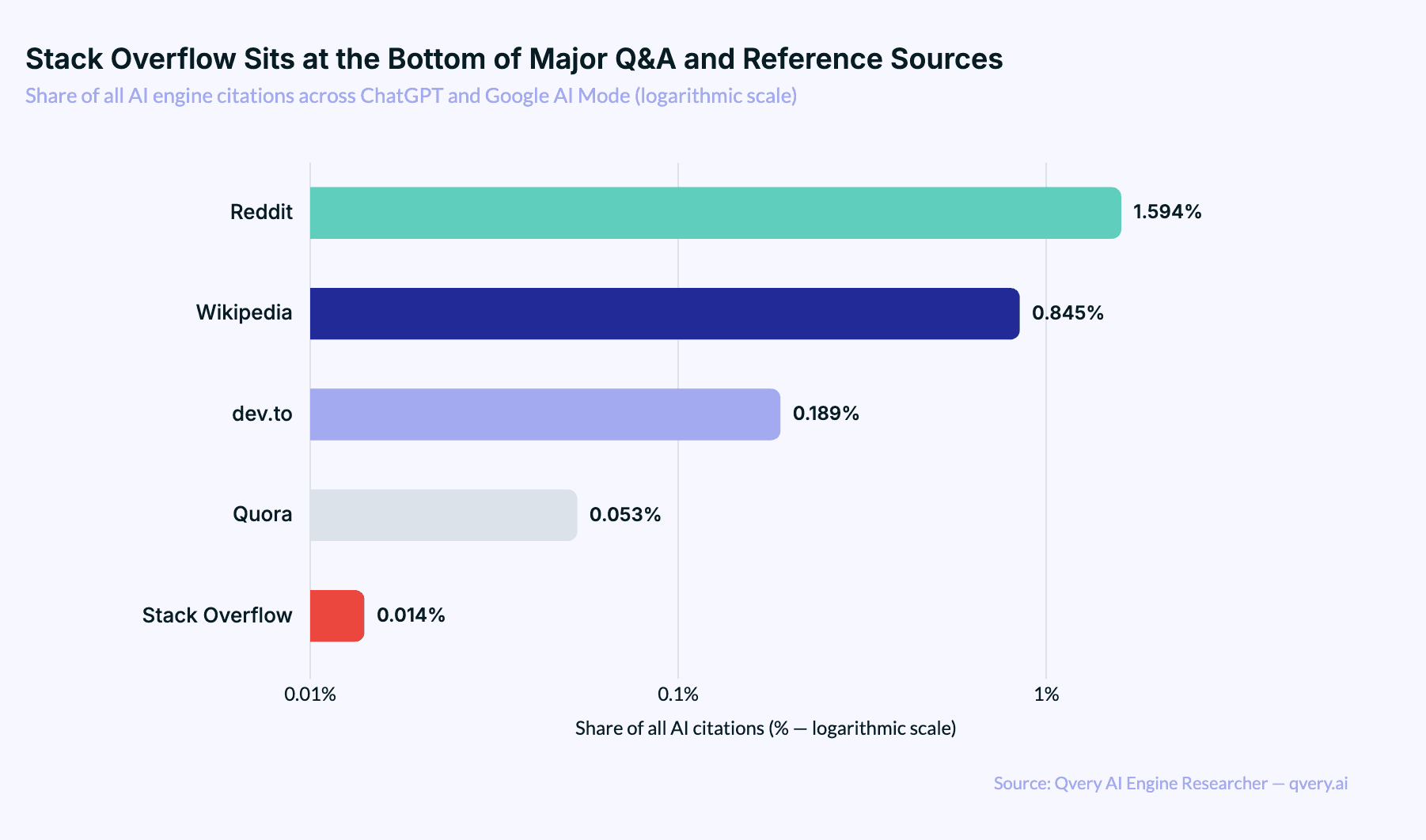

Stack Overflow Is the Smallest Major Q&A Source in AI Search

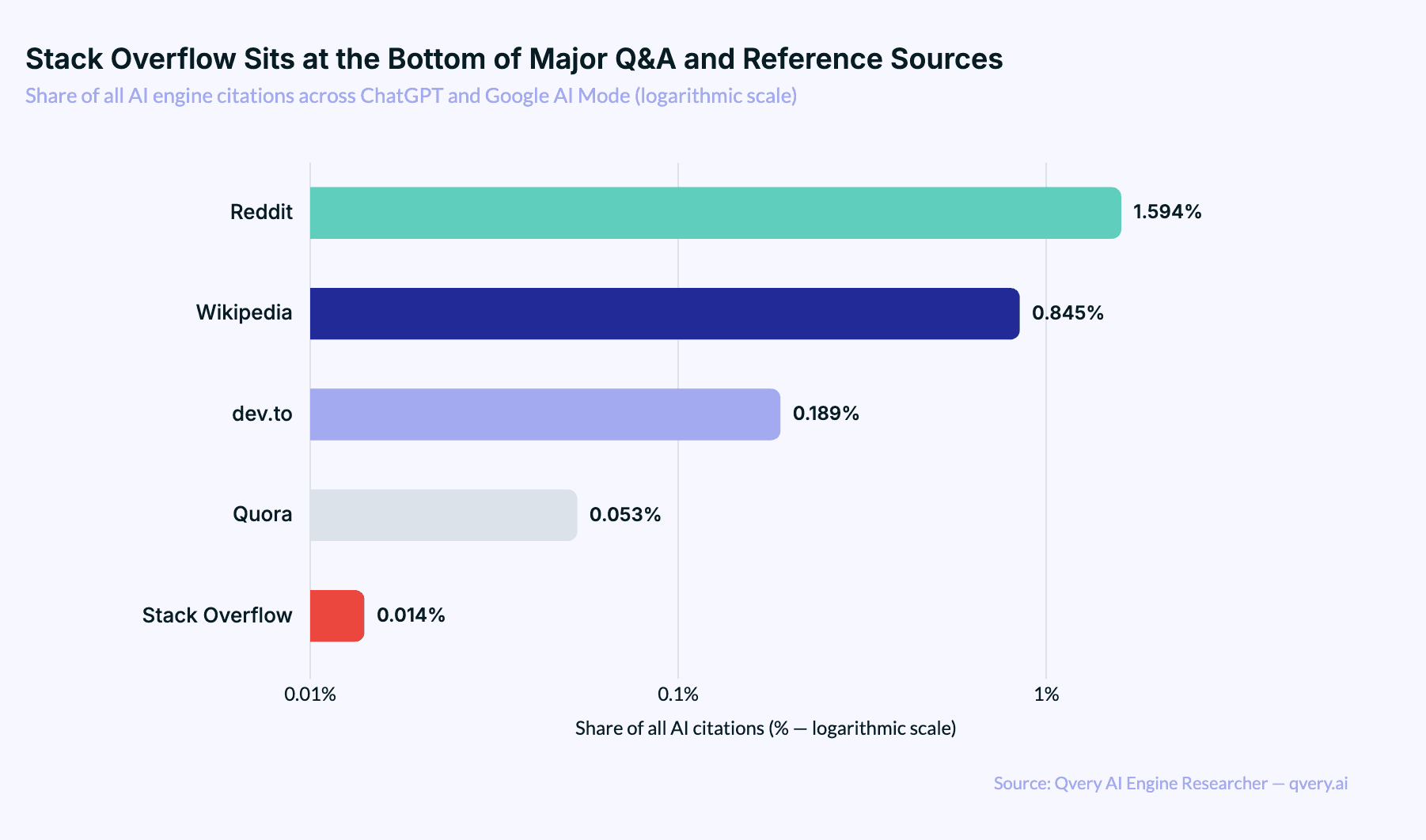

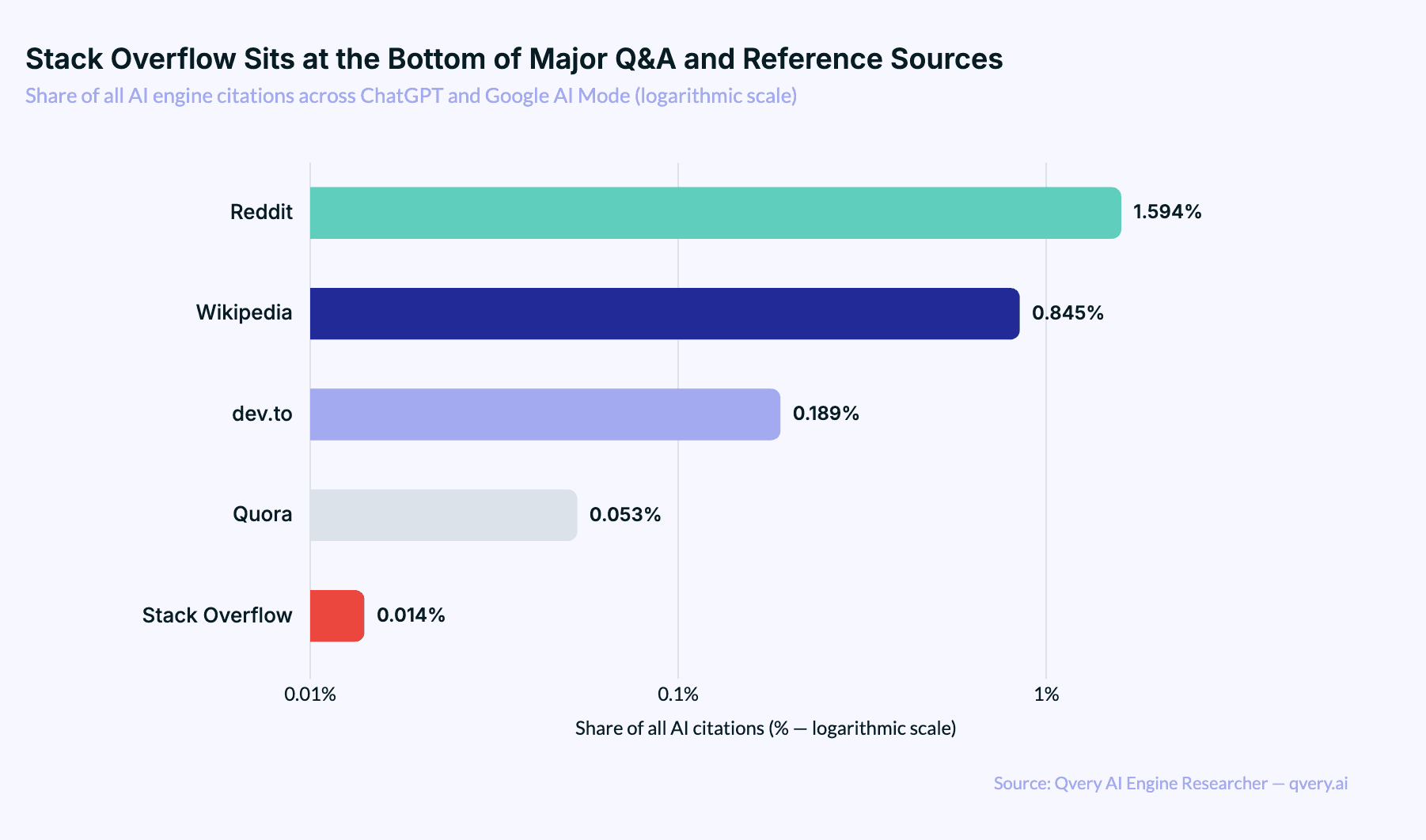

Among Q&A and developer-knowledge platforms, the gap is brutal. Reddit gets 117 times more citations than Stack Overflow. dev.to, a developer blogging platform that didn't exist before 2017, gets 14 times more. Wikipedia gets 62 times more. Even Quora, a Q&A platform that ChatGPT cites zero times, beats Stack Overflow on Google AI Mode by a factor of four.

Stack Overflow's 0.014% share of all AI engine citations is the kind of number you'd expect for a niche regional forum, not for the largest developer reference site on the open web. The volume gap is the surprise. Stack Overflow has more answered questions than the rest of UGC combined. It still loses on every comparison that matters.

dev.to Out-Cites Stack Overflow by an Order of Magnitude

The dev.to comparison is the cleanest way to see what's happening. Both platforms target the same audience. Both publish technical content. Both are open to anyone who wants to write a tutorial or share a code snippet. By any reasonable measure, Stack Overflow has more authority, more content, and more historical weight.

dev.to gets cited 14 times more often. The gap widens to 19 times on Google AI Mode specifically.

The structural difference is content age. The most-cited dev.to articles in our dataset were published after 2022. Most of the Stack Overflow pages that do get cited are five to twelve years old. AI engines treat the dev.to articles as fresh information they need to retrieve. They treat the Stack Overflow pages as content they already know: the model has already memorized the answer, so citing the original feels redundant.

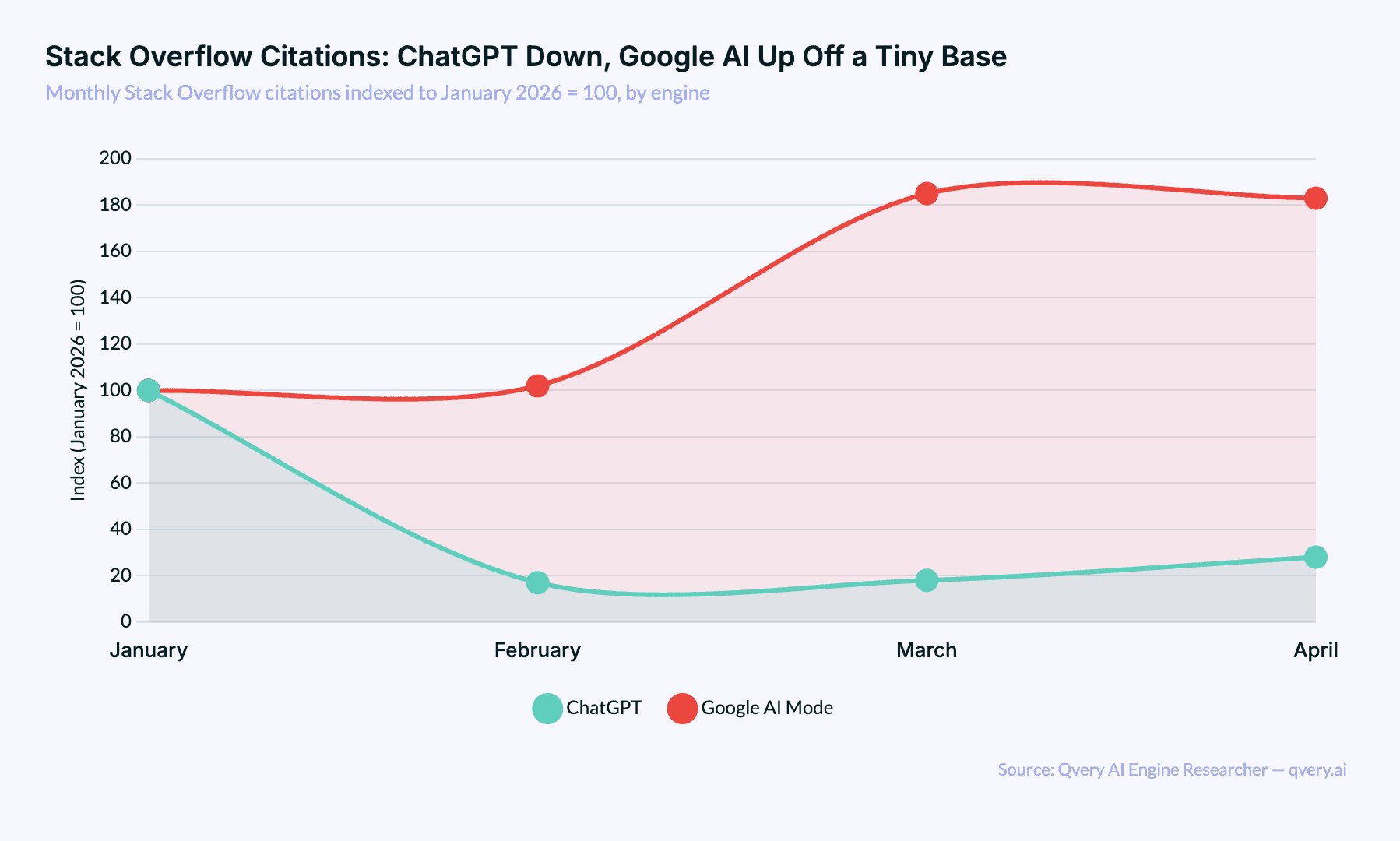

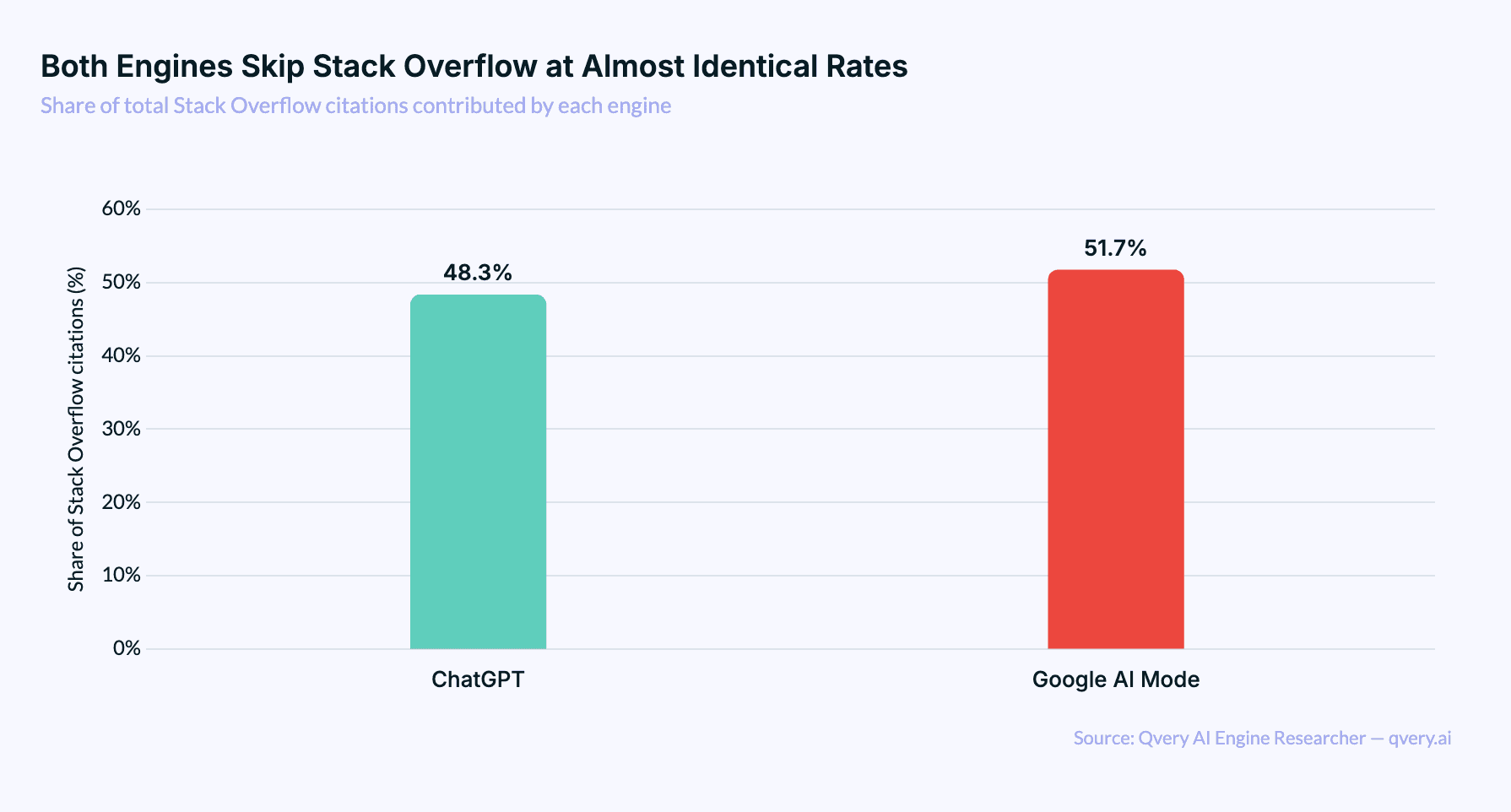

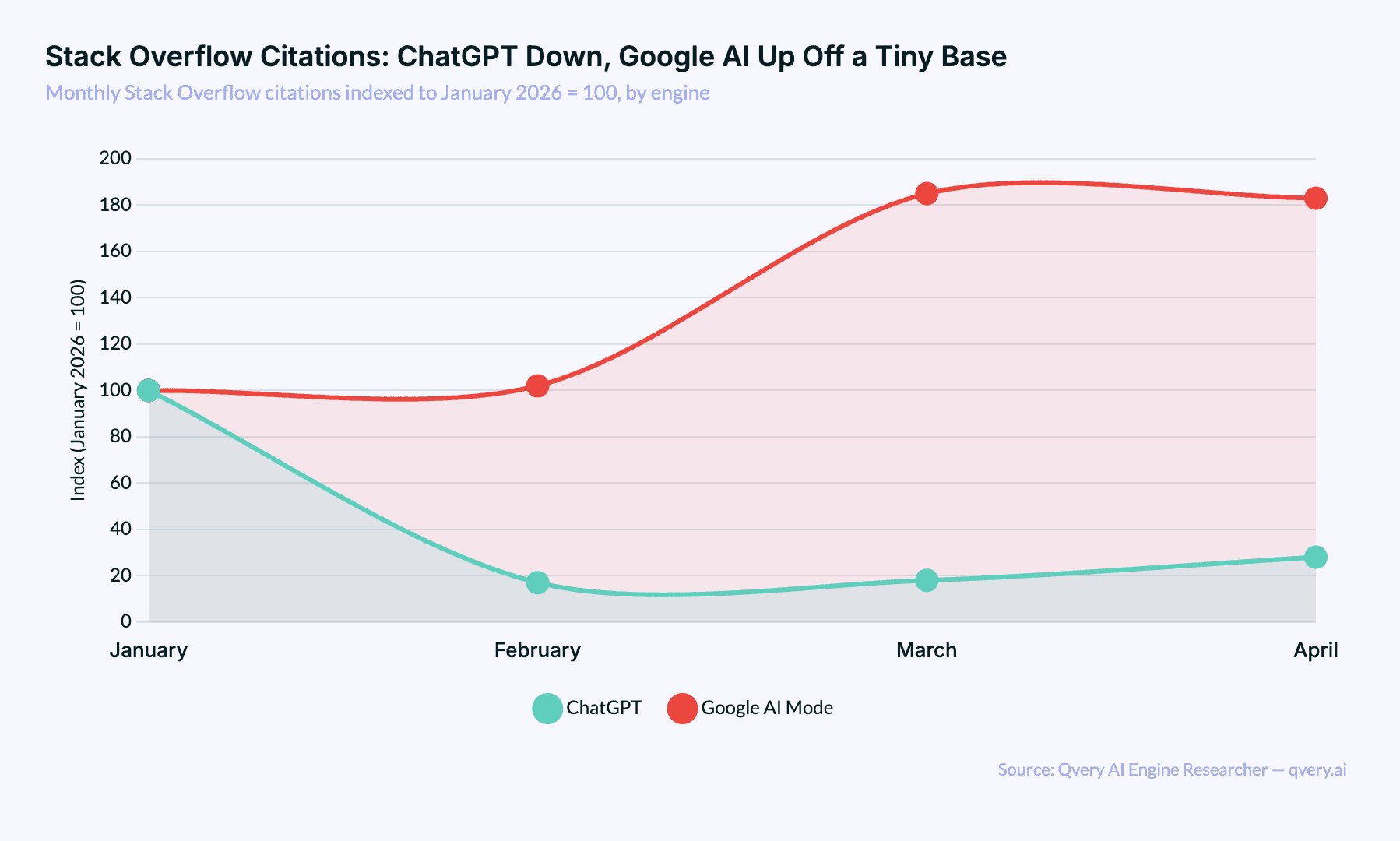

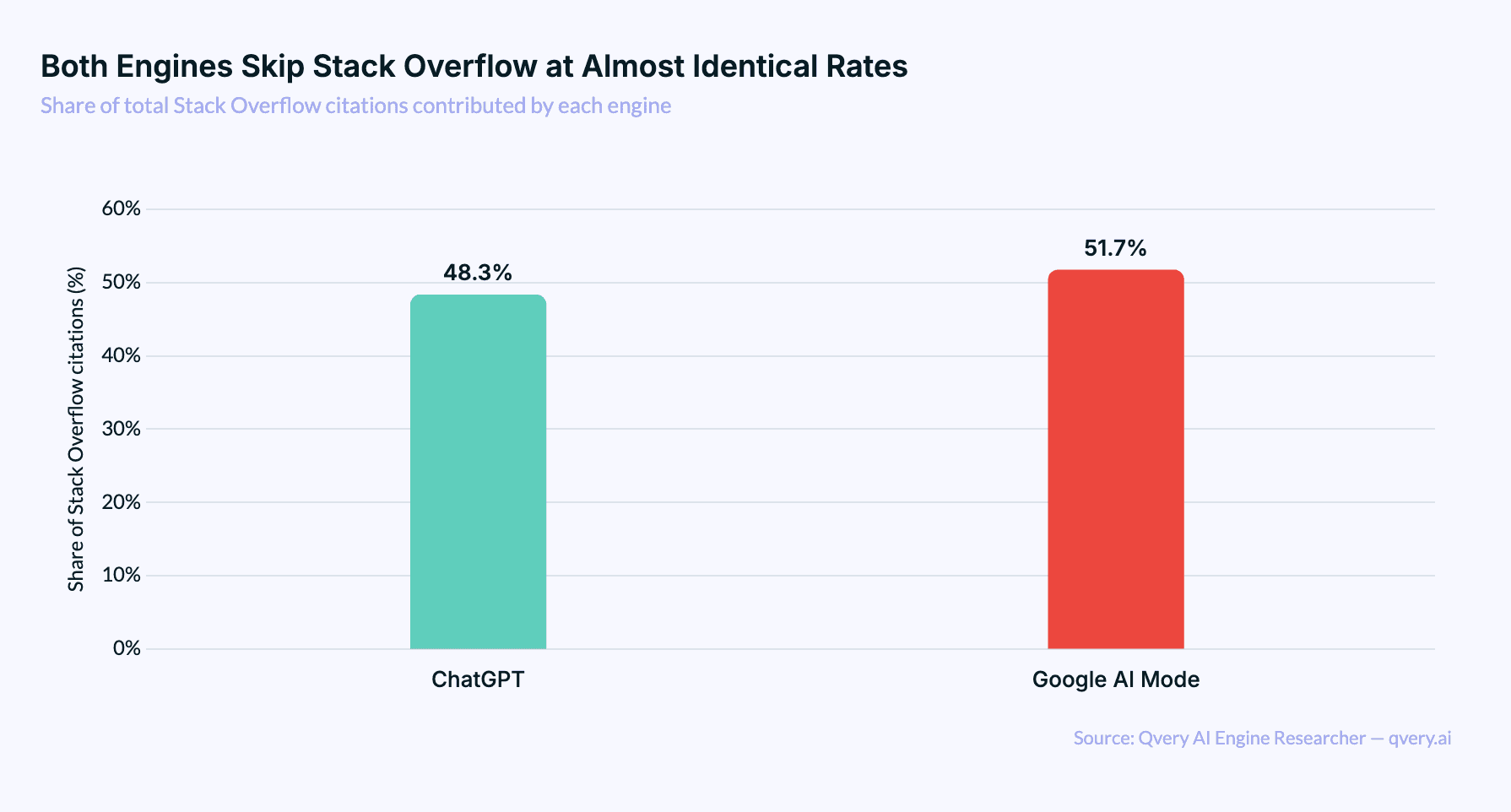

Both Engines Skip Stack Overflow at Almost Identical Rates

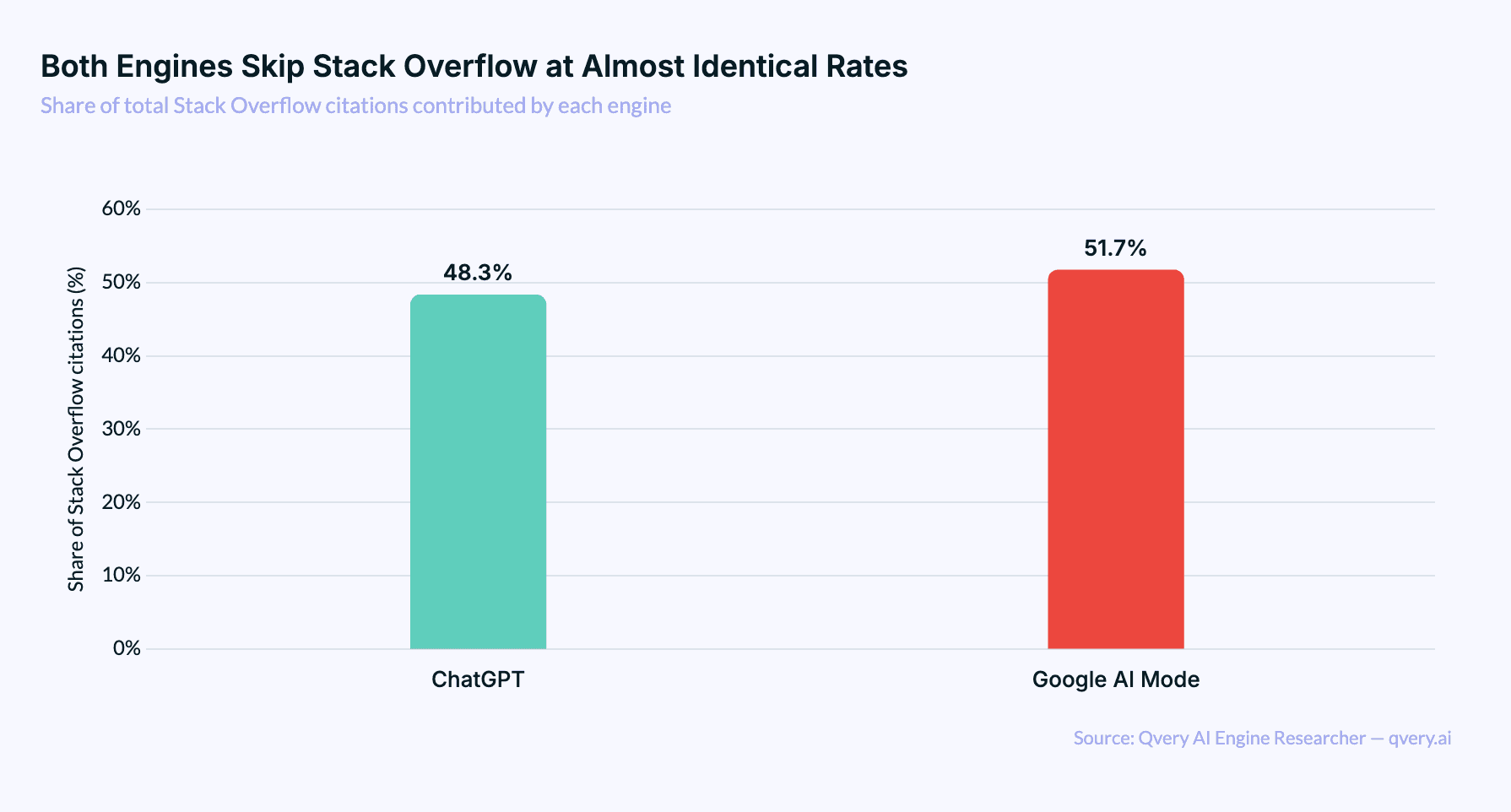

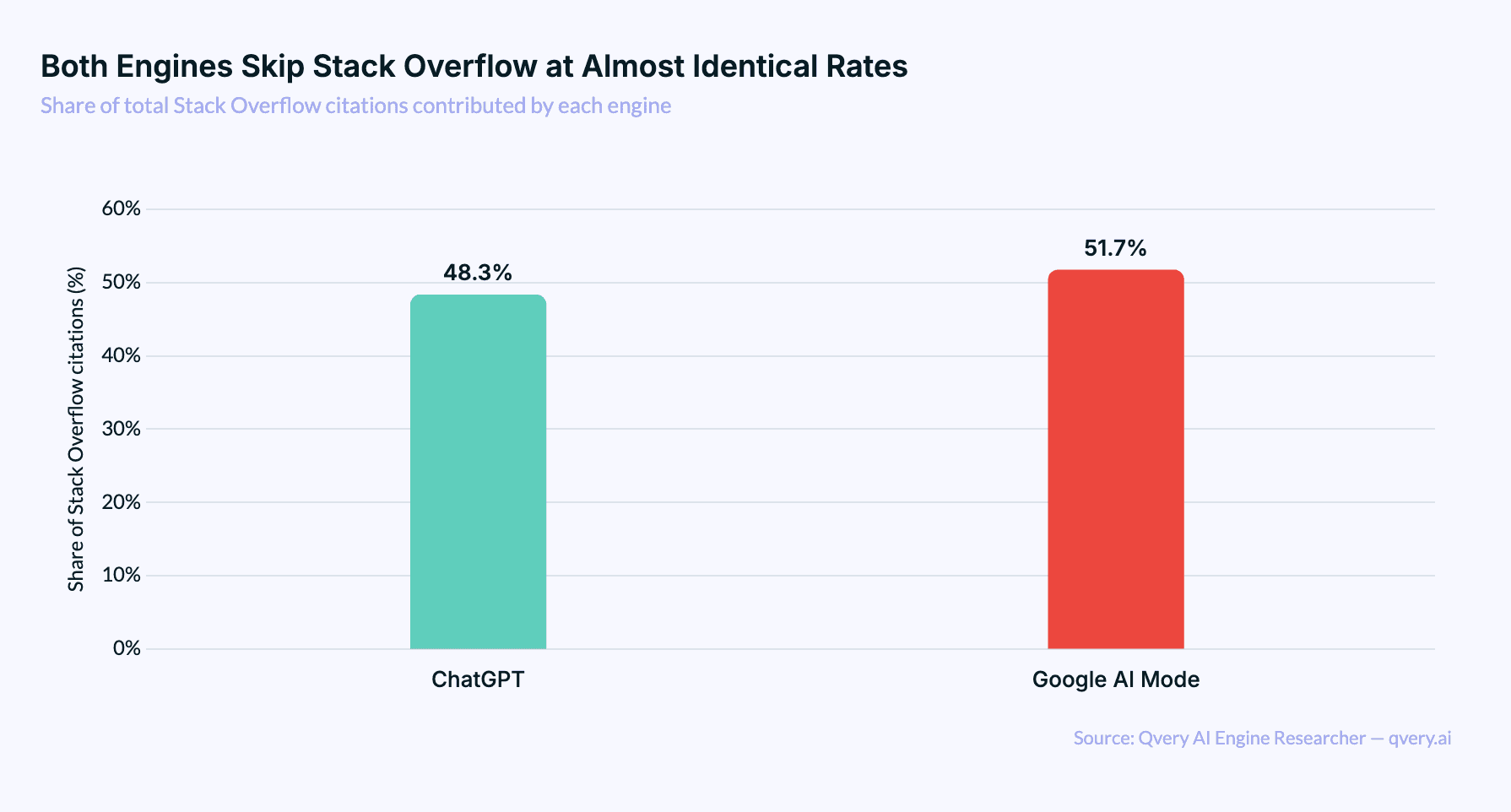

The engine split is the strangest part of this finding. For most major domains, ChatGPT and Google AI Mode disagree about something. Wikipedia is 97% ChatGPT. YouTube is 91% Google AI. Reddit is roughly even but for different reasons on each engine.

Stack Overflow is the rare case where both engines have the same opinion. Google AI Mode produces 51.7% of Stack Overflow citations. ChatGPT produces 48.3%. The split is so balanced that it tells you neither engine treats Stack Overflow as a meaningful priority. They're both citing it occasionally, when nothing better turns up.

Compare that to Stack Overflow's share within each engine. ChatGPT cites Stack Overflow in 0.018% of its citations. Google AI Mode cites it in 0.011%. Microscopic, on both sides.
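The two sets of percentages measure different things, and a quick back-of-the-envelope check suggests they are consistent with each other. The sketch below uses only the figures quoted above; the variable names are ours, and because the inputs are rounded, the outputs are approximate.

```python
# Figures quoted in this article (rounded).
# Share of all Stack Overflow citations produced by each engine.
so_split = {"chatgpt": 0.483, "google_ai_mode": 0.517}
# Stack Overflow's share of each engine's own citations (0.018% and 0.011%).
so_share_within = {"chatgpt": 0.00018, "google_ai_mode": 0.00011}

# Normalise to one Stack Overflow citation in total, then back out how many
# citations of any kind each engine must have produced per that one citation.
implied_totals = {
    engine: so_split[engine] / so_share_within[engine] for engine in so_split
}
all_citations = sum(implied_totals.values())

overall_share = 1 / all_citations
google_fraction = implied_totals["google_ai_mode"] / all_citations

print(f"Implied overall share: {overall_share:.4%}")
print(f"One Stack Overflow citation per {all_citations:,.0f} citations")
print(f"Implied Google AI Mode share of all citations: {google_fraction:.0%}")
```

With these rounded inputs, the implied overall share comes out at about 0.0135%, in line with the 0.014% headline figure. The same arithmetic implies that Google AI Mode produced roughly 64% of all citations in the dataset, which would explain how a near-even split of Stack Overflow citations can coexist with a lower within-engine share on Google AI Mode.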

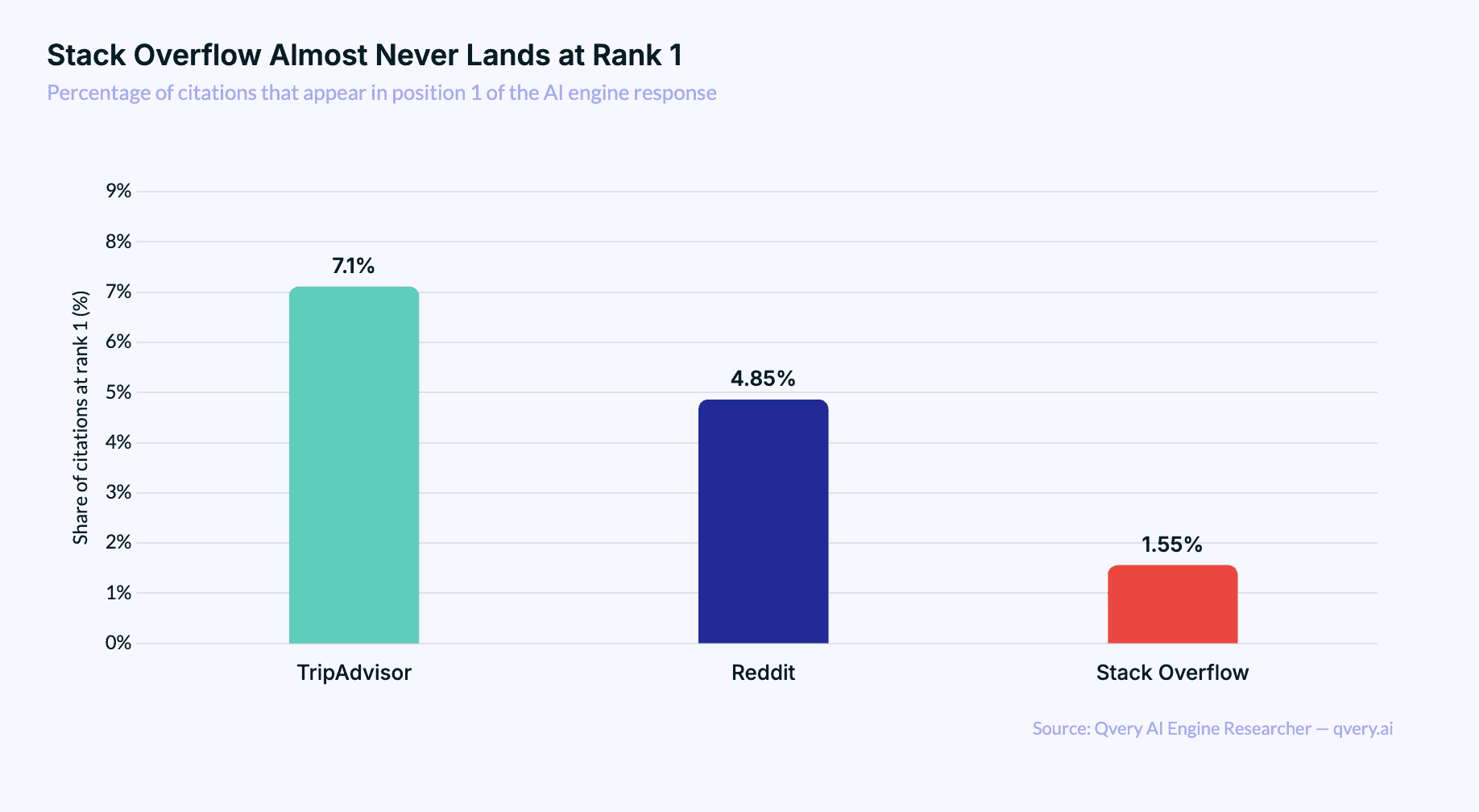

When Stack Overflow Is Cited, It Lands Deep in the List

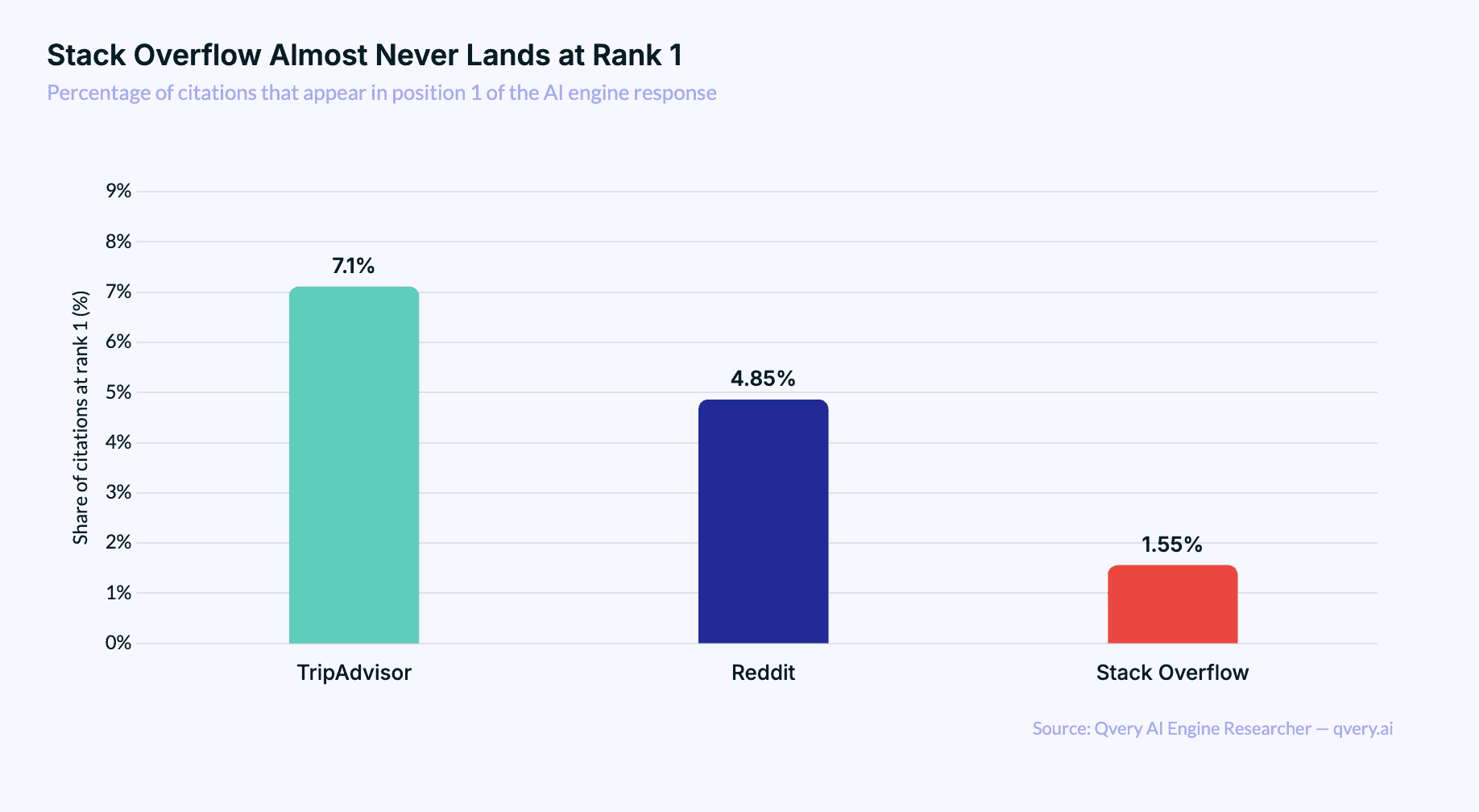

The rare times Stack Overflow does appear in an AI response, it's buried. Average citation rank on ChatGPT: 19.69. Average on Google AI Mode: 13.08. For comparison, Wikipedia averages 6.99 on ChatGPT, and Reddit averages 9.69. Stack Overflow shows up around the same point in the citation list where most readers have already stopped scrolling.

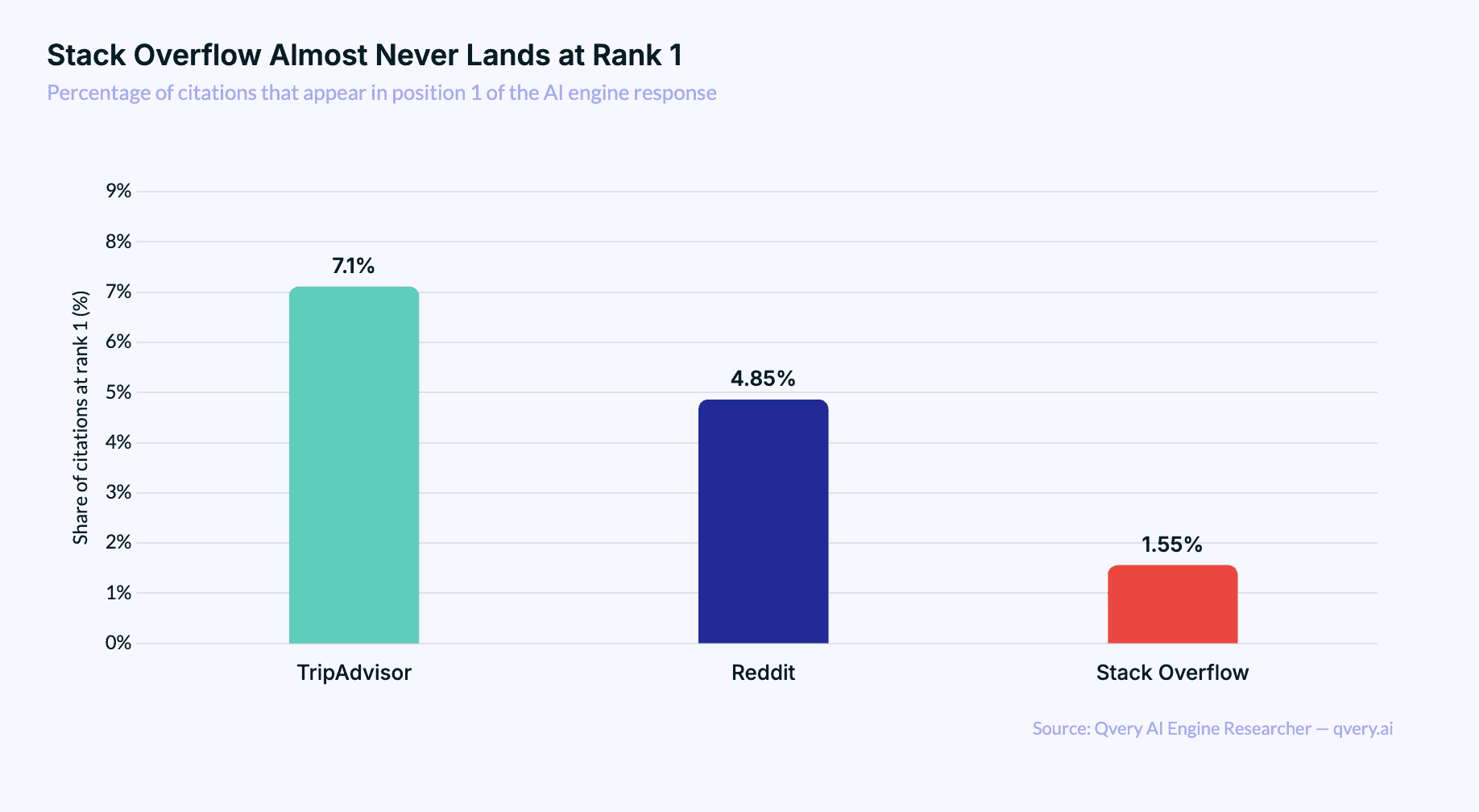

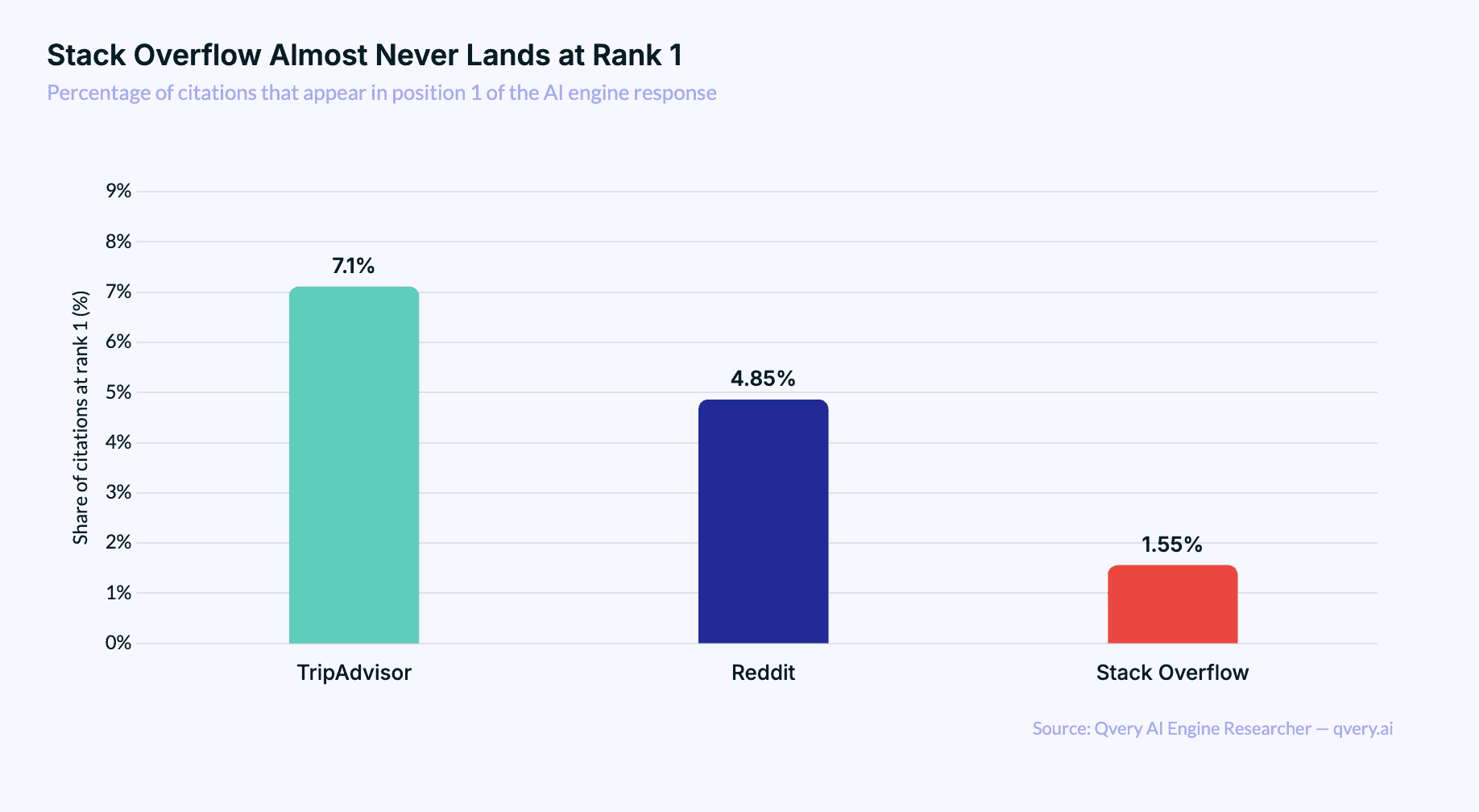

The position-1 rate is even sharper. Of all the Stack Overflow citations in our dataset, only 1.55% land at the top of the citation list. Reddit's position-1 rate is 4.85%. TripAdvisor's is 7.10%. Stack Overflow is barely above the noise floor.

The top-3 rate is 4.9%. Top-5 is 14.2%. Top-10 is 29.3%.
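For readers who want to compute the same position metrics on their own citation exports, here is a minimal sketch. The `ranks` list is made-up illustrative data, not our dataset, and `top_k_rate` is a hypothetical helper name. It assumes each citation is recorded with its 1-based position in the response's citation list.

```python
from statistics import mean


def top_k_rate(ranks: list[int], k: int) -> float:
    """Fraction of citations whose 1-based rank is k or better."""
    if not ranks:
        raise ValueError("ranks must not be empty")
    return sum(1 for r in ranks if r <= k) / len(ranks)


# Hypothetical ranks for one domain's citations (illustrative only).
ranks = [1, 4, 7, 9, 12, 15, 18, 21, 26, 30]

print(f"average rank: {mean(ranks):.2f}")
for k in (1, 3, 5, 10):
    print(f"top-{k} rate: {top_k_rate(ranks, k):.1%}")
```

The rates are cumulative, so each top-k figure includes every citation counted in the smaller cutoffs.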

The Code-Snippet Sweet Spot Is the Only Place Stack Overflow Wins

Stack Overflow does still earn citations occasionally. The pattern is narrow. The pages that get cited are technical Q&A threads with very specific code-level questions where no equivalent answer exists anywhere else. JavaScript link rewriting. PDF generation library comparisons. Plotly dashboard exports. Boilerplate avoidance for a particular framework.

The single most-cited Stack Overflow URL in our dataset is a 12-year-old thread on automatically converting external links to affiliate links. That one URL alone accounts for more than 10% of every Stack Overflow citation we've recorded. The next four most-cited Stack Overflow URLs combined don't match it.
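A concentration check like this is simple to run on any citation log. The sketch below is illustrative only: the URL labels and counts are placeholders we made up, not the figures from our dataset, and it assumes the log holds one entry per recorded citation.

```python
from collections import Counter

# Hypothetical citation log: one entry per recorded citation (placeholder labels).
cited_urls = (
    ["thread-a"] * 6
    + ["thread-b"] * 2
    + ["thread-c", "thread-d", "thread-e"]
    + [f"other-{i}" for i in range(39)]
)

counts = Counter(cited_urls)
total = sum(counts.values())
ranked = counts.most_common()  # sorted by citation count, highest first

top_url, top_count = ranked[0]
next_four = sum(count for _, count in ranked[1:5])

print(f"{top_url}: {top_count / total:.1%} of citations")
print(f"next four combined: {next_four / total:.1%}")
```

When the top URL's share exceeds that of the next four combined, as in this toy data, a domain's citation footprint rests on a single page rather than on the site as a whole.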

Stack Overflow earns AI citations only when it has the kind of hyper-narrow code answer that doesn't exist on Reddit, dev.to, or Medium. Everything else gets routed elsewhere.

This pattern explains why Stack Overflow citations cluster so tightly around developer-tooling topics: form builders, affiliate code, chart and graph libraries, PDF and HTML export, design tools. There's almost nothing in the dataset about programming languages or frameworks broadly.

For a question like "how should I learn React in 2026?", AI engines go to Reddit, not Stack Overflow. They only go to Stack Overflow when they need a specific snippet that's been buried in a thread for a decade.

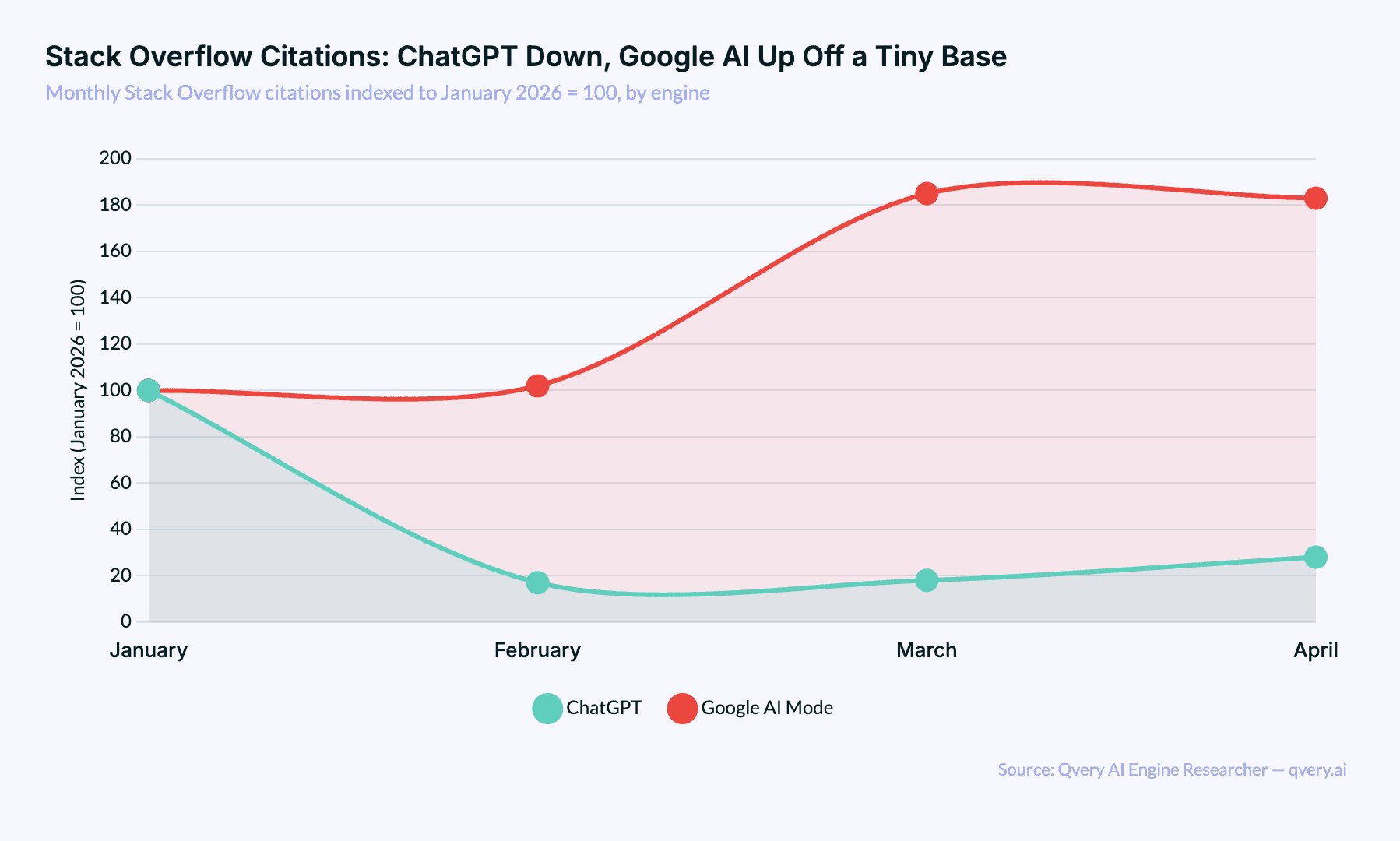

Stack Overflow's Database Is Already Inside the Models

The simplest explanation for what's happening is also the most consequential one for any developer-content platform: AI engines aren't ignoring Stack Overflow. They've absorbed it.

Every major foundation model has had access to Stack Overflow's data dumps for years. The site explicitly licenses its content under Creative Commons. OpenAI, Google, Anthropic, and every other model trainer have used Stack Overflow as a primary source for code reasoning since at least 2020. The site is sitting inside the models, not outside them. When you ask ChatGPT how to write a SQL JOIN, it doesn't need to retrieve a Stack Overflow page. It already wrote the answer ten million times in training.

dev.to and Reddit don't have that problem. Reddit's data deal with OpenAI happened in 2024, and most of the cited Reddit threads in our dataset post-date the major training cutoffs. dev.to is younger and was less heavily used as training data. Both platforms have content that AI engines need to retrieve because they don't already have it baked in.

This is not going to reverse. Stack Overflow's training-data presence in foundation models grows with every release cycle rather than shrinking. The fresher Stack Overflow content from 2024 onward will eventually get absorbed too.

For brands building developer-facing content today, the lesson is uncomfortable. Investing in Stack Overflow answers as an AI visibility play is investing in citations that will not happen.

The three pillars of AI engine visibility still apply: your own website, third-party mentions, and UGC. Stack Overflow doesn't fit cleanly into any of them. It's a fourth category, and it's the category AI engines have already finished reading.

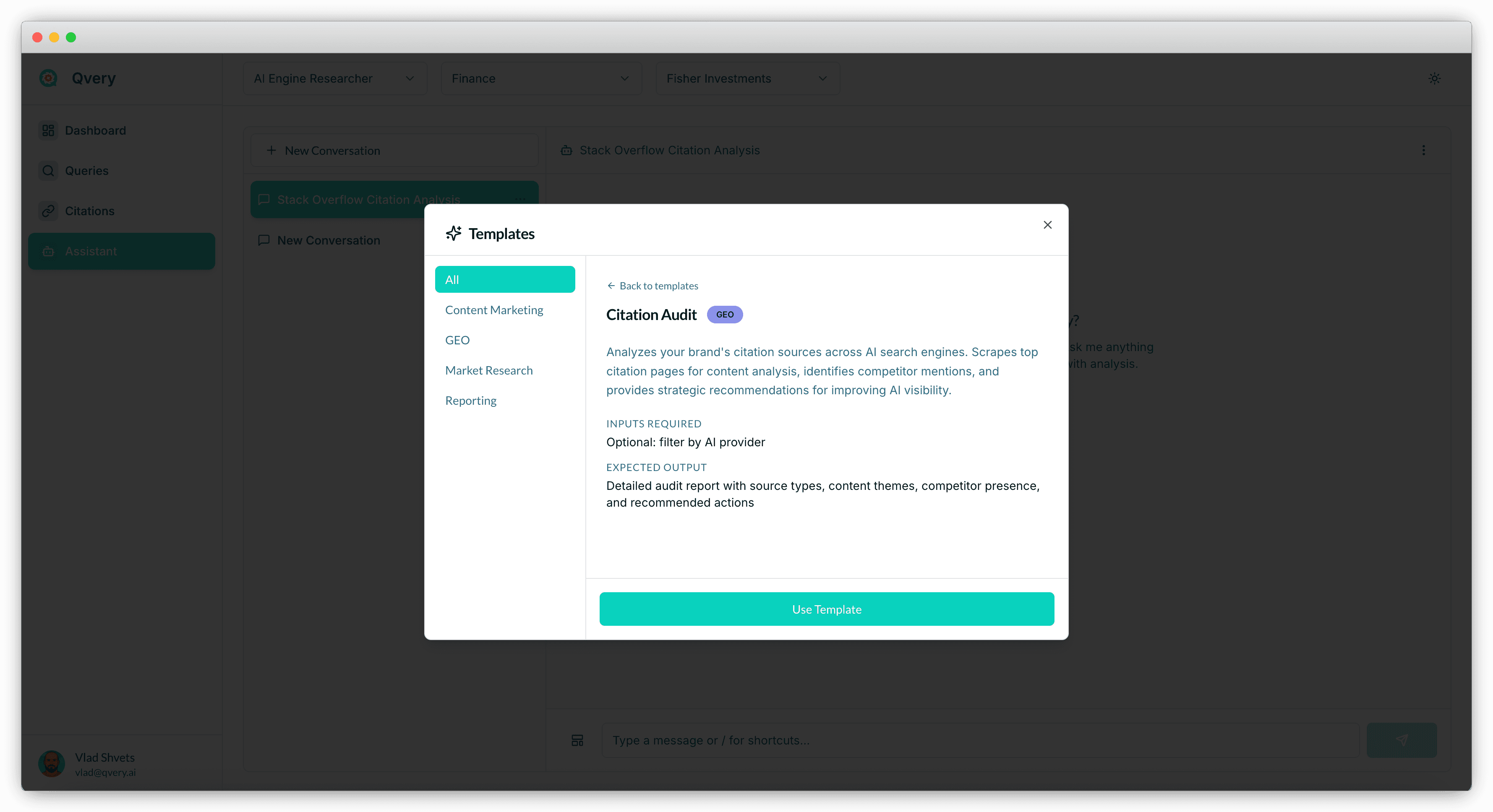

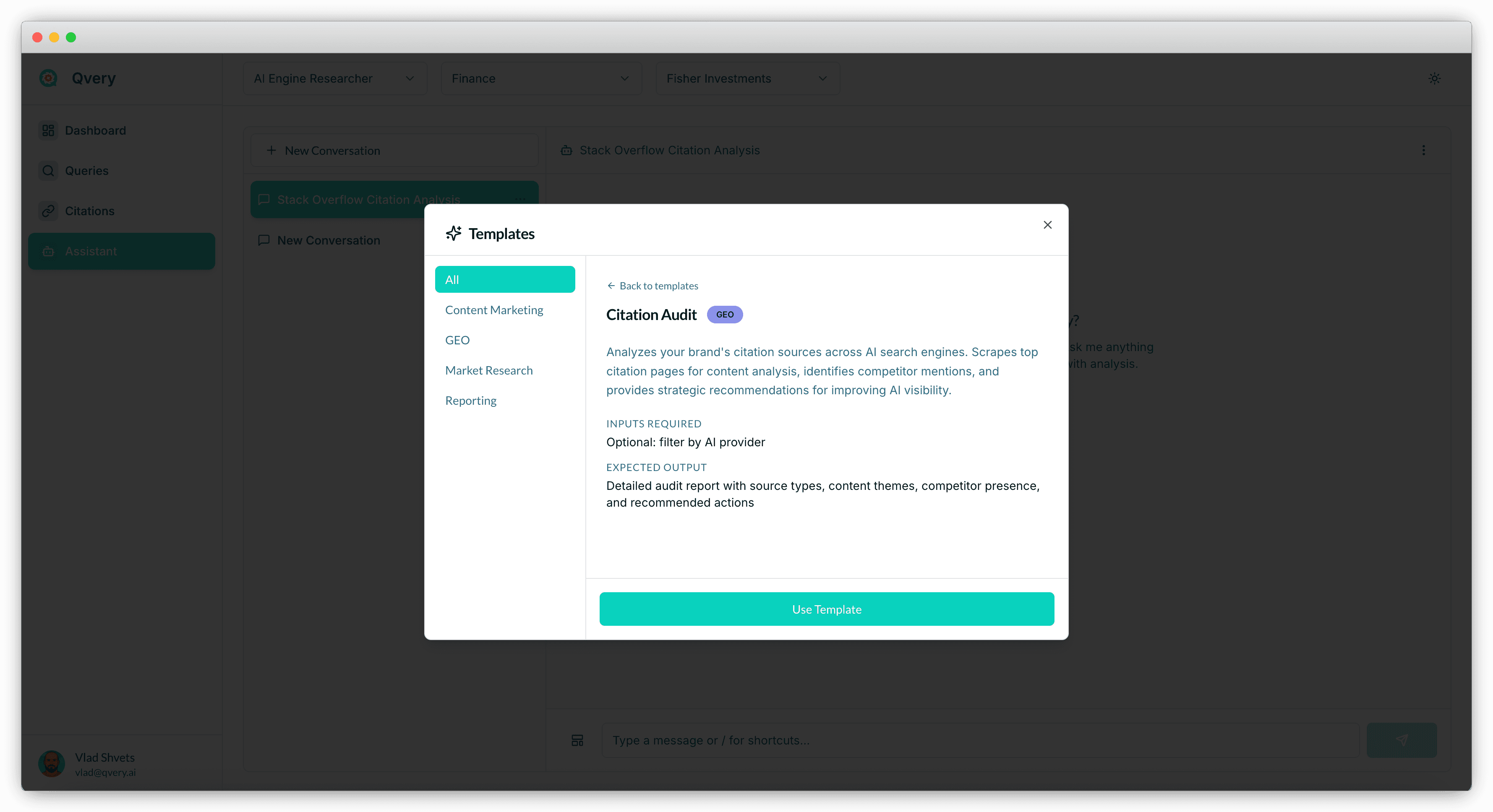

Check Your Stack Overflow Citation Footprint With Qvery Assistant

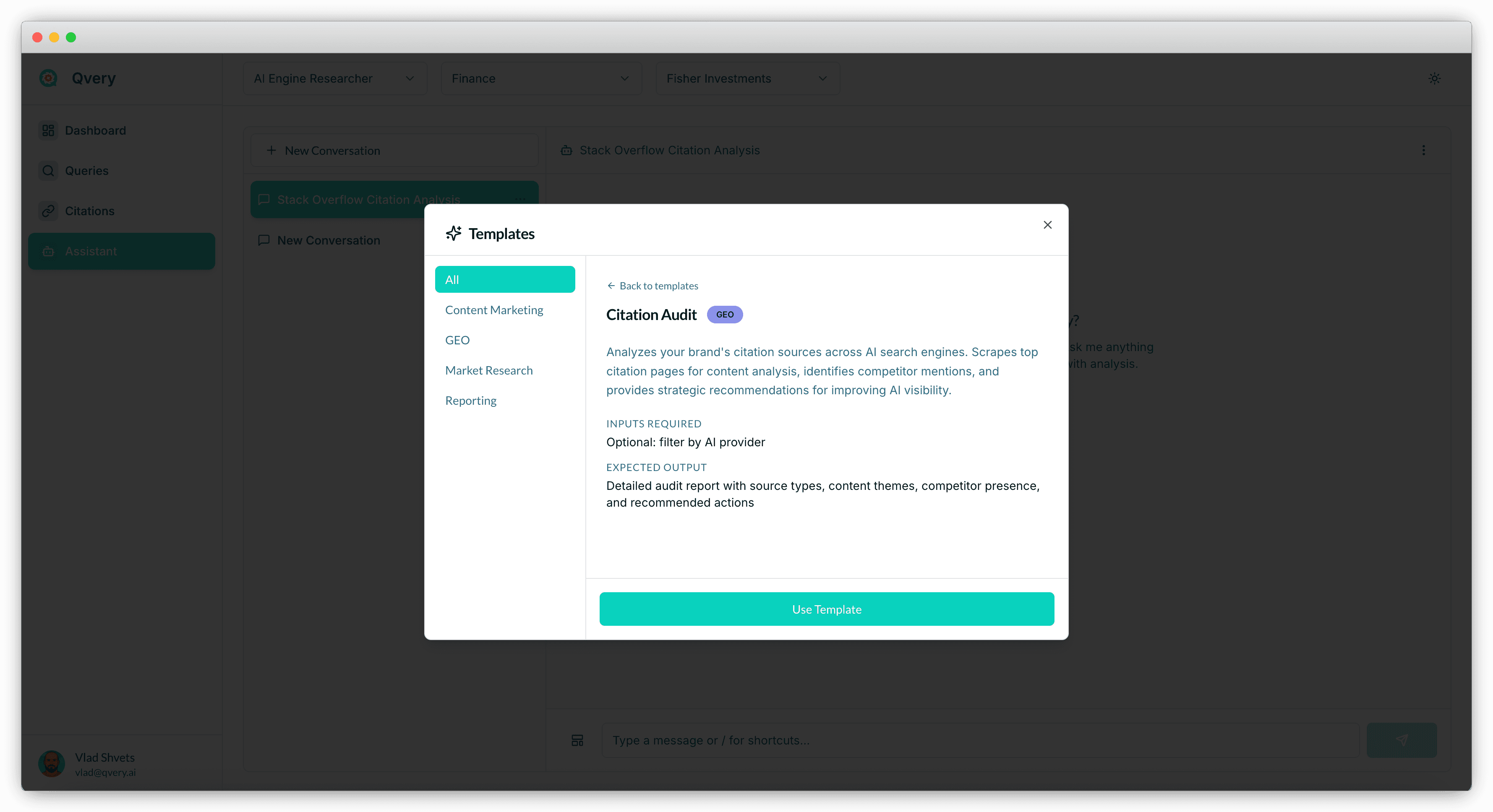

We ran this analysis across our entire dataset to surface the patterns. The next step is doing it for your brand specifically, and that's exactly what Qvery Assistant is built for.

Qvery Assistant is the AI agent built into your Qvery account. It has full context on every query you track, every citation you've earned, every brand mention recorded for you and your competitors. You interact with it in two ways:

Pick a template. Templates are pre-built multi-agent workflows. Run an AI Visibility Report for the past 30 days, a Citation Gap Analysis against your top three competitors, or a Competitor Deep Dive on whoever is outperforming you. The Assistant handles the orchestration. You get a structured deliverable.

Ask any question in plain English. "How does my Stack Overflow citation footprint compare to my competitors?" "Which of my topics surface Stack Overflow at all?" "Where am I missing developer-source citations entirely?" The Assistant queries your data and answers in seconds.

You'll know in seconds whether your brand looks like the average pattern in this article, whether you're outperforming it, or whether something is breaking against you. Sign up for Qvery to start.

Most developers I know assume Stack Overflow is the bedrock of AI search visibility for technical content. It's the world's largest developer Q&A site. It has 24 million answered questions.

If AI engines were going to cite anyone for code questions, surely Stack Overflow would be the obvious first stop.

It isn't. We've been tracking AI engine citations across ChatGPT and Google AI Mode through Qvery's AI Engine Researcher, and Stack Overflow barely shows up. It accounts for 0.014% of all citations. That's one citation in every seven thousand. It ranks well outside the top fifty cited domains, behind sites that nobody would describe as a primary developer resource.

The reason isn't that Stack Overflow lost its quality. The reason is that AI engines have already read it.

Every answer, every accepted solution, every code snippet that's been on the site since 2020 is sitting inside the foundation models. There's no need to cite a source the model has already absorbed. Stack Overflow's invisibility in AI search is a side effect of being too useful for too long.

A success story, technically. Just not the success Stack Overflow would have picked.

Stack Overflow Is the Smallest Major Q&A Source in AI Search

Among Q&A and developer-knowledge platforms, the gap is brutal. Reddit gets 117 times more citations than Stack Overflow. dev.to, a developer blogging platform that didn't exist before 2017, gets 14 times more. Wikipedia gets 62 times more. Even Quora, a Q&A platform that ChatGPT cites zero times, beats Stack Overflow on Google AI Mode by a factor of four.

Stack Overflow's 0.014% share of all AI engine citations is the kind of number you'd expect for a niche regional forum, not for the largest developer reference site on the open web. The volume gap is the surprise. Stack Overflow has more answered questions than the rest of UGC combined. It still loses on every comparison that matters.

dev.to Out-Cites Stack Overflow by an Order of Magnitude

The dev.to comparison is the cleanest way to see what's happening. Both platforms target the same audience. Both publish technical content. Both are open to anyone who wants to write a tutorial or share a code snippet. By any reasonable measure, Stack Overflow has more authority, more content, and more historical weight.

dev.to gets cited 14 times more often. The gap widens to 19 times on Google AI Mode specifically.

The structural difference is content age. The most-cited dev.to articles in our dataset were published after 2022. Most of the Stack Overflow pages that do get cited are five to twelve years old. AI engines treat the dev.to articles as fresh information they need to retrieve. They treat the Stack Overflow pages as content they already know (the model has already memorized the answer. Citing the original feels redundant.)

Both Engines Skip Stack Overflow at Almost Identical Rates

The engine split is the strangest part of this finding. For most major domains, ChatGPT and Google AI Mode disagree about something. Wikipedia is 97% ChatGPT. YouTube is 91% Google AI. Reddit is roughly even but for different reasons on each engine.

Stack Overflow is the rare case where both engines have the same opinion. Google AI Mode produces 51.7% of Stack Overflow citations. ChatGPT produces 48.3%. The split is so balanced that it tells you neither engine treats Stack Overflow as a meaningful priority. They're both citing it occasionally, when nothing better turns up.

Compare that to Stack Overflow's share within each engine. ChatGPT cites Stack Overflow in 0.018% of its citations. Google AI Mode cites it in 0.011%. Microscopic, on both sides.

When Stack Overflow Is Cited, It Lands Deep in the List

The rare times Stack Overflow does appear in an AI response, it's buried. Average citation rank on ChatGPT: 19.69. Average on Google AI Mode: 13.08. For comparison, Wikipedia averages 6.99 on ChatGPT, and Reddit averages 9.69. Stack Overflow shows up around the same point in the citation list where most readers have already stopped scrolling.

The position-1 rate is even sharper. Out of every Stack Overflow citation in our dataset, only 1.55% land at the top of the citation list. Reddit's position-1 rate is 4.85%. TripAdvisor's is 7.10%. Stack Overflow is barely above the noise floor.

The top-3 rate is 4.9%.

Top-5 is 14.2%.

Top-10 is 29.3%.
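For readers who want to reproduce these position metrics on their own citation data, here is a minimal Python sketch. It assumes each citation is recorded with its rank in the response's citation list, where rank 1 is the top. The function name `top_k_rate` and the sample ranks are hypothetical illustrations, not the study's data or Qvery's implementation.

```python
from statistics import mean


def top_k_rate(ranks, k):
    """Fraction of citations whose rank is k or better (rank 1 = top of the list)."""
    if not ranks:
        raise ValueError("ranks must not be empty")
    return sum(1 for r in ranks if r <= k) / len(ranks)


# Hypothetical ranks for ten citations of one domain; NOT data from the study.
example_ranks = [1, 4, 7, 9, 12, 15, 18, 22, 25, 30]

# Position-1, top-3, top-5 and top-10 rates, as reported in this section.
rates = {k: top_k_rate(example_ranks, k) for k in (1, 3, 5, 10)}
average_rank = mean(example_ranks)

print(rates)         # {1: 0.1, 3: 0.1, 5: 0.2, 10: 0.4}
print(average_rank)  # 14.3
```

The rates are cumulative, so they can never fall as k grows. A domain whose top-10 rate is under 30% has most of its citations sitting below the tenth slot.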

The Code-Snippet Sweet Spot Is the Only Place Stack Overflow Wins

Stack Overflow does still earn citations occasionally. The pattern is narrow. The pages that get cited are technical Q&A threads with very specific code-level questions where no equivalent answer exists anywhere else. JavaScript link rewriting. PDF generation library comparisons. Plotly dashboard exports. Boilerplate avoidance for a particular framework.

The single most-cited Stack Overflow URL in our dataset is a 12-year-old thread on automatically converting external links to affiliate links. That one URL alone accounts for more than 10% of all the Stack Overflow citations we've recorded. The next four most-cited Stack Overflow URLs combined don't match it.
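This kind of concentration is easy to check on any citation log. The sketch below assumes the log is a flat list of cited URLs, one entry per citation. The `concentration` helper and the `example.com` URLs are hypothetical placeholders, not the study's data.

```python
from collections import Counter


def concentration(cited_urls, next_n=4):
    """Return (top URL, its share of citations, combined share of the next `next_n` URLs)."""
    if not cited_urls:
        raise ValueError("cited_urls must not be empty")
    counts = Counter(cited_urls).most_common()  # sorted by citation count, descending
    total = sum(count for _, count in counts)
    top_url, top_count = counts[0]
    next_count = sum(count for _, count in counts[1:1 + next_n])
    return top_url, top_count / total, next_count / total


# Hypothetical citation log with placeholder URLs; NOT data from the study.
log = (
    ["https://example.com/q/a"] * 6
    + ["https://example.com/q/b"] * 2
    + ["https://example.com/q/c", "https://example.com/q/d"]
    + [f"https://example.com/q/other-{i}" for i in range(10)]
)

top_url, top_share, next_share = concentration(log)
print(top_url, top_share, next_share)  # https://example.com/q/a 0.3 0.25
```

When the top share exceeds the combined share of the next four URLs, as in this toy log, a domain's citation footprint rests largely on a single page.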

Stack Overflow earns AI citations only when it has the kind of hyper-narrow code answer that doesn't exist on Reddit, dev.to, or Medium. Everything else gets routed elsewhere.

This pattern explains why Stack Overflow citations cluster so tightly around developer-tooling topics: form builders, affiliate code, chart and graph libraries, PDF and HTML export, design tools. There's almost nothing in the dataset about programming languages or frameworks broadly.

AI engines don't go to Stack Overflow for "how should I learn React in 2026?" — they go to Reddit. They only go to Stack Overflow when they need a specific snippet that's been buried in a thread for a decade.

Stack Overflow's Database Is Already Inside the Models

The simplest explanation for what's happening is also the most consequential one for any developer-content platform: AI engines aren't ignoring Stack Overflow. They've absorbed it.

Every major foundation model has had access to Stack Overflow's data dumps for years. The site explicitly licenses its content under Creative Commons (CC BY-SA). OpenAI, Google, Anthropic, and every other model trainer have used Stack Overflow as a primary source for code reasoning since at least 2020. The site is sitting inside the models, not outside them. When you ask ChatGPT how to write a SQL JOIN, it doesn't need to retrieve a Stack Overflow page. It already wrote the answer ten million times in training.

dev.to and Reddit don't have that problem. Reddit's data deal with OpenAI happened in 2024, and most of the cited Reddit threads in our dataset post-date the major training cutoffs. dev.to is younger and was less heavily used as training data. Both platforms have content that AI engines need to retrieve because they don't already have it baked in.

This is not going to reverse. Stack Overflow's presence in foundation-model training data grows with every release cycle; it does not shrink. The fresher Stack Overflow content from 2024 onward will eventually get absorbed too.

For brands building developer-facing content today, the lesson is uncomfortable. Investing in Stack Overflow answers as an AI visibility play is investing in citations that will not happen.

The three pillars of AI engine visibility still apply: your own website, third-party mentions, and UGC. Stack Overflow doesn't fit cleanly into any of them. It's a fourth category, and it's the category AI engines have already finished reading.

Check Your Stack Overflow Citation Footprint With Qvery Assistant

We ran this analysis across our entire dataset to surface the patterns. The next step is doing it for your brand specifically, and that's exactly what Qvery Assistant is built for.

Qvery Assistant is the AI agent built into your Qvery account. It has full context on every query you track, every citation you've earned, every brand mention recorded for you and your competitors. You interact with it in two ways:

Pick a template. Templates are pre-built multi-agent workflows. Run an AI Visibility Report for the past 30 days, a Citation Gap Analysis against your top three competitors, or a Competitor Deep Dive on whoever is outperforming you. The Assistant handles the orchestration. You get a structured deliverable.

Ask any question in plain English. "How does my Stack Overflow citation footprint compare to my competitors?" "Which of my topics surface Stack Overflow at all?" "Where am I missing developer-source citations entirely?" The Assistant queries your data and answers in seconds.

You'll know in seconds whether your brand looks like the average pattern in this article, whether you're outperforming it, or whether something is breaking against you. Sign up for Qvery to start.

© 2026 Qvery AI OÜ