By Vlad Shvets

Listicles Are the Most Cited Content Type in AI Search

Listicles account for 45.8% of all classifiable AI engine citations. We analyzed our proprietary data to find out why, how they compare across industries, and what this means for your content strategy.

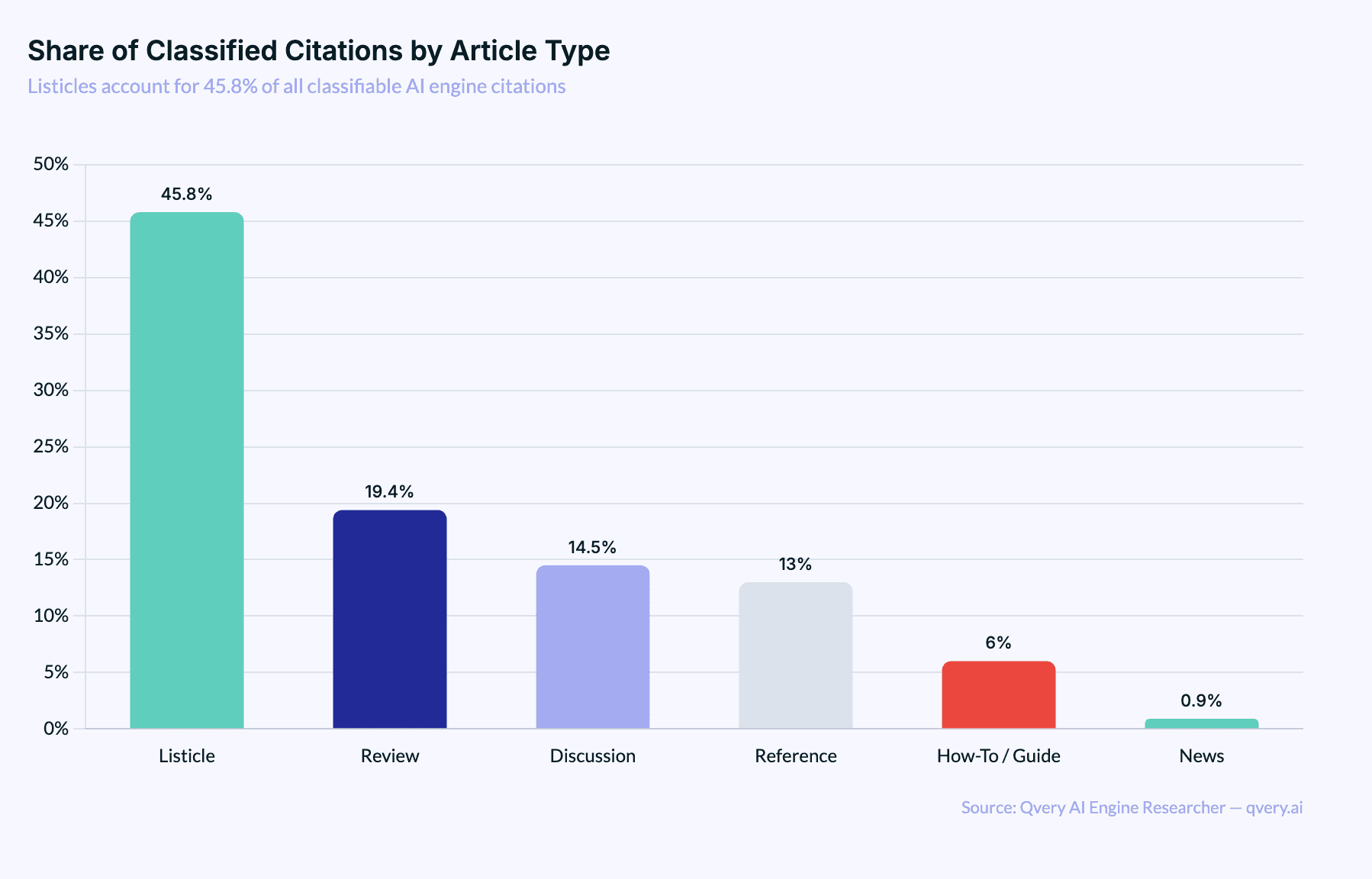

Of all the content formats you could write, listicles are the one AI search engines cite the most. Not tutorials. Not reviews. Not in-depth how-to guides. Listicles.

The "Top 10 Best Whatever" posts that content marketers have been writing since 2009 are, apparently, exactly what ChatGPT and Google AI Mode want to reference when they answer a question.

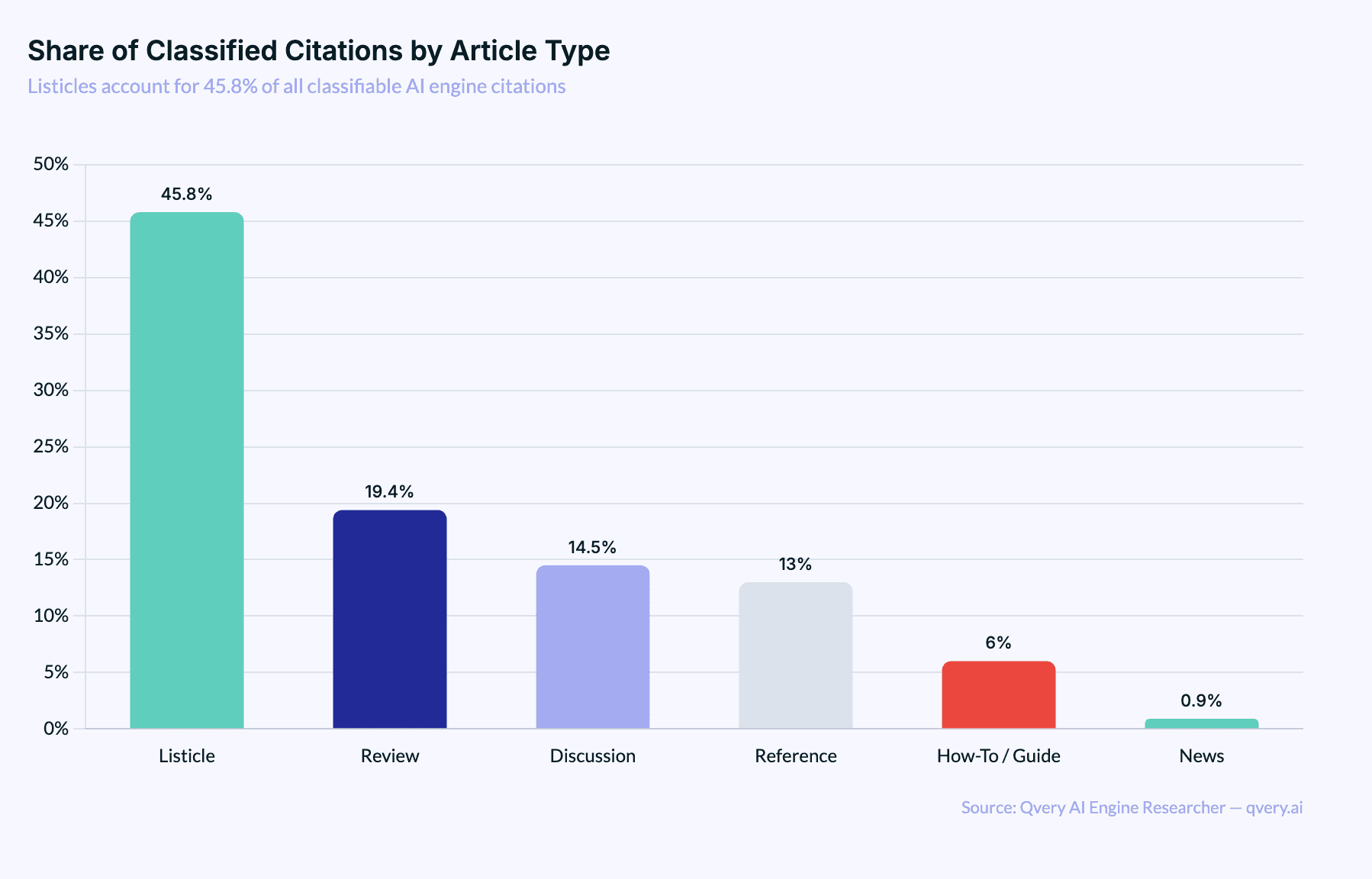

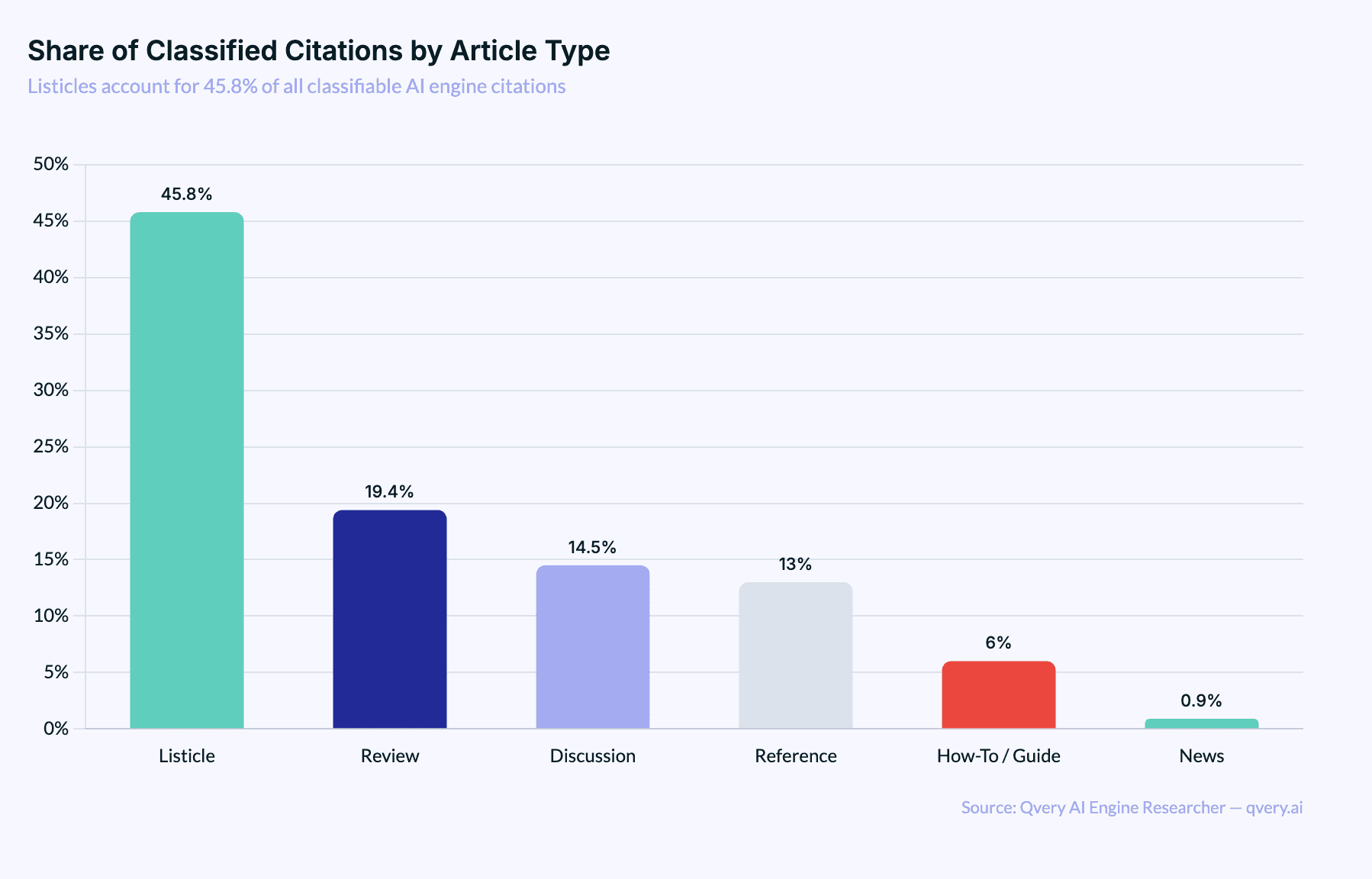

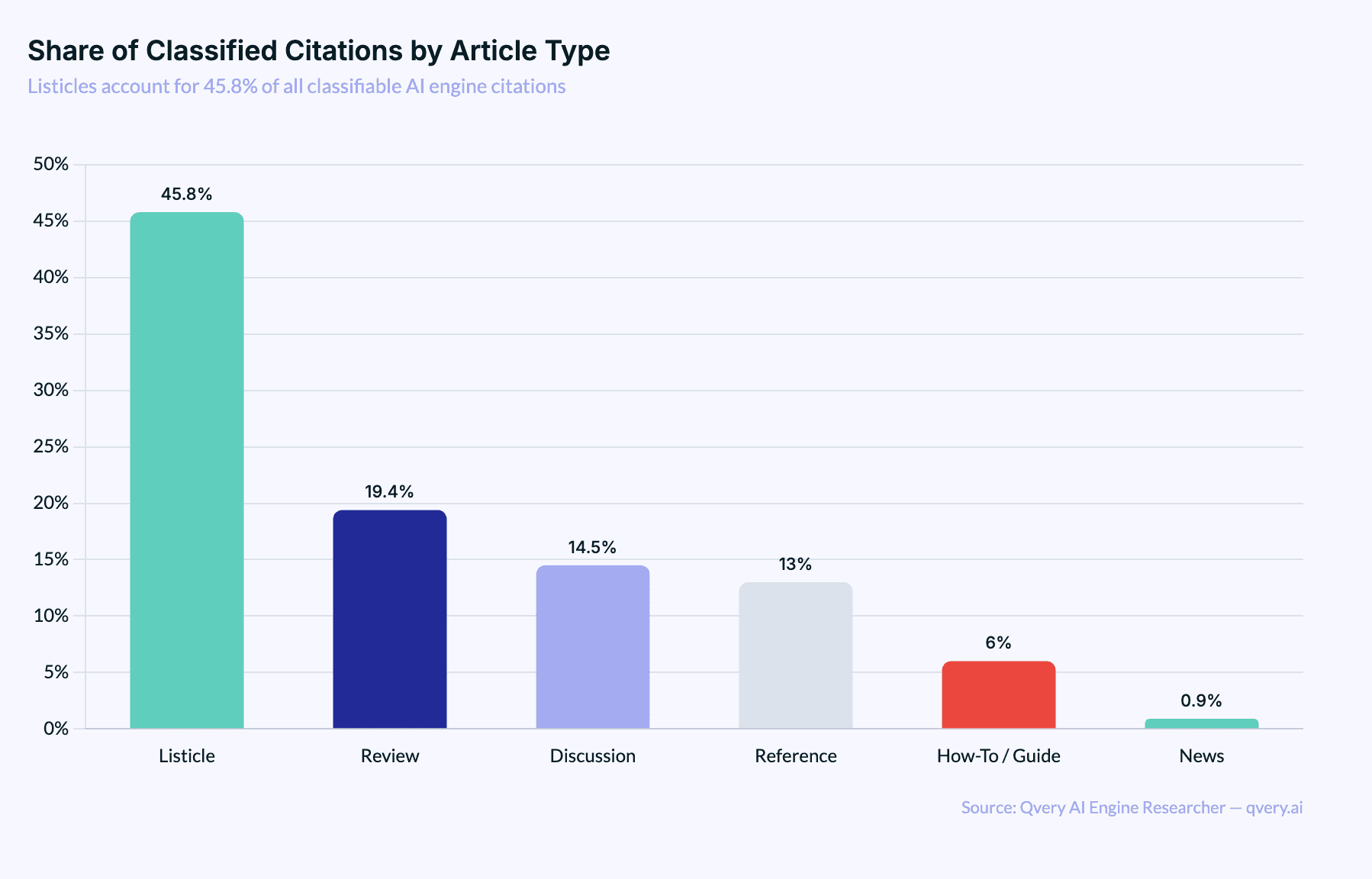

We analyzed our proprietary citation data at Qvery across both ChatGPT and Google AI Mode, classified every citation by article type based on title patterns, and found something that surprised even us: listicles account for 45.8% of all classifiable AI engine citations. That's not a slight edge. That's nearly half.

The format your content strategy intern was told is "outdated" is the single most referenced content type in AI search. It beats reviews by 2.4x. It beats how-to guides by 7.6x. It beats news articles by 50x. (Awkward.)

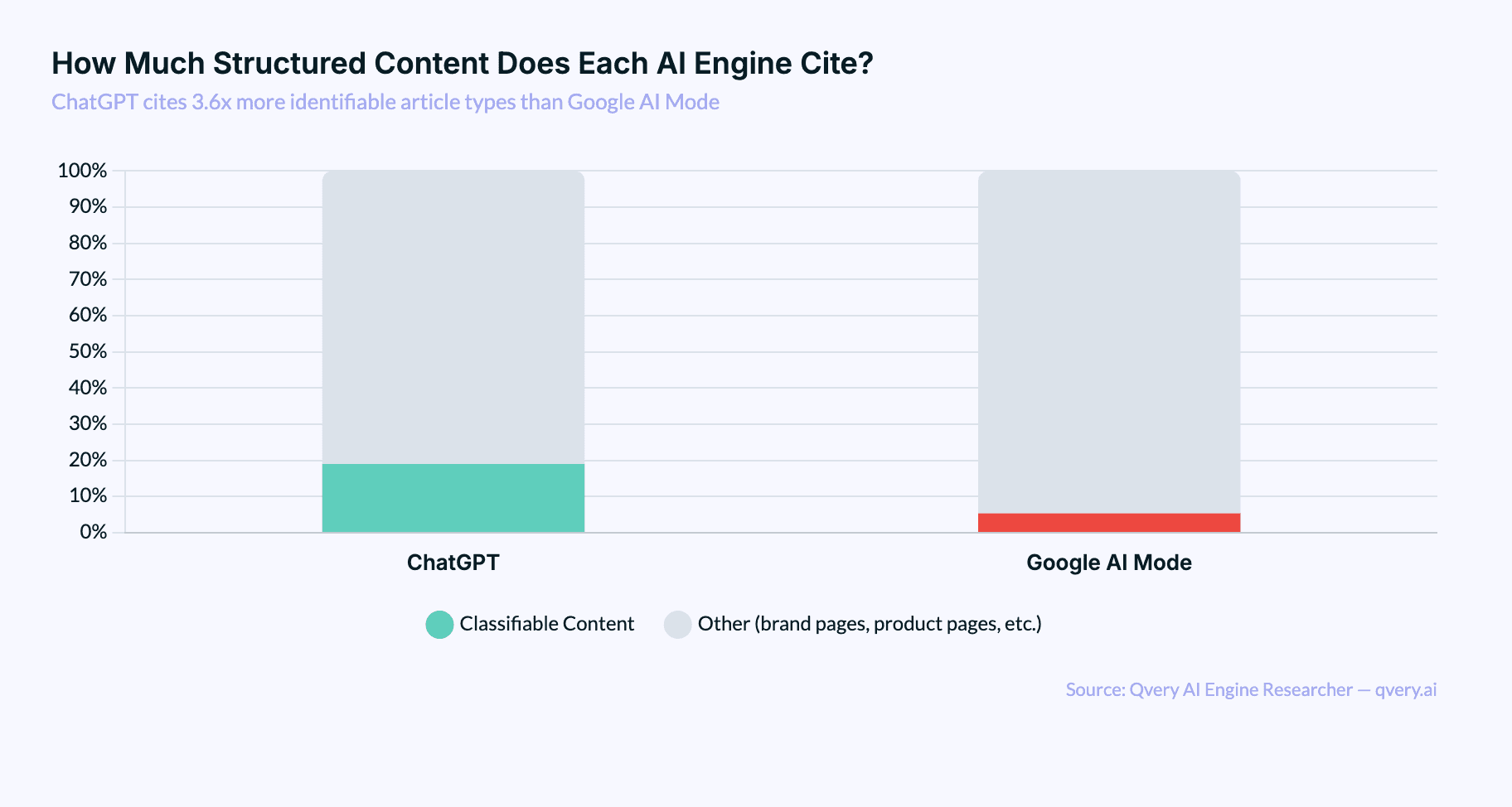

Before we go further: our classification system uses title pattern matching to identify article types. Roughly 12% of all citations match known patterns (Listicle, Review, Reference, Discussion, How-To/Guide, News). The other 88% are brand pages, product pages, and niche content that don't follow recognizable title patterns. Everything in this post refers to that classifiable 12%, which represents hundreds of thousands of individual citations.
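
To make "title pattern matching" concrete, here is a minimal sketch of how a classifier like this could work. The regular expressions below are illustrative assumptions based on the title examples mentioned in this post ("Top 10," "X Alternatives," "vs," "what is"), not Qvery's actual rules. Discussion and News are left out because they are likely identified by other signals, such as the source domain.

```python
import re

# Hypothetical title patterns, checked in order. These are assumptions for
# illustration only; the real classification rules are not published here.
PATTERNS = [
    ("Listicle", re.compile(r"\b(top|best)\s+\d+\b|\b\d+\s+best\b|\balternatives\b", re.I)),
    ("Review", re.compile(r"\breview\b|\bvs\.?\b|\bpricing\b|\bpros and cons\b", re.I)),
    ("How-To/Guide", re.compile(r"\bhow to\b|\bguide\b|\btutorial\b|\bstep[- ]by[- ]step\b", re.I)),
    ("Reference", re.compile(r"^what is\b|\bdefinition\b", re.I)),
]


def classify_title(title: str) -> str:
    """Return the first matching article type, or 'Unclassified'."""
    for label, pattern in PATTERNS:
        if pattern.search(title):
            return label
    return "Unclassified"


def classifiable_share(titles: list[str]) -> float:
    """Percentage of titles that match any known pattern."""
    if not titles:
        return 0.0
    matched = sum(1 for t in titles if classify_title(t) != "Unclassified")
    return 100 * matched / len(titles)


sample = [
    "Top 10 CRM Tools for Startups",
    "Asana vs Trello: Pricing Compared",
    "How to Set Up Google Analytics",
    "Acme | Project Management Software",
]
for t in sample:
    print(f"{classify_title(t):<14} {t}")
```

A brand or product page such as the last sample title falls through every pattern, which is how most citations end up in the unclassified 88%.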

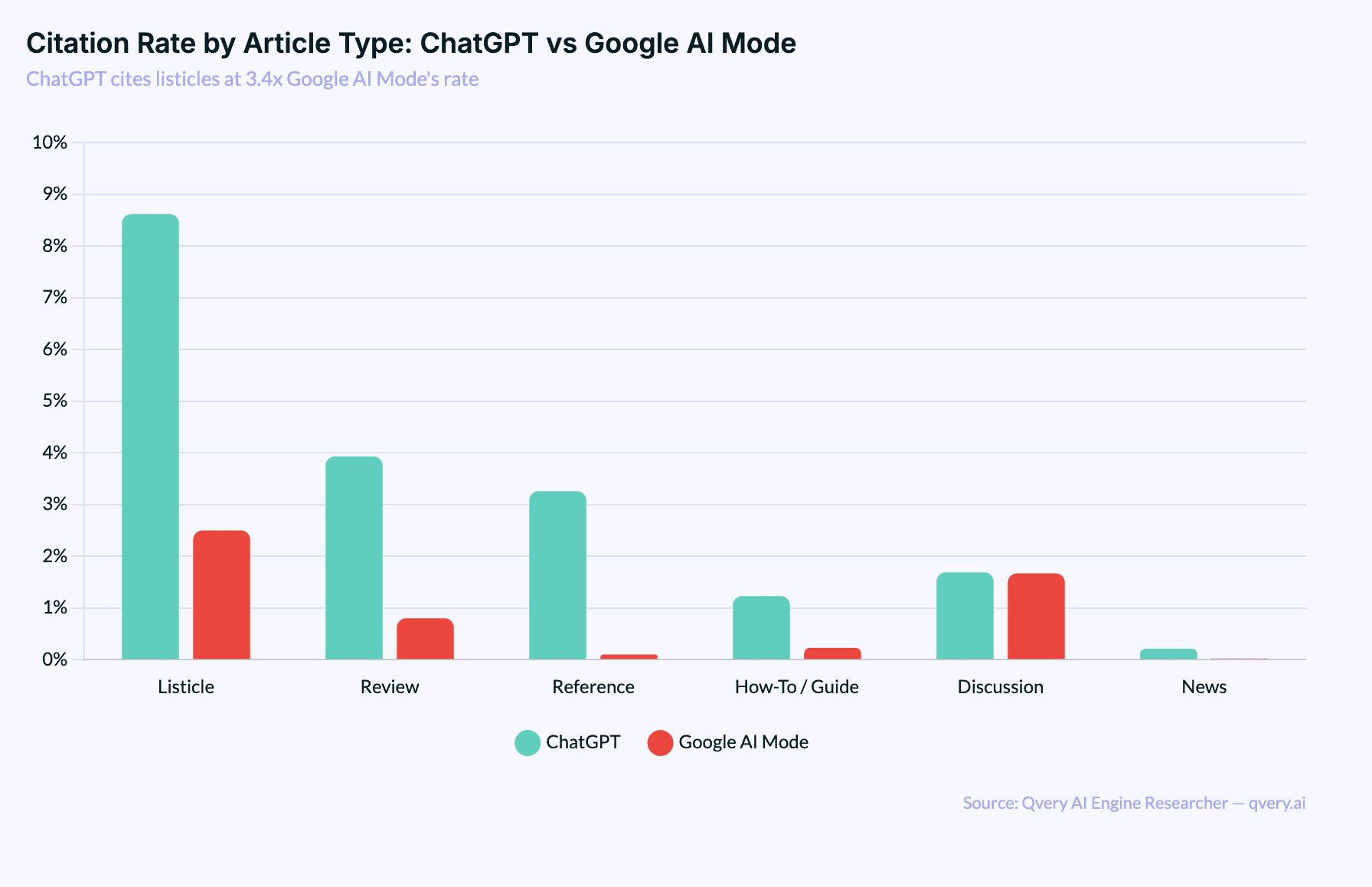

ChatGPT Is a Listicle Recommendation Engine

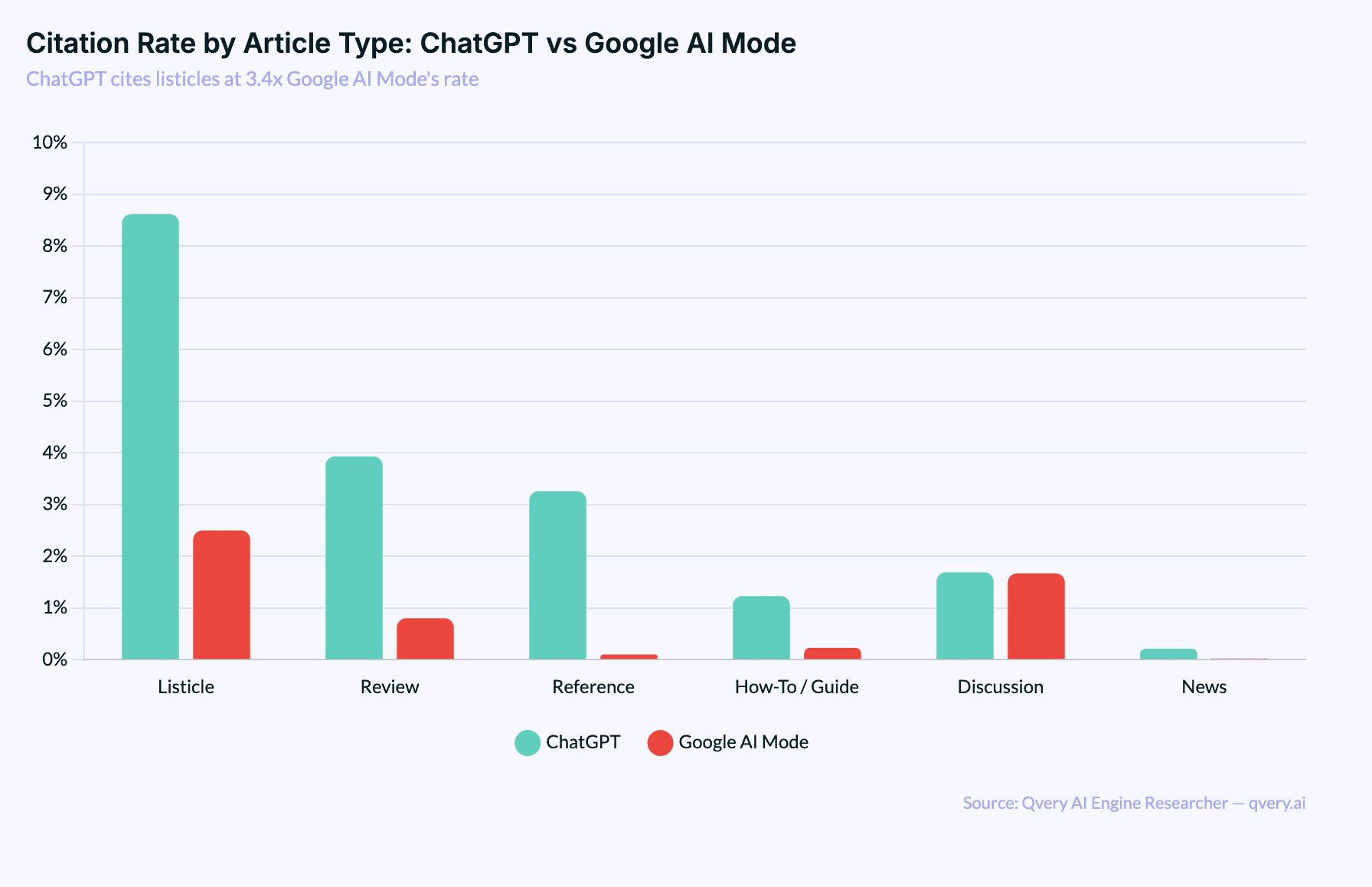

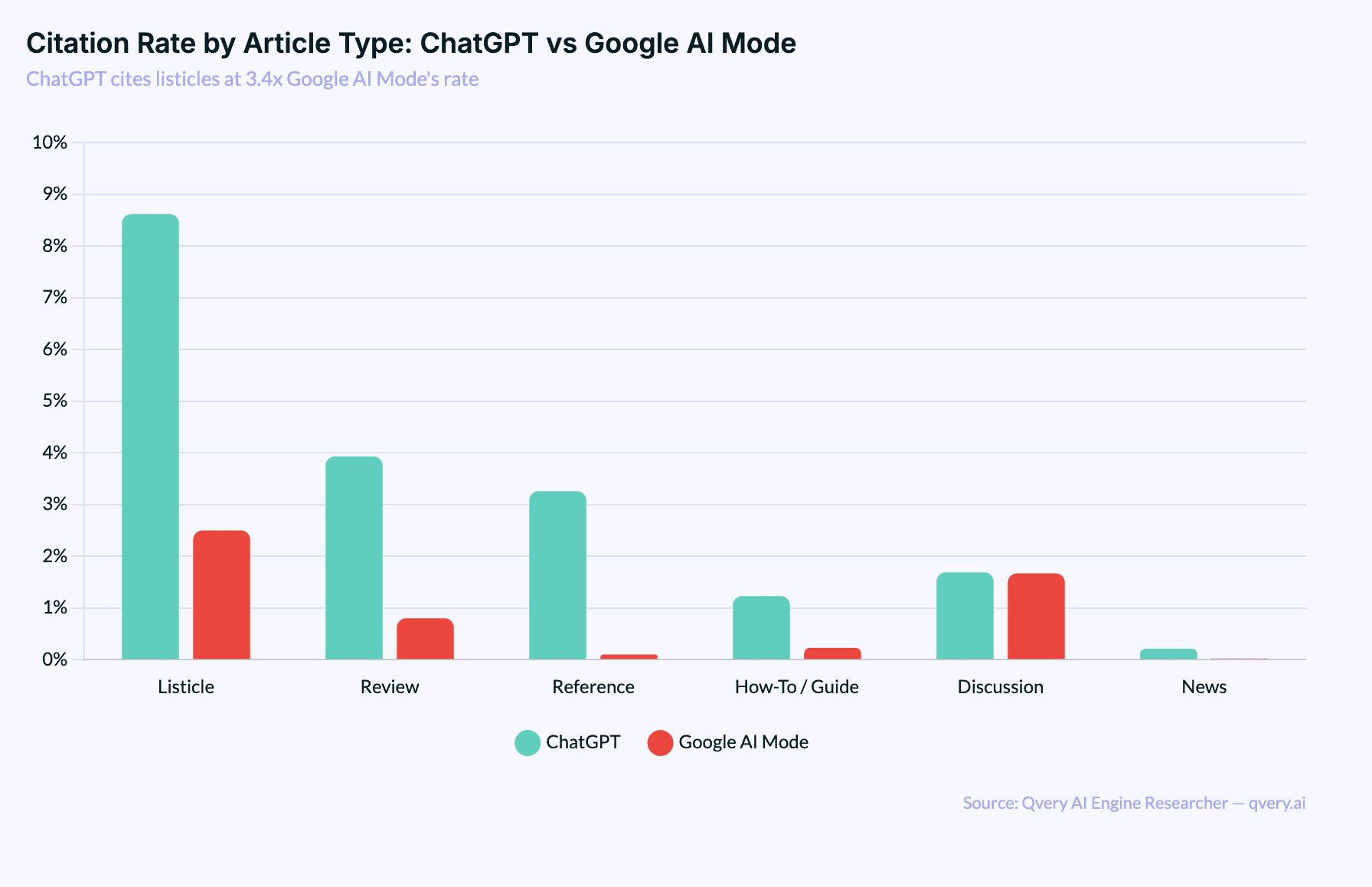

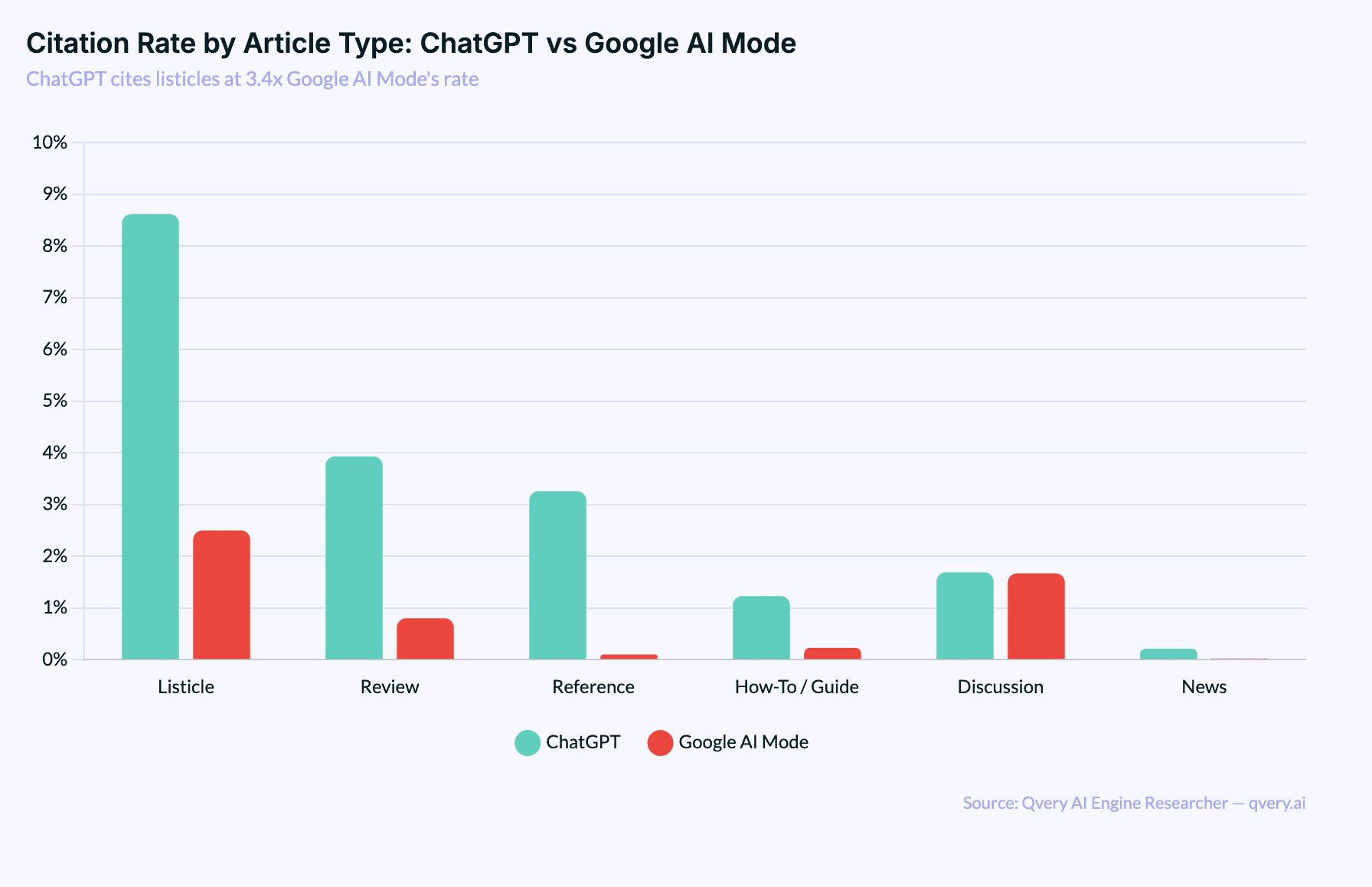

The platform split on this is wild. ChatGPT cites listicles at 8.62% of all its citations. Google AI Mode? Just 2.50%. That's a 3.4x difference.

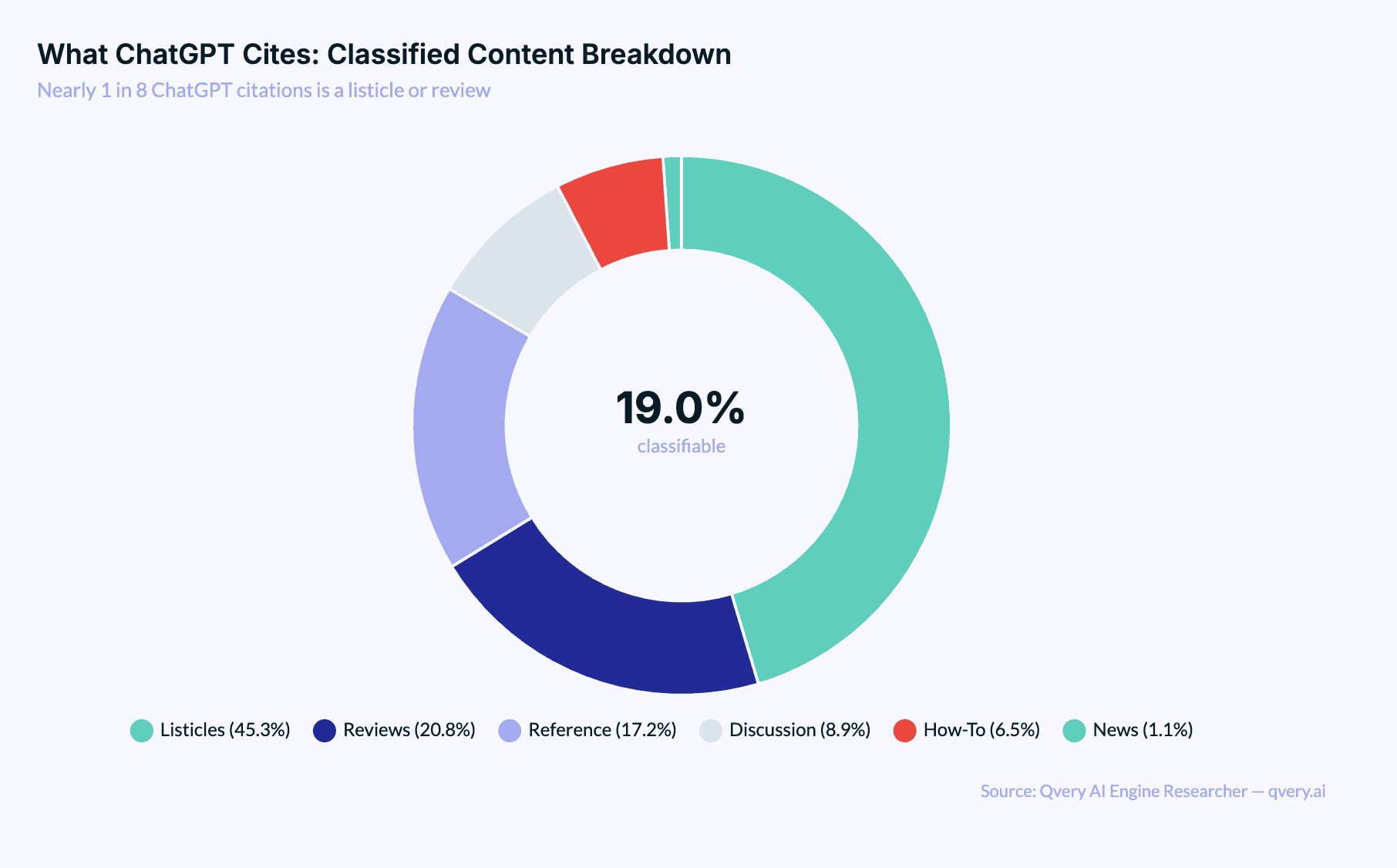

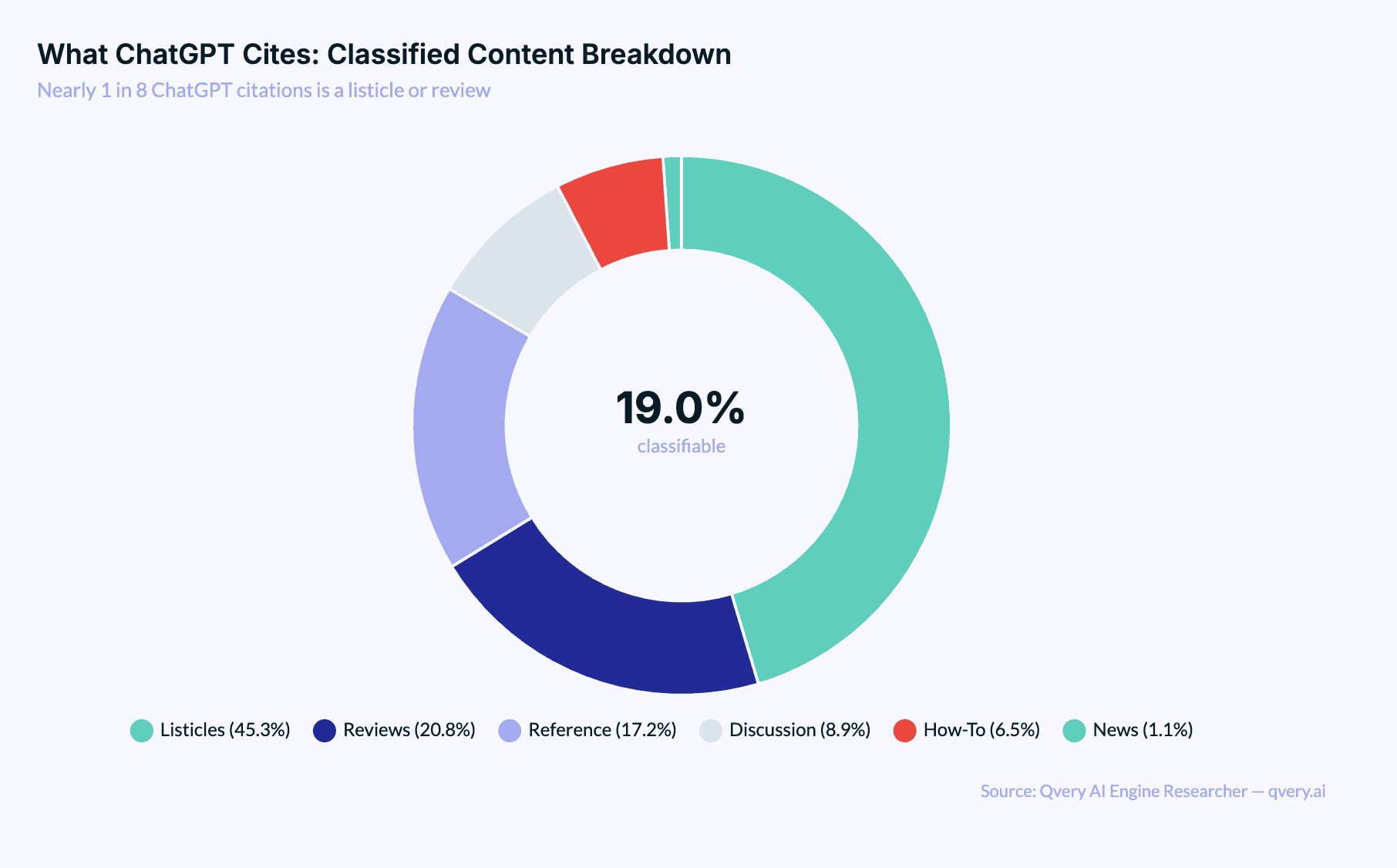

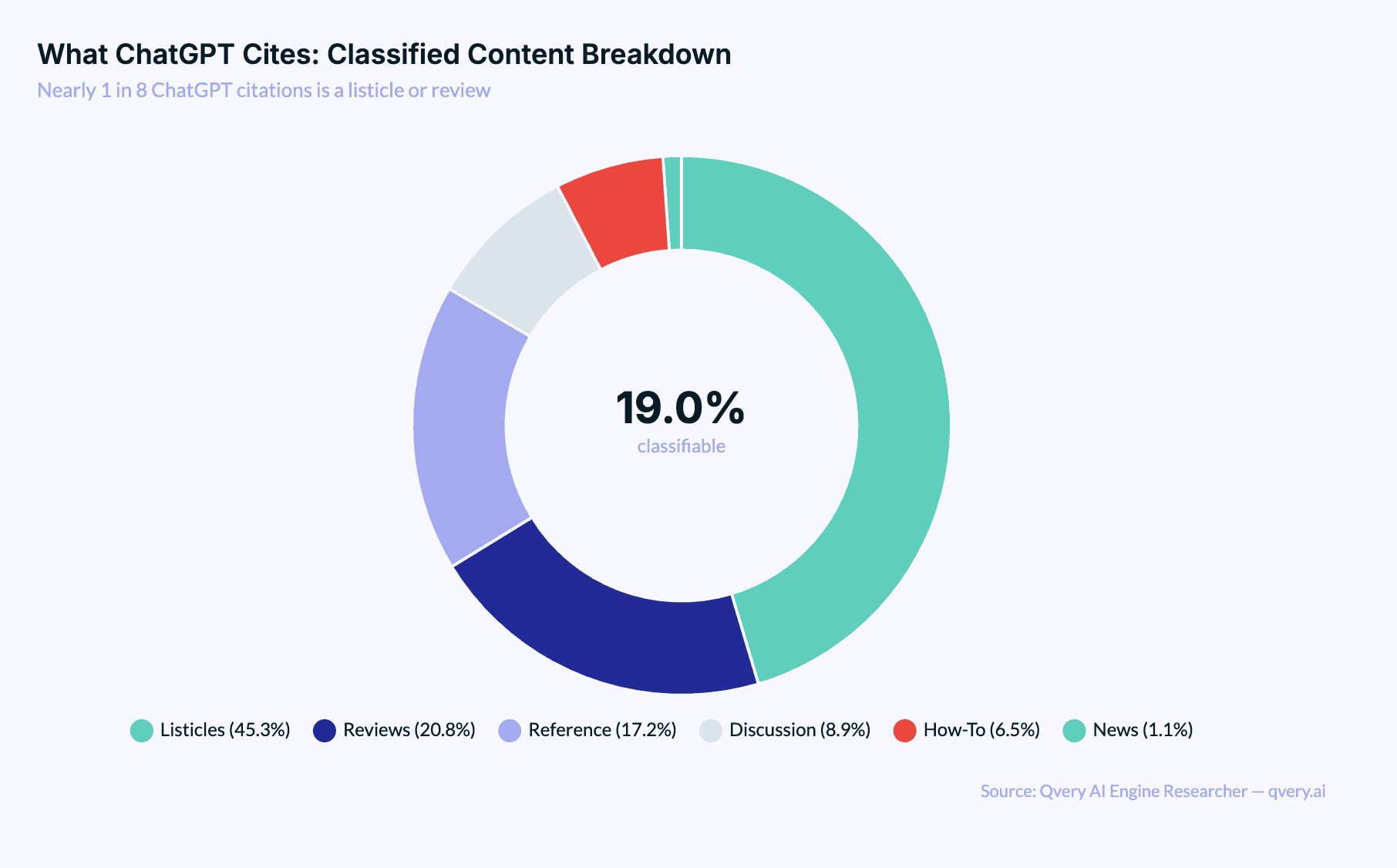

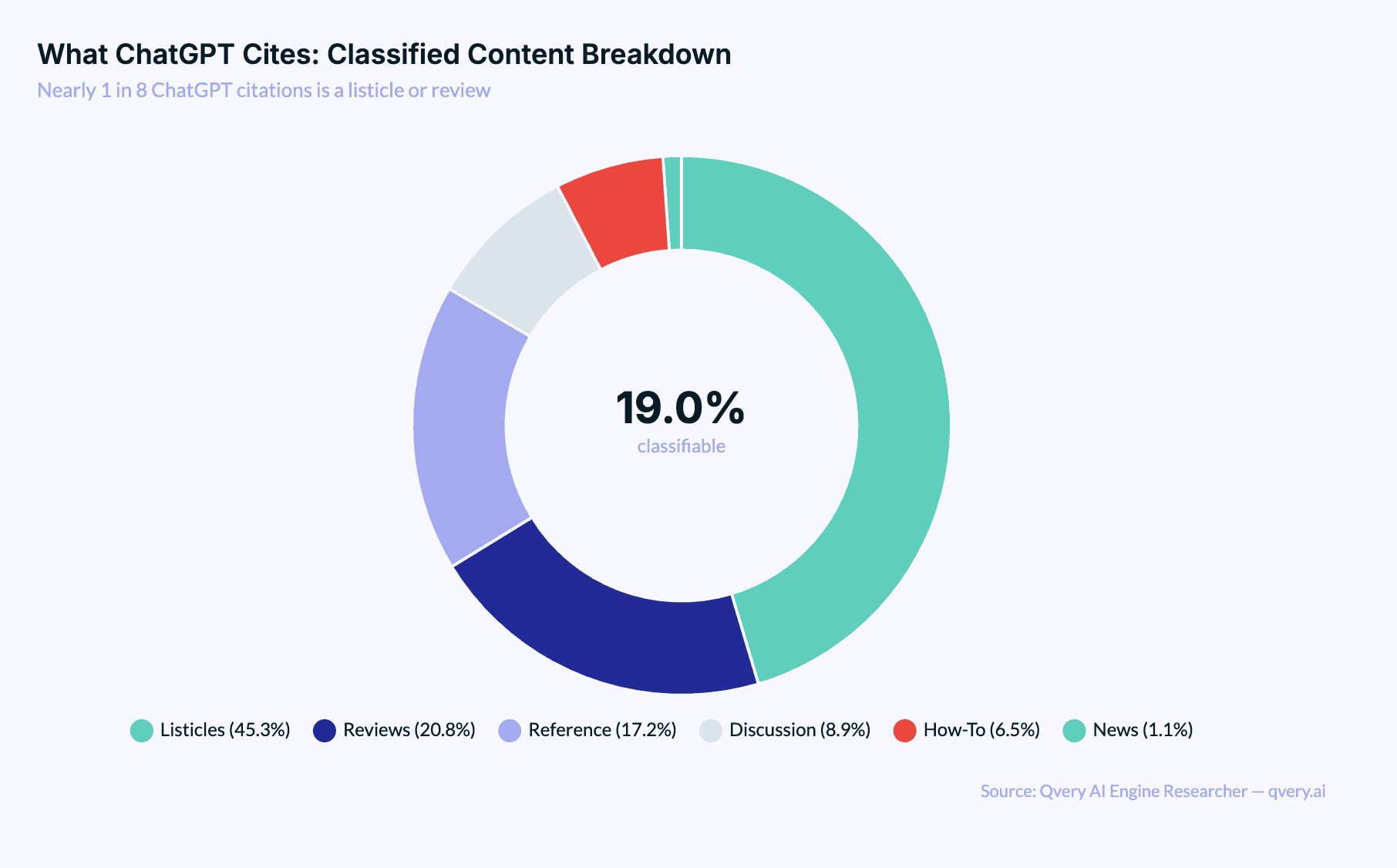

It gets more extreme. ChatGPT produces 74.8% of all listicle citations despite generating only about 43% of total citation volume. Nearly 1 in 11 ChatGPT citations is a listicle. Add reviews to the mix and you get 12.49% of all ChatGPT citations being listicles or reviews. That's 1 in 8.

Stack on reference content and how-to guides and you reach 17.04% of all ChatGPT citations being structured, classifiable editorial content. ChatGPT isn't just a search engine. It's fundamentally a recommendation engine that loves curated, ranked content.
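
If you want to sanity-check the arithmetic in this section, the short sketch below reproduces it from the percentages quoted above. The variable and function names are ours for illustration, and the "over-index" figure is a derived number that is not stated in the post: it compares ChatGPT's share of listicle citations with its share of total citation volume.

```python
# All inputs are the percentages quoted in this section.
chatgpt_listicle_pct = 8.62              # listicles as % of ChatGPT citations
google_listicle_pct = 2.50               # listicles as % of Google AI Mode citations
chatgpt_listicle_or_review_pct = 12.49   # listicles + reviews on ChatGPT
chatgpt_share_of_listicles = 74.8        # % of all listicle citations from ChatGPT
chatgpt_share_of_volume = 43.0           # approx. % of total citation volume


def one_in_n(pct: float) -> float:
    """Convert a percentage into a '1 in N' figure."""
    return 100 / pct


platform_gap = chatgpt_listicle_pct / google_listicle_pct
over_index = chatgpt_share_of_listicles / chatgpt_share_of_volume

print(f"Platform gap: {platform_gap:.1f}x")
print(f"Listicles: 1 in {one_in_n(chatgpt_listicle_pct):.1f} ChatGPT citations")
print(f"Listicles or reviews: 1 in {one_in_n(chatgpt_listicle_or_review_pct):.1f}")
print(f"ChatGPT over-index on listicles: {over_index:.2f}x")
```

The over-index value (about 1.74x) is another way to read the same finding: ChatGPT contributes far more listicle citations than its share of total volume alone would predict.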

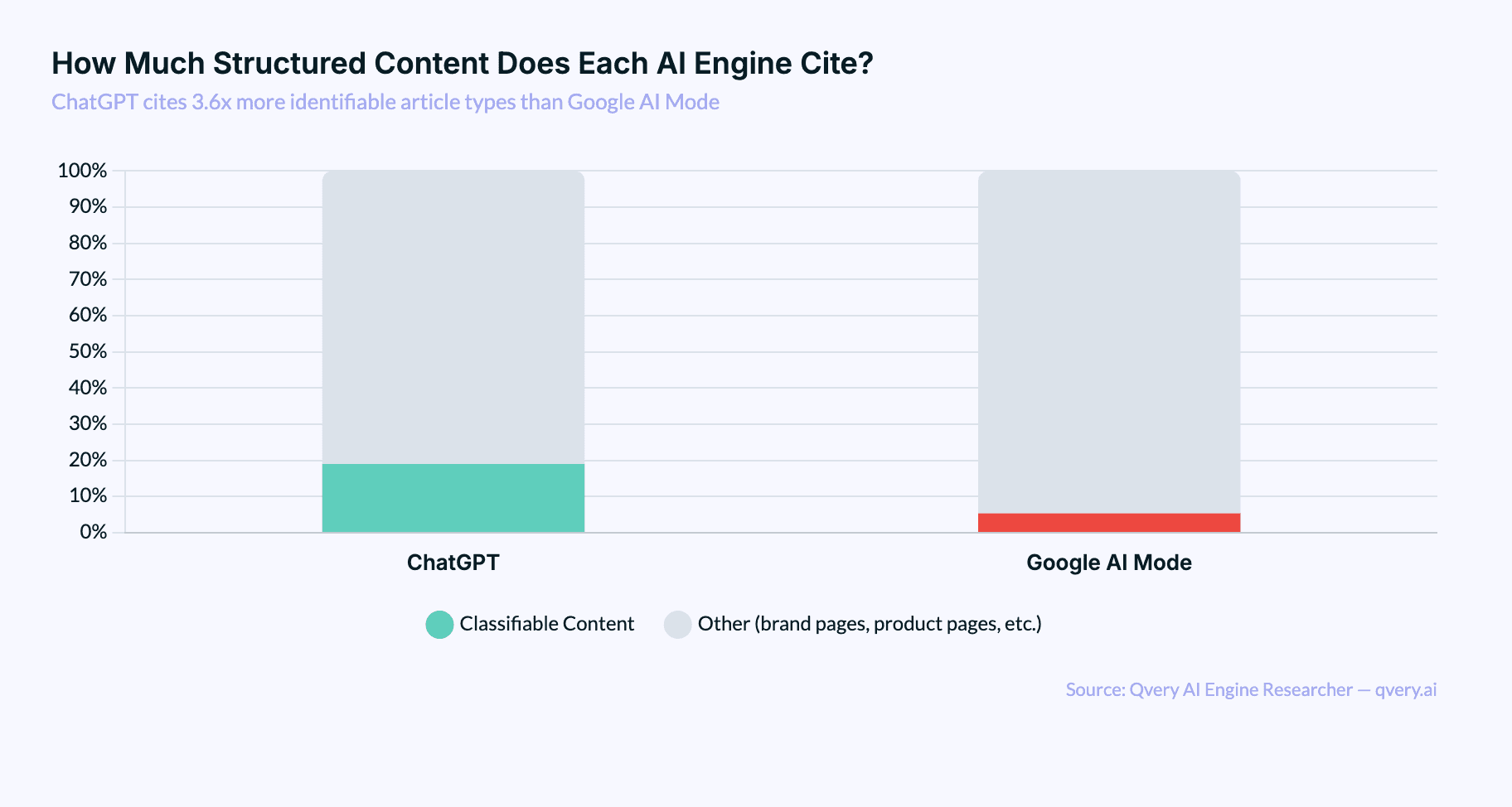

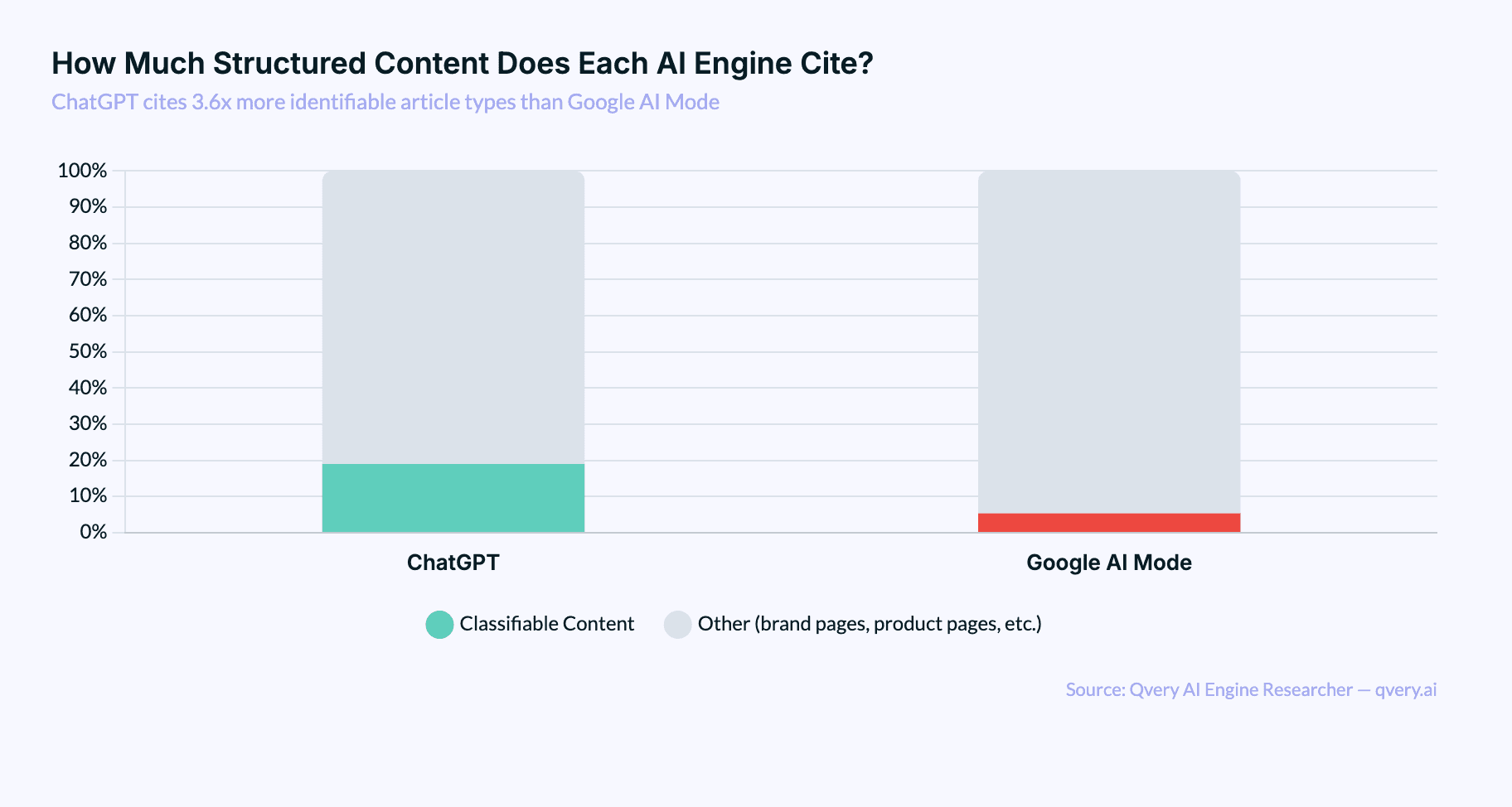

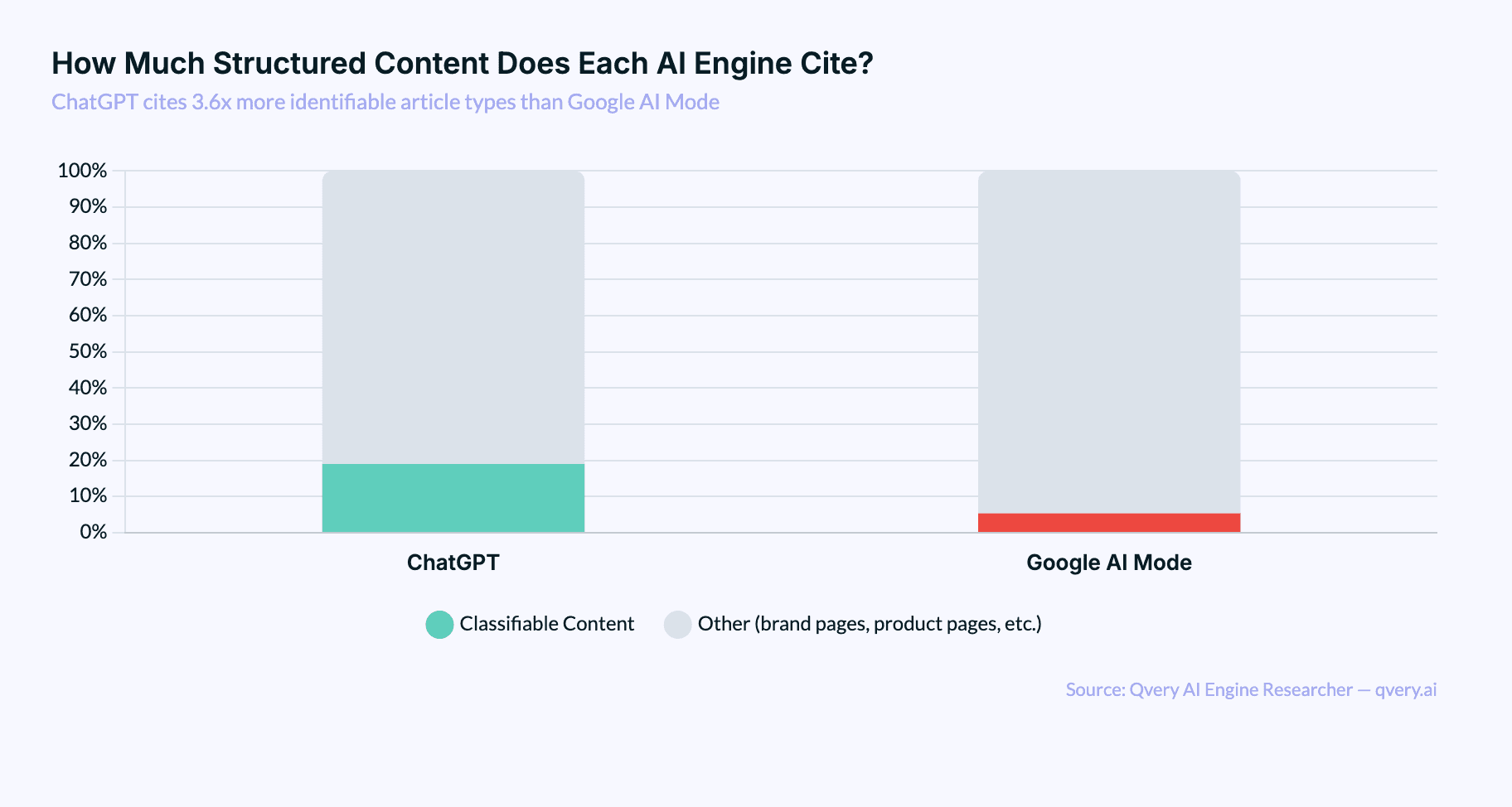

Google AI Mode takes the opposite approach. It prefers to synthesize ranked lists itself rather than cite external ones. Only 5.3% of Google AI citations match any classifiable article type, compared to ChatGPT's 19.0%. Google AI Mode has its own opinions, apparently, and doesn't need yours.

How Every Content Format Stacks Up

Here's the full breakdown of classifiable content types, ranked by share:

Listicles: 45.8% of classified content, 5.33% of all citations. The undisputed champion. "Top 10," "Best 5," "X Alternatives" titles dominate. ChatGPT drives 74.8% of the volume.

Reviews: 19.4% of classified, 2.25% of all. Comparisons, pricing breakdowns, "vs" articles, pros-and-cons content. ChatGPT drives 80.9%. Listicles are 2.4x more cited than reviews.

Discussion: 14.5% of classified, 1.68% of all. Reddit, Quora, Stack Overflow. The only type that's roughly balanced between platforms: ChatGPT at 46.6%, Google AI at 53.4%.

Reference: ~13% of classified, ~1.5% of all. Wikipedia, "what is" articles, definitions. Almost exclusively a ChatGPT behavior: 96% of reference citations come from ChatGPT. Google AI barely touches encyclopedic content, most likely because it can synthesize definitions from its own knowledge graph.

How-To / Guide: 6.0% of classified, 0.69% of all. Tutorials, step-by-step content. ChatGPT cites these at 5.3x Google AI's rate. Instructional content is valued, just not as much as listicles.

News: 0.9% of classified, 0.11% of all. The rarest type. AI engines overwhelmingly prefer evergreen content. Breaking news is basically invisible.

The pattern is clear: both ChatGPT and Google AI Mode agree that listicles are their #1 classifiable type (45.3% on ChatGPT, 47.0% on Google AI). The difference is that ChatGPT actually cites structured content 3.6x more often.
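
The multiples quoted throughout this post (2.4x reviews, 7.6x how-to guides, roughly 50x news) fall straight out of the breakdown above. Here is a small sketch that recomputes them. The dictionary simply restates the shares listed in this section, with Reference entered as 13.0 even though the post gives it only as an approximation.

```python
# Share of classified citations per content type, as listed above.
# Reference is approximate ("~13%") in the source data.
classified_share = {
    "Listicle": 45.8,
    "Review": 19.4,
    "Discussion": 14.5,
    "Reference": 13.0,
    "How-To/Guide": 6.0,
    "News": 0.9,
}

listicle = classified_share["Listicle"]

# How many times more often listicles are cited than each other type.
ratios = {
    name: round(listicle / share, 1)
    for name, share in classified_share.items()
    if name != "Listicle"
}

# Listicles are 5.33% of all citations and 45.8% of classified ones,
# so the implied classifiable share of all citations is their ratio.
implied_classifiable_pct = round(5.33 / listicle * 100, 1)

for name, ratio in ratios.items():
    print(f"Listicles vs {name}: {ratio}x")
print(f"Shares sum to {round(sum(classified_share.values()), 1)}%")
print(f"Implied classifiable share: {implied_classifiable_pct}%")
```

The shares sum to just under 100% because of rounding and the approximate Reference figure, and the implied classifiable share lands close to the "roughly 12%" stated at the top of the post.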

Listicles Win on Volume, Not Position

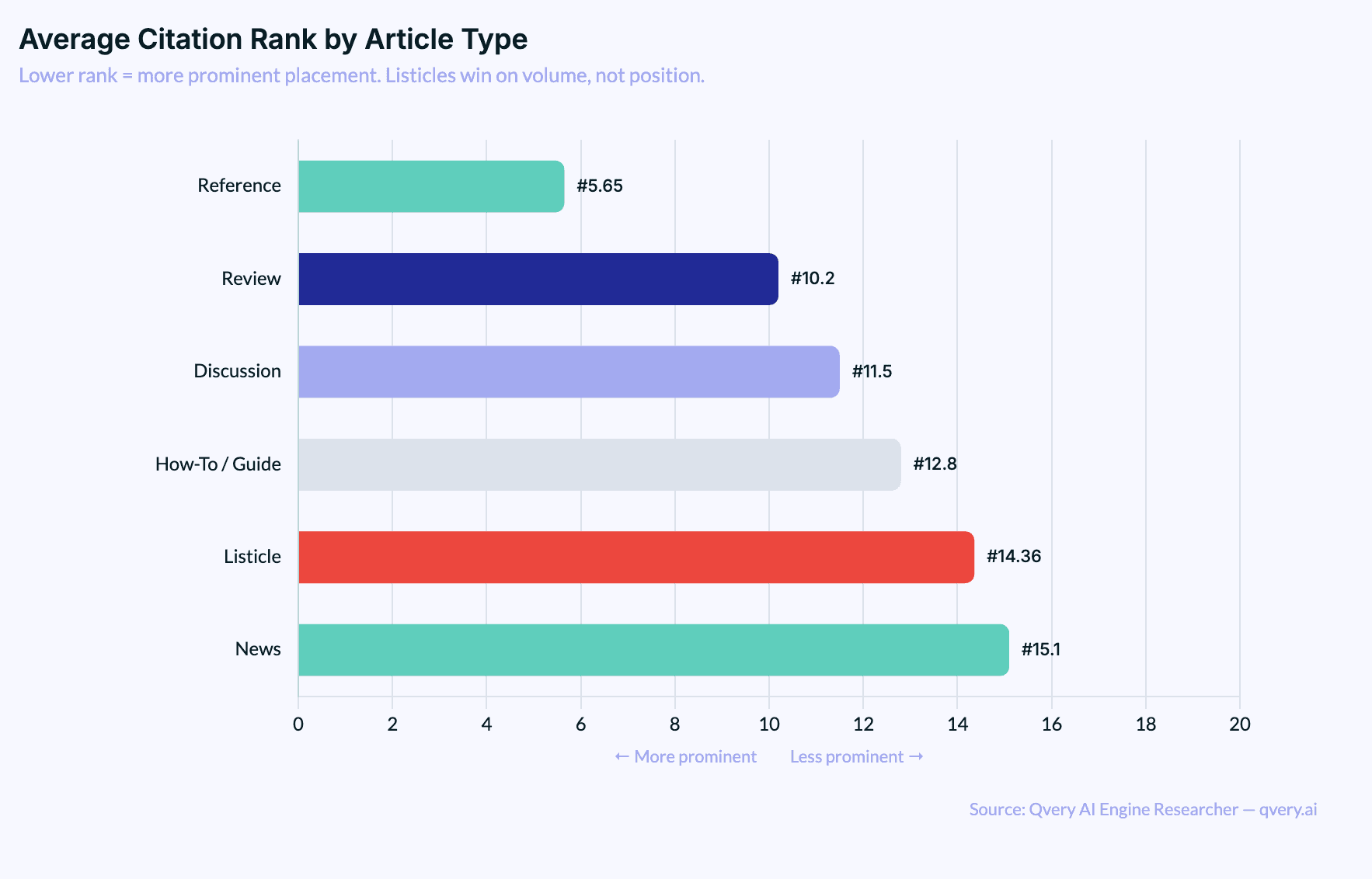

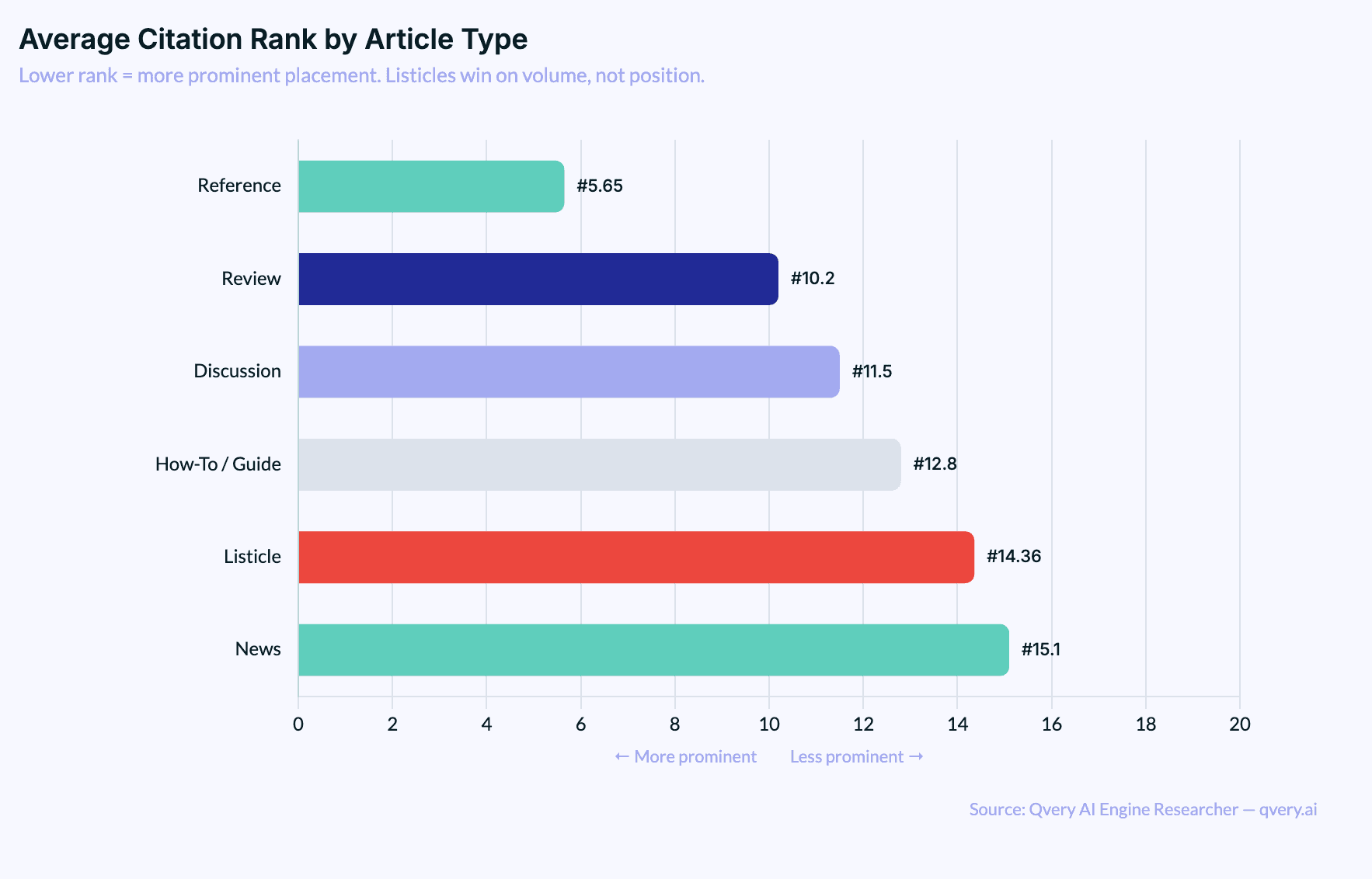

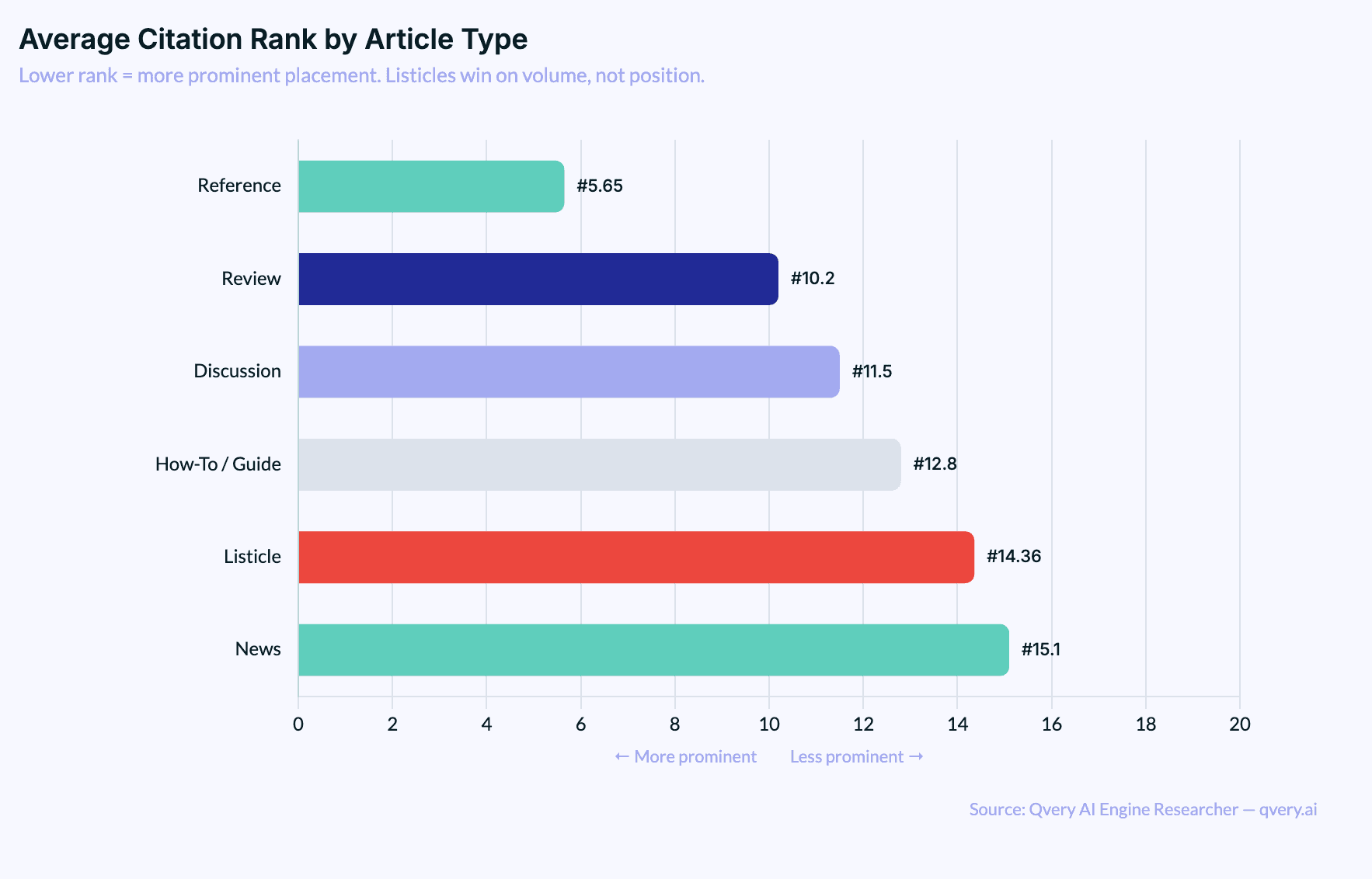

Here's where the nuance lives. Listicles are the most cited content type. They are not the most prominently cited.

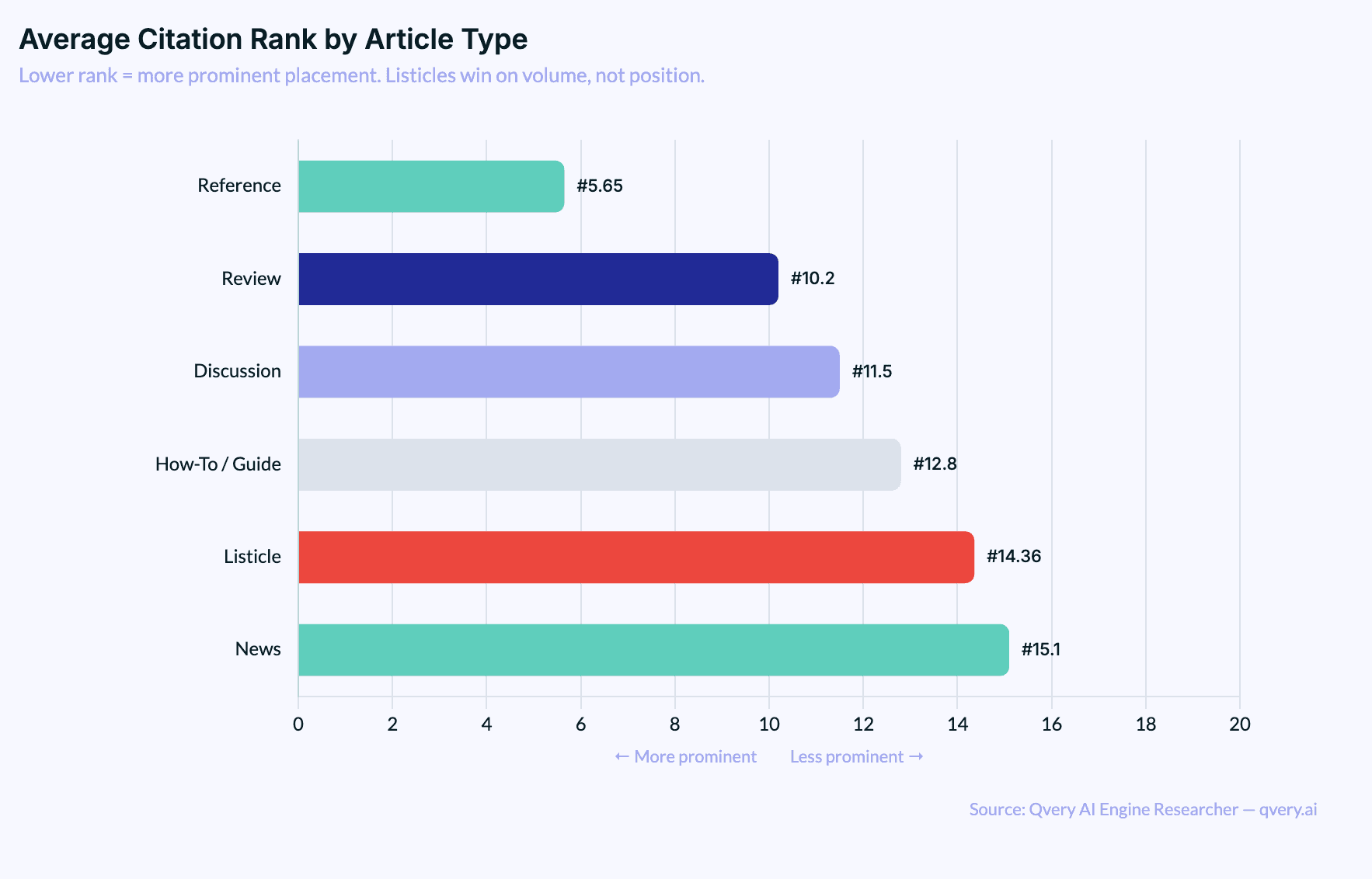

The average citation rank for listicles is 14.36 (median 13.0). That puts them in positions 8-18 of citation lists, which is solidly mid-pack. Compare that to reference content, which has an average rank of 5.65, meaning Wikipedia and definition pages consistently land near the top of responses.

This distinction matters. Listicles are a volume play. They show up everywhere, across a wide range of queries, reinforcing your brand's presence across the topics that matter to your category. Reference content is narrower but carries a different kind of weight: it gets cited when AI engines need to establish facts and definitions.

Think of it like this: reference content is the friend who shows up to three parties a year but everyone knows their name. Listicles are the friend who shows up to every party and talks to everyone in the room. Both build relationships. One goes deep. The other goes wide.

The point isn't that one position in the citation list is better than another. The point is that listicles get you into more conversations. And in AI search, being part of the conversation is the first thing that matters.

The Industry Gap Changes Everything

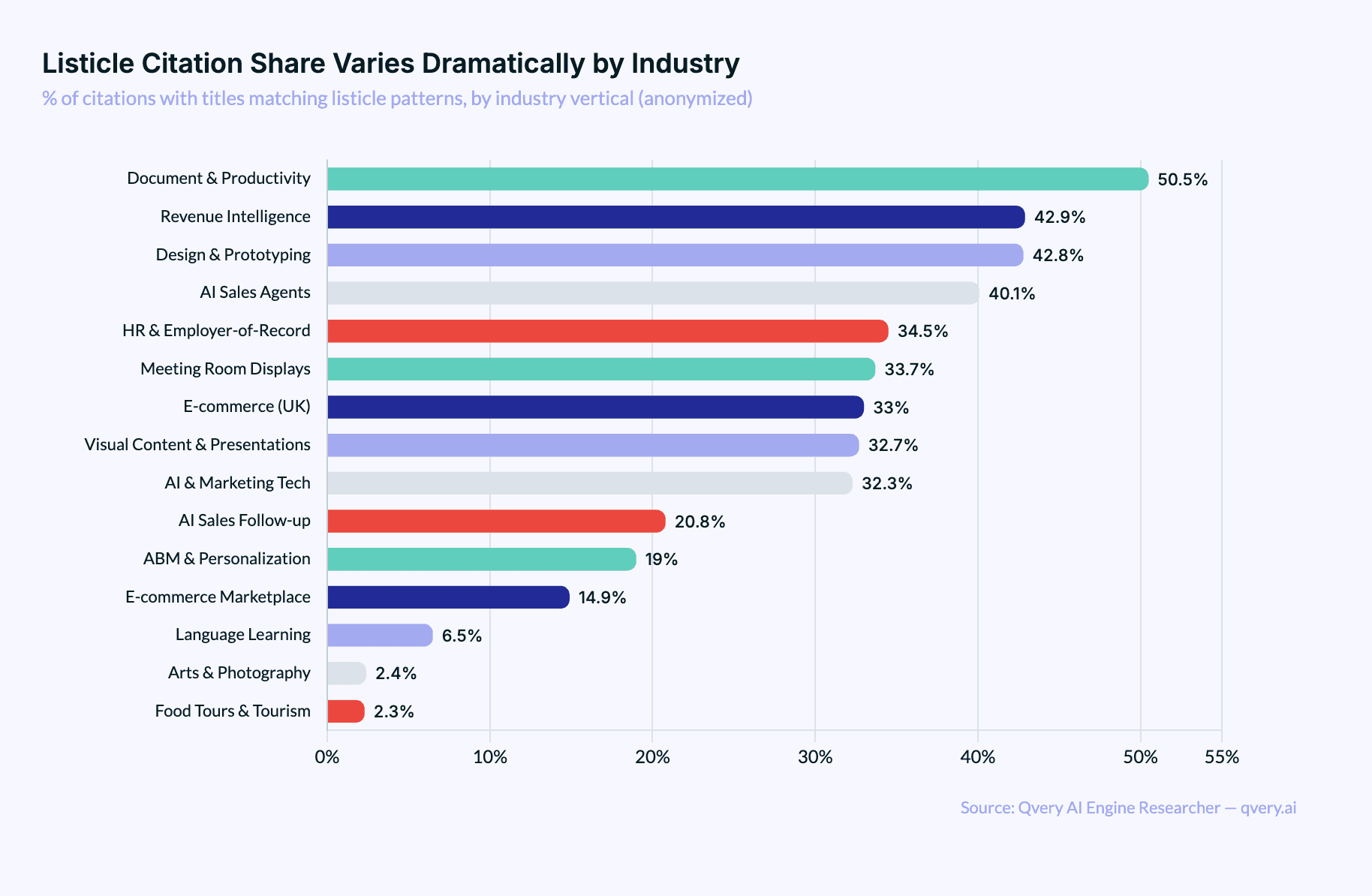

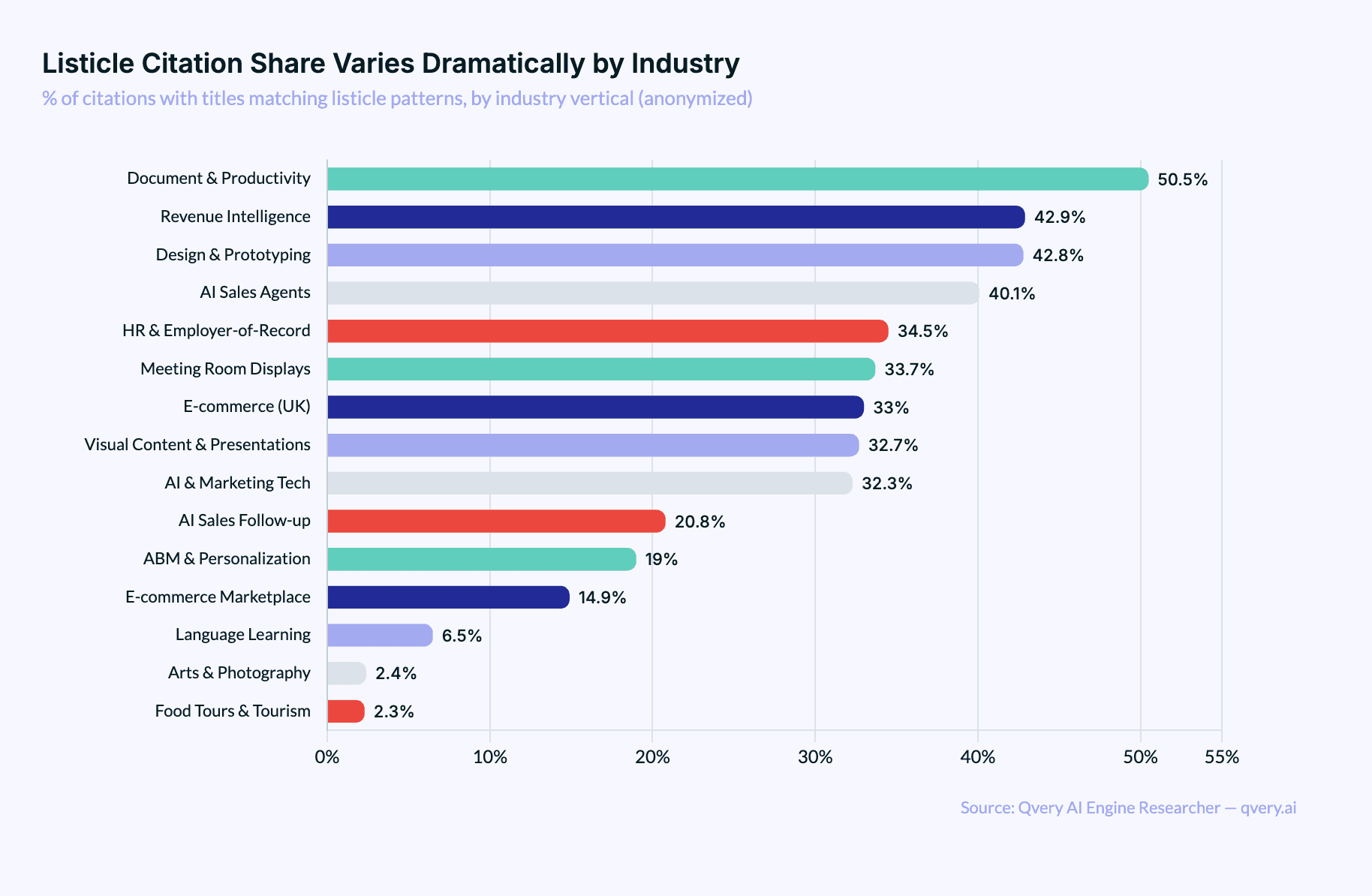

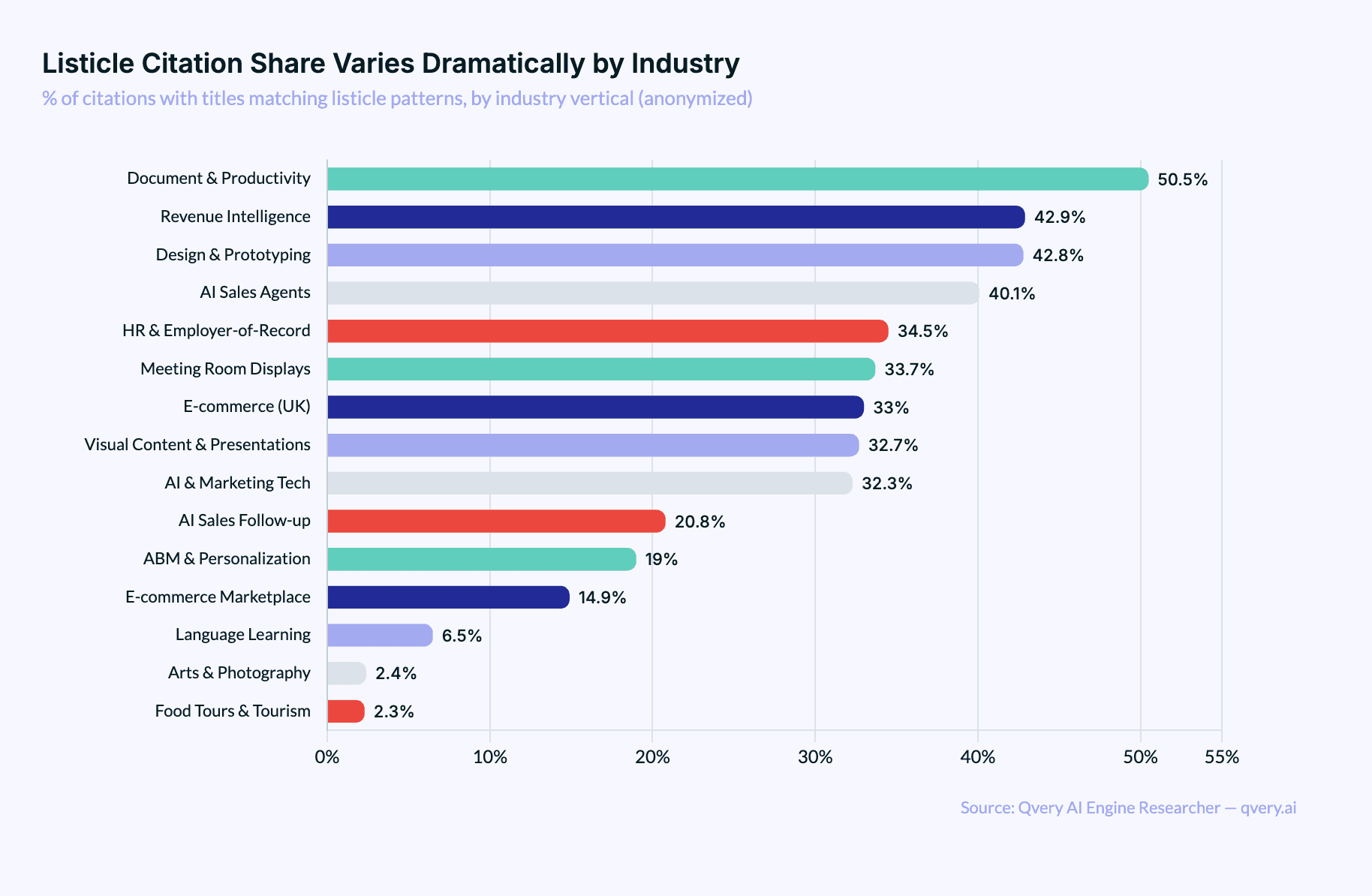

Citation patterns don't behave the same across industries.

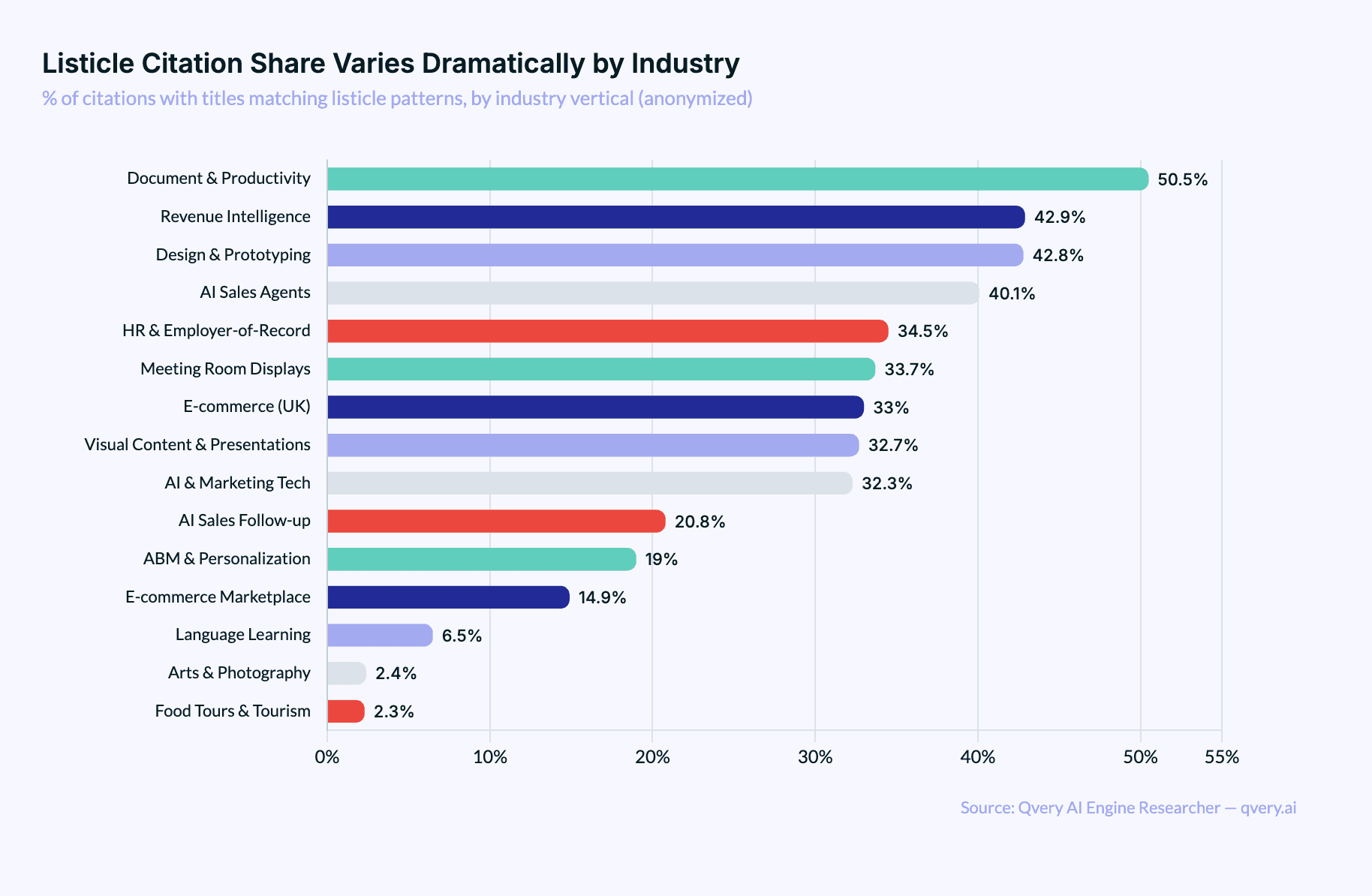

In the document and productivity tools industry, over 50% of all classifiable citations are listicles. In revenue intelligence and in design and prototyping, it's above 42%. AI sales tools, HR/EOR platforms, e-commerce, and visual content tools all sit in the 30-40% range.

Then it falls off a cliff. Language learning drops to 6.5%. Arts and photography and food tours are at 2.3-2.4%. That's a 22x difference between the highest and lowest industries.

The pattern is clear: industries where buyers compare options get more listicles cited.

If your customers ask "what's the best X for Y?" AI engines look for ranked lists to build their answer. SaaS, productivity tools, and e-commerce are comparison-heavy categories. Food tours and arts aren't.

The implication for your content strategy is direct. If you're in a comparison-heavy vertical (SaaS, e-commerce, productivity), listicles on your own blog and on third-party sites are high-leverage because AI engines are actively looking for ranked content in your category. If you're in travel, tourism, or the arts, your citation mix will be dominated by review platforms, local directories, and social media instead. The format that works depends on the questions your buyers ask.

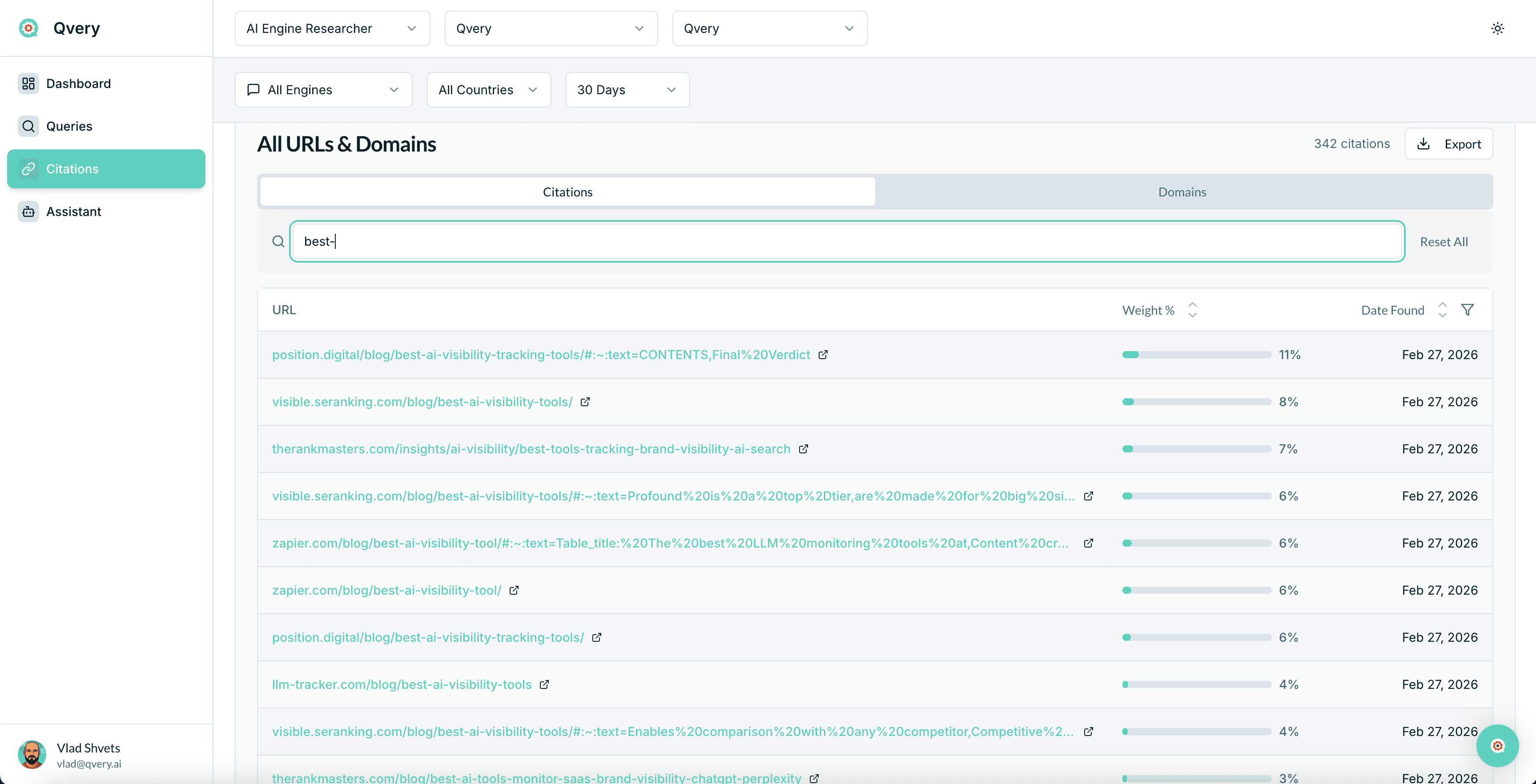

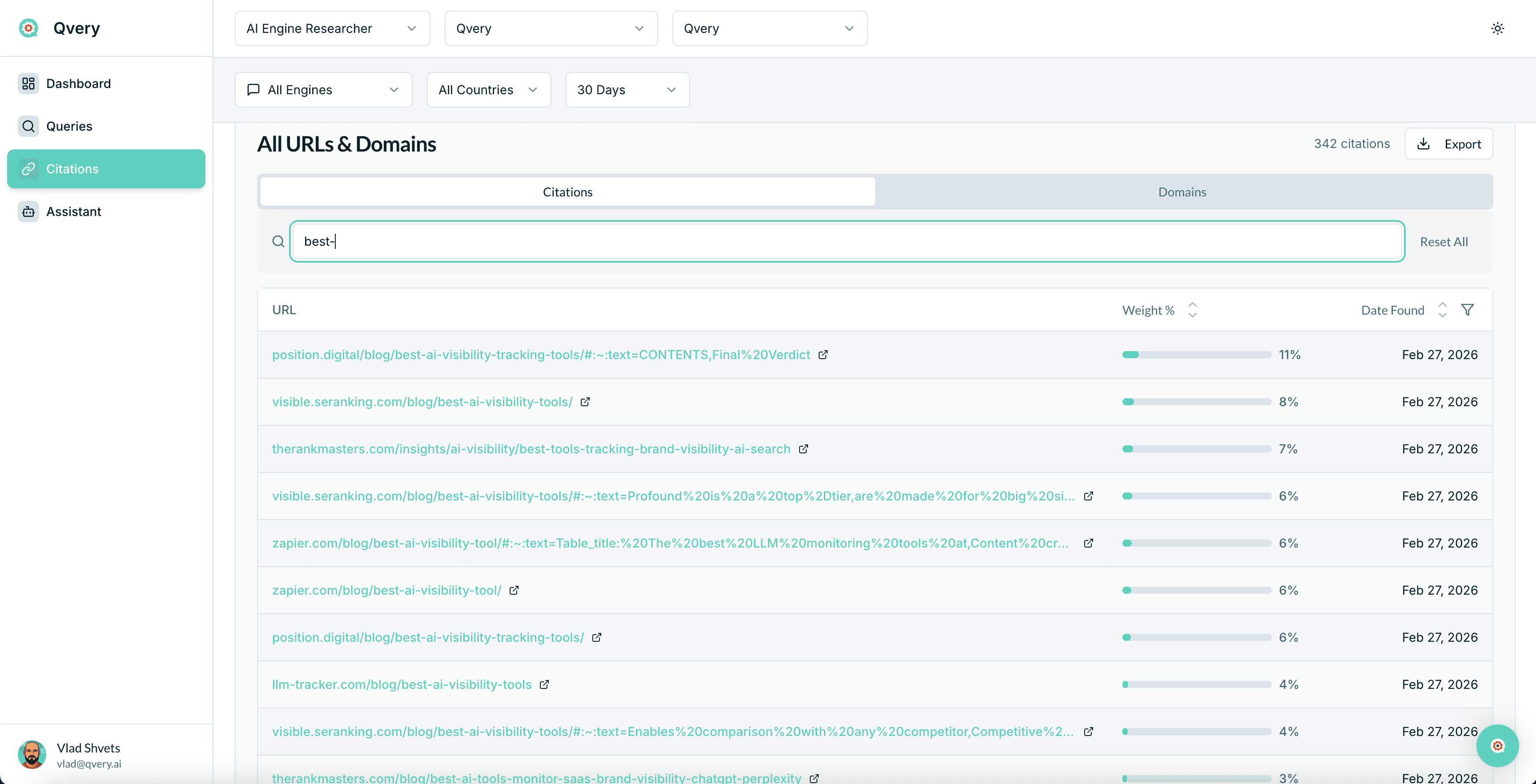

How Qvery Tracks Content Type Citations

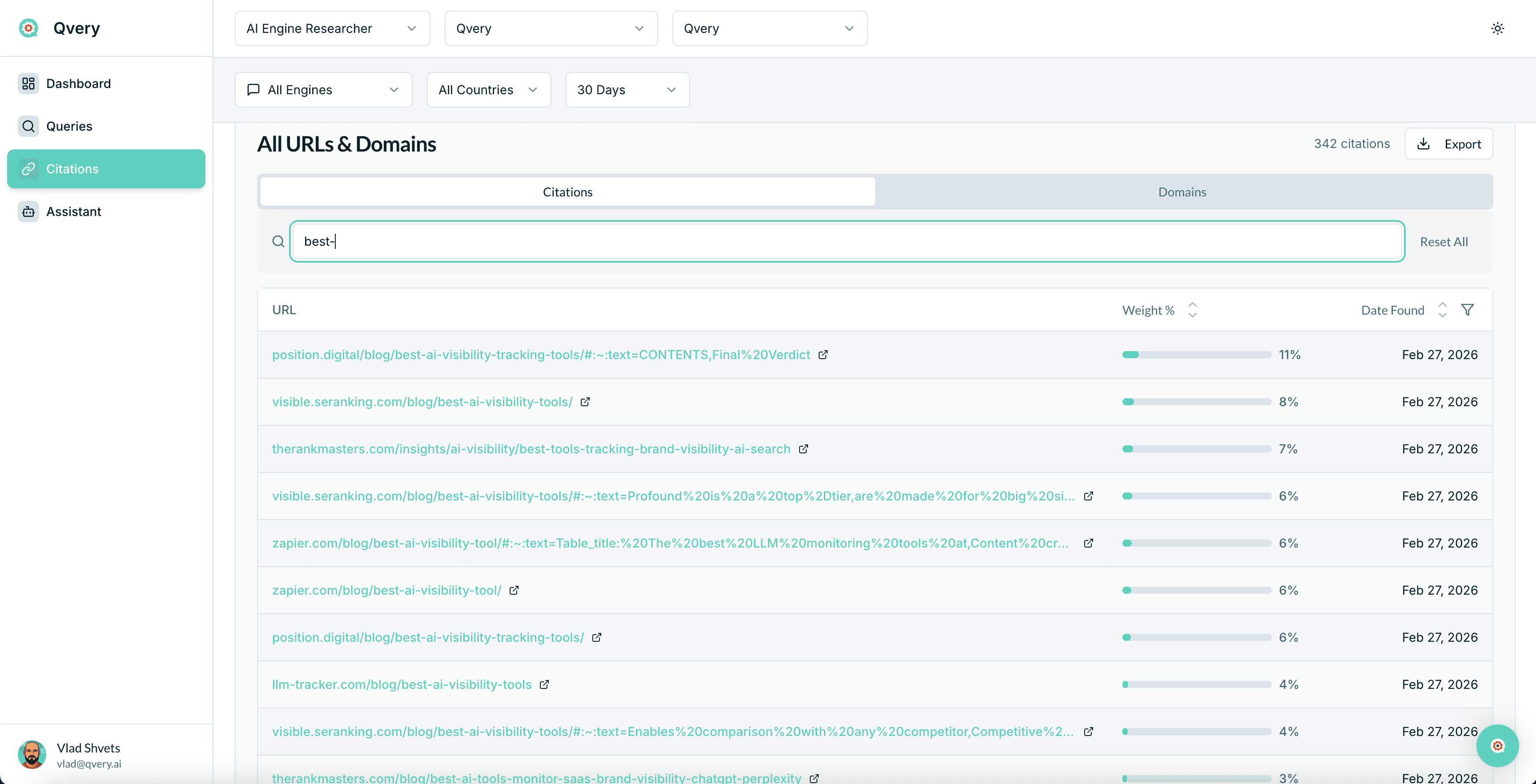

All the data in this post comes from Qvery's AI Engine Researcher.

Qvery can help you see whether your content or your competitors' content is getting cited, and what format it's in. If your competitor's listicle is getting cited and your detailed guide isn't, that's a signal. If discussion content on Reddit is outpacing your blog, that's a different signal.

Should You Actually Publish More Listicles?

Yes. But not the way you think.

The data makes one thing undeniable: AI engines, especially ChatGPT, disproportionately cite listicle-format content. If your content strategy includes zero listicles, you're voluntarily sitting out the most-cited content format in AI search. That's not a strategy. That's an oversight.

But here's what the data doesn't say: it doesn't say bad listicles work. The listicles that get cited aren't "Top 10 Best CRMs (That We Totally Didn't Rank Based on Affiliate Commission)."

They're well-structured, specific, and genuinely useful comparisons. The format works because AI engines need curated, ranked information to build their responses. The quality of the curation is what determines whether yours gets picked.

The format is the signal. The quality is the filter. AI engines want listicles, but only the ones worth citing.

A few principles if you're going to take this seriously:

Be honest about rankings. If your product isn't the best option for every use case, say so. AI engines are increasingly sophisticated at detecting self-serving content. Listing yourself as #1 while burying competitors with vague descriptions is the fastest way to get ignored. Objectivity is a competitive advantage.

Don't trash your competitors. This is counterproductive for two reasons. First, AI engines cross-reference sentiment. If your listicle is the only one badmouthing a competitor, it looks biased. Second, the competitor you trash today might be the brand the AI engine trusts more tomorrow. Be fair. Be specific. Let the product differences speak.

Create listicles on external domains. This is the strategy most brands miss entirely. Getting your brand featured in a third-party listicle on a trusted domain is often more valuable than publishing your own. Partner with industry publications, review sites, and niche blogs to create "Best X for Y" content where your brand earns a spot. When TechRadar or Zapier publishes a listicle that includes you, that citation carries more weight than your own blog saying you're great.

Match the format to the query intent. Not every topic needs a listicle. If someone is asking "how to set up Google Analytics," a tutorial is the right format. If someone is asking "best analytics tools for startups," a listicle is the right format. Build your content around the queries your audience actually asks, then pick the format that matches.

Update regularly. Stale listicles lose citation share. AI engines favor fresh, current content. If your "Best 10 Project Management Tools for 2025" still says 2025, you're already losing ground to someone who updated theirs.

Listicles Are a Structure Problem, Not a Quality Problem

Listicles dominate AI search citations not because AI engines are lazy, but because the format is structurally aligned with how AI generates recommendations. When someone asks "what are the best tools for X," the AI needs a ranked, curated list to build its answer. Listicles are that list, pre-built. The AI doesn't have to do the curation work. You already did it.

But this is still content marketing. The format gets you in the door. The quality determines whether you stay. Every data point in this post was pulled from Qvery's proprietary citation database, and the one constant across every trend, every platform comparison, and every industry split is this: the content that gets cited is the content that actually helps people. Listicles just happen to be the most popular shape for that help to take.

If you want to see how your content stacks up against competitors in AI search, start a free trial of Qvery.

Of all the content formats you could write, listicles are the one AI search engines cite the most. Not tutorials. Not reviews. Not in-depth how-to guides. Listicles.

The "Top 10 Best Whatever" posts that content marketers have been writing since 2009 are, apparently, exactly what ChatGPT and Google AI Mode want to reference when they answer a question.

We analyzed our proprietary citation data at Qvery across both ChatGPT and Google AI Mode, classified every citation by article type based on title patterns, and found something that surprised even us: listicles account for 45.8% of all classifiable AI engine citations. That's not a slight edge. That's nearly half.

The format your content strategy intern was told is "outdated" is the single most referenced content type in AI search. It beats reviews by 2.4x. It beats how-to guides by 7.6x. It beats news articles by 50x. (Awkward.)

Before we go further: our classification system uses title pattern matching to identify article types. Roughly 12% of all citations match known patterns (Listicle, Review, Reference, Discussion, How-To/Guide, News). The other 88% are brand pages, product pages, and niche content that don't follow recognizable title patterns. Everything in this post refers to that classifiable 12%, which represents hundreds of thousands of individual citations.

ChatGPT Is a Listicle Recommendation Engine

The platform split on this is wild. ChatGPT cites listicles at 8.62% of all its citations. Google AI Mode? Just 2.50%. That's a 3.4x difference.

It gets more extreme. ChatGPT produces 74.8% of all listicle citations despite generating only about 43% of total citation volume. Nearly 1 in 11 ChatGPT citations is a listicle. Add reviews to the mix and you get 12.49% of all ChatGPT citations being listicles or reviews. That's 1 in 8.

Stack on reference content and how-to guides and you reach 17.04% of all ChatGPT citations being structured, classifiable editorial content. ChatGPT isn't just a search engine. It's fundamentally a recommendation engine that loves curated, ranked content.

Google AI Mode takes the opposite approach. It prefers to synthesize ranked lists itself rather than cite external ones. Only 5.3% of Google AI citations match any classifiable article type, compared to ChatGPT's 19.0%. Google AI Mode has its own opinions, apparently, and doesn't need yours.

How Every Content Format Stacks Up

Here's the full breakdown of classifiable content types, ranked by share:

Listicles: 45.8% of classified content, 5.33% of all citations. The undisputed champion. "Top 10," "Best 5," "X Alternatives" titles dominate. ChatGPT drives 74.8% of the volume.

Reviews: 19.4% of classified, 2.25% of all. Comparisons, pricing breakdowns, "vs" articles, pros-and-cons content. ChatGPT drives 80.9%. Listicles are 2.4x more cited than reviews.

Discussion: 14.5% of classified, 1.68% of all. Reddit, Quora, StackOverflow. The only type that's roughly balanced between platforms: ChatGPT at 46.6%, Google AI at 53.4%.

Reference: ~13% of classified, ~1.5% of all. Wikipedia, "what is" articles, definitions. Almost exclusively a ChatGPT behavior. 96% of reference citations come from ChatGPT. Google AI barely touches encyclopedic content because it synthesizes definitions from its own knowledge graph.

How-To / Guide: 6.0% of classified, 0.69% of all. Tutorials, step-by-step content. ChatGPT cites these at 5.3x Google AI's rate. Instructional content is valued, just not as much as listicles.

News: 0.9% of classified, 0.11% of all. The rarest type. AI engines overwhelmingly prefer evergreen content. Breaking news is basically invisible.

The pattern is clear: both ChatGPT and Google AI Mode agree that listicles are their #1 classifiable type (45.3% on ChatGPT, 47.0% on Google AI). The difference is that ChatGPT actually cites structured content 3.6x more often.

Listicles Win on Volume, Not Position

Here's where the nuance lives. Listicles are the most cited content type. They are not the most prominently cited.

The average citation rank for listicles is 14.36 (median 13.0). That puts them in positions 8-18 of citation lists, which is solidly mid-pack. Compare that to reference content, which has an average rank of 5.65, meaning Wikipedia and definition pages consistently land near the top of responses.

This distinction matters. Listicles are a volume play. They show up everywhere, across a wide range of queries, reinforcing your brand's presence across the topics that matter to your category. Reference content is narrower but carries a different kind of weight: it gets cited when AI engines need to establish facts and definitions.

Think of it like this: reference content is the friend who shows up to three parties a year but everyone knows their name. Listicles are the friend who shows up to every party and talks to everyone in the room. Both build relationships. One goes deep. The other goes wide.

The point isn't that one position in the citation list is better than another. The point is that listicles get you into more conversations. And in AI search, being part of the conversation is the first thing that matters.

The Industry Gap Changes Everything

Citation patterns don't behave the same across industries.

In document and productivity tools industry, over 50% of all classifiable citations are listicles. In revenue intelligence and design and prototyping, it's above 42%. AI sales tools, HR/EOR platforms, e-commerce, and visual content tools all sit in the 30-40% range.

Then it falls off a cliff. Language learning drops to 6.5%. Arts and photography and food tours are at 2.3-2.4%. That's a 22x difference between the highest and lowest industries.

The pattern is clear: industries where buyers compare options get more listicles cited.

If your customers ask "what's the best X for Y?" AI engines look for ranked lists to build their answer. SaaS, productivity tools, and e-commerce are comparison-heavy categories. Food tours and arts aren't.

The implication for your content strategy is direct. If you're in a comparison-heavy vertical (SaaS, e-commerce, productivity), listicles on your own blog and on third-party sites are high-leverage because AI engines are actively looking for ranked content in your category. If you're in travel, tourism, or the arts, your citation mix will be dominated by review platforms, local directories, and social media instead. The format that works depends on the questions your buyers ask.

How Qvery Tracks Content Type Citations

All the data in this post comes from Qvery's AI Engine Researcher.

Qvery can help you see whether your content or your competitors' content is getting cited, and what format it's in. If your competitor's listicle is getting cited and your detailed guide isn't, that's a signal. If discussion content on Reddit is outpacing your blog, that's a different signal.

Should You Actually Publish More Listicles?

Yes. But not the way you think.

The data makes one thing undeniable: AI engines, especially ChatGPT, disproportionately cite listicle-format content. If your content strategy includes zero listicles, you're voluntarily sitting out the most-cited content format in AI search. That's not a strategy. That's an oversight.

But here's what the data doesn't say: it doesn't say bad listicles work. The listicles that get cited aren't "Top 10 Best CRMs (That We Totally Didn't Rank Based on Affiliate Commission)."

They're well-structured, specific, and genuinely useful comparisons. The format works because AI engines need curated, ranked information to build their responses. The quality of the curation is what determines whether yours gets picked.

The format is the signal. The quality is the filter. AI engines want listicles, but only the ones worth citing.

A few principles if you're going to take this seriously:

Be honest about rankings. If your product isn't the best option for every use case, say so. AI engines are increasingly sophisticated at detecting self-serving content. Listing yourself as #1 while burying competitors with vague descriptions is the fastest way to get ignored. Objectivity is a competitive advantage.

Don't trash your competitors. This is counterproductive for two reasons. First, AI engines cross-reference sentiment. If your listicle is the only one badmouthing a competitor, it looks biased. Second, the competitor you trash today might be the brand the AI engine trusts more tomorrow. Be fair. Be specific. Let the product differences speak.

Create listicles on external domains. This is the strategy most brands miss entirely. Getting your brand featured in a third-party listicle on a trusted domain is often more valuable than publishing your own. Partner with industry publications, review sites, and niche blogs to create "Best X for Y" content where your brand earns a spot. When TechRadar or Zapier publishes a listicle that includes you, that citation carries more weight than your own blog saying you're great.

Match the format to the query intent. Not every topic needs a listicle. If someone is asking "how to set up Google Analytics," a tutorial is the right format. If someone is asking "best analytics tools for startups," a listicle is the right format. Build your content around the queries your audience actually asks, then pick the format that matches.

Update regularly. Stale listicles lose citation share. AI engines favor fresh, current content. If your "Best 10 Project Management Tools for 2025" still says 2025, you're already losing ground to someone who updated theirs.

Listicles Are a Structure Problem, Not a Quality Problem

Listicles dominate AI search citations not because AI engines are lazy, but because the format is structurally aligned with how AI generates recommendations. When someone asks "what are the best tools for X," the AI needs a ranked, curated list to build its answer. Listicles are that list, pre-built. The AI doesn't have to do the curation work. You already did it.

But this is still content marketing. The format gets you in the door. The quality determines whether you stay. Every data point in this post was pulled from Qvery's proprietary citation database, and the one constant across every trend, every platform comparison, and every industry split is this: the content that gets cited is the content that actually helps people. Listicles just happen to be the most popular shape for that help to take.

If you want to see how your content stacks up against competitors in AI search, start a free trial of Qvery.

Of all the content formats you could write, listicles are the one AI search engines cite the most. Not tutorials. Not reviews. Not in-depth how-to guides. Listicles.

The "Top 10 Best Whatever" posts that content marketers have been writing since 2009 are, apparently, exactly what ChatGPT and Google AI Mode want to reference when they answer a question.

We analyzed our proprietary citation data at Qvery across both ChatGPT and Google AI Mode, classified every citation by article type based on title patterns, and found something that surprised even us: listicles account for 45.8% of all classifiable AI engine citations. That's not a slight edge. That's nearly half.

The format your content strategy intern was told is "outdated" is the single most referenced content type in AI search. It beats reviews by 2.4x. It beats how-to guides by 7.6x. It beats news articles by 50x. (Awkward.)

Before we go further: our classification system uses title pattern matching to identify article types. Roughly 12% of all citations match known patterns (Listicle, Review, Reference, Discussion, How-To/Guide, News). The other 88% are brand pages, product pages, and niche content that don't follow recognizable title patterns. Everything in this post refers to that classifiable 12%, which represents hundreds of thousands of individual citations.

ChatGPT Is a Listicle Recommendation Engine

The platform split on this is wild. ChatGPT cites listicles at 8.62% of all its citations. Google AI Mode? Just 2.50%. That's a 3.4x difference.

It gets more extreme. ChatGPT produces 74.8% of all listicle citations despite generating only about 43% of total citation volume. Nearly 1 in 11 ChatGPT citations is a listicle. Add reviews to the mix and you get 12.49% of all ChatGPT citations being listicles or reviews. That's 1 in 8.

Stack on reference content and how-to guides and you reach 17.04% of all ChatGPT citations being structured, classifiable editorial content. ChatGPT isn't just a search engine. It's fundamentally a recommendation engine that loves curated, ranked content.

Google AI Mode takes the opposite approach. It prefers to synthesize ranked lists itself rather than cite external ones. Only 5.3% of Google AI citations match any classifiable article type, compared to ChatGPT's 19.0%. Google AI Mode has its own opinions, apparently, and doesn't need yours.

How Every Content Format Stacks Up

Here's the full breakdown of classifiable content types, ranked by share:

Listicles: 45.8% of classified content, 5.33% of all citations. The undisputed champion. "Top 10," "Best 5," "X Alternatives" titles dominate. ChatGPT drives 74.8% of the volume.

Reviews: 19.4% of classified, 2.25% of all. Comparisons, pricing breakdowns, "vs" articles, pros-and-cons content. ChatGPT drives 80.9%. Listicles are 2.4x more cited than reviews.

Discussion: 14.5% of classified, 1.68% of all. Reddit, Quora, StackOverflow. The only type that's roughly balanced between platforms: ChatGPT at 46.6%, Google AI at 53.4%.

Reference: ~13% of classified, ~1.5% of all. Wikipedia, "what is" articles, definitions. Almost exclusively a ChatGPT behavior. 96% of reference citations come from ChatGPT. Google AI barely touches encyclopedic content because it synthesizes definitions from its own knowledge graph.

How-To / Guide: 6.0% of classified, 0.69% of all. Tutorials, step-by-step content. ChatGPT cites these at 5.3x Google AI's rate. Instructional content is valued, just not as much as listicles.

News: 0.9% of classified, 0.11% of all. The rarest type. AI engines overwhelmingly prefer evergreen content. Breaking news is basically invisible.

The pattern is clear: both ChatGPT and Google AI Mode agree that listicles are their #1 classifiable type (45.3% on ChatGPT, 47.0% on Google AI). The difference is that ChatGPT actually cites structured content 3.6x more often.

Listicles Win on Volume, Not Position

Here's where the nuance lives. Listicles are the most cited content type. They are not the most prominently cited.

The average citation rank for listicles is 14.36 (median 13.0). That puts them in positions 8-18 of citation lists, which is solidly mid-pack. Compare that to reference content, which has an average rank of 5.65, meaning Wikipedia and definition pages consistently land near the top of responses.

This distinction matters. Listicles are a volume play. They show up everywhere, across a wide range of queries, reinforcing your brand's presence across the topics that matter to your category. Reference content is narrower but carries a different kind of weight: it gets cited when AI engines need to establish facts and definitions.

Think of it like this: reference content is the friend who shows up to three parties a year but everyone knows their name. Listicles are the friend who shows up to every party and talks to everyone in the room. Both build relationships. One goes deep. The other goes wide.

The point isn't that one position in the citation list is better than another. The point is that listicles get you into more conversations. And in AI search, being part of the conversation is the first thing that matters.

The Industry Gap Changes Everything

Citation patterns don't behave the same across industries.

In document and productivity tools industry, over 50% of all classifiable citations are listicles. In revenue intelligence and design and prototyping, it's above 42%. AI sales tools, HR/EOR platforms, e-commerce, and visual content tools all sit in the 30-40% range.

Then it falls off a cliff. Language learning drops to 6.5%. Arts and photography and food tours are at 2.3-2.4%. That's a 22x difference between the highest and lowest industries.

The pattern is clear: industries where buyers compare options get more listicles cited.

If your customers ask "what's the best X for Y?" AI engines look for ranked lists to build their answer. SaaS, productivity tools, and e-commerce are comparison-heavy categories. Food tours and arts aren't.

The implication for your content strategy is direct. If you're in a comparison-heavy vertical (SaaS, e-commerce, productivity), listicles on your own blog and on third-party sites are high-leverage because AI engines are actively looking for ranked content in your category. If you're in travel, tourism, or the arts, your citation mix will be dominated by review platforms, local directories, and social media instead. The format that works depends on the questions your buyers ask.

How Qvery Tracks Content Type Citations

All the data in this post comes from Qvery's AI Engine Researcher.

Qvery can help you see whether your content or your competitors' content is getting cited, and what format it's in. If your competitor's listicle is getting cited and your detailed guide isn't, that's a signal. If discussion content on Reddit is outpacing your blog, that's a different signal.

Should You Actually Publish More Listicles?

Yes. But not the way you think.

The data makes one thing undeniable: AI engines, especially ChatGPT, disproportionately cite listicle-format content. If your content strategy includes zero listicles, you're voluntarily sitting out the most-cited content format in AI search. That's not a strategy. That's an oversight.

But here's what the data doesn't say: it doesn't say bad listicles work. The listicles that get cited aren't "Top 10 Best CRMs (That We Totally Didn't Rank Based on Affiliate Commission)."

They're well-structured, specific, and genuinely useful comparisons. The format works because AI engines need curated, ranked information to build their responses. The quality of the curation is what determines whether yours gets picked.

The format is the signal. The quality is the filter. AI engines want listicles, but only the ones worth citing.

A few principles if you're going to take this seriously:

Be honest about rankings. If your product isn't the best option for every use case, say so. AI engines are increasingly sophisticated at detecting self-serving content. Listing yourself as #1 while burying competitors with vague descriptions is the fastest way to get ignored. Objectivity is a competitive advantage.

Don't trash your competitors. This is counterproductive for two reasons. First, AI engines cross-reference sentiment. If your listicle is the only one badmouthing a competitor, it looks biased. Second, the competitor you trash today might be the brand the AI engine trusts more tomorrow. Be fair. Be specific. Let the product differences speak.

Create listicles on external domains. This is the strategy most brands miss entirely. Getting your brand featured in a third-party listicle on a trusted domain is often more valuable than publishing your own. Partner with industry publications, review sites, and niche blogs to create "Best X for Y" content where your brand earns a spot. When TechRadar or Zapier publishes a listicle that includes you, that citation carries more weight than your own blog saying you're great.

Match the format to the query intent. Not every topic needs a listicle. If someone is asking "how to set up Google Analytics," a tutorial is the right format. If someone is asking "best analytics tools for startups," a listicle is the right format. Build your content around the queries your audience actually asks, then pick the format that matches.

Update regularly. Stale listicles lose citation share. AI engines favor fresh, current content. If your "Best 10 Project Management Tools for 2025" still says 2025, you're already losing ground to someone who updated theirs.

Listicles Are a Structure Problem, Not a Quality Problem

Listicles dominate AI search citations not because AI engines are lazy, but because the format is structurally aligned with how AI generates recommendations. When someone asks "what are the best tools for X," the AI needs a ranked, curated list to build its answer. Listicles are that list, pre-built. The AI doesn't have to do the curation work. You already did it.

But this is still content marketing. The format gets you in the door. The quality determines whether you stay. Every data point in this post was pulled from Qvery's proprietary citation database, and the one constant across every trend, every platform comparison, and every industry split is this: the content that gets cited is the content that actually helps people. Listicles just happen to be the most popular shape for that help to take.

If you want to see how your content stacks up against competitors in AI search, start a free trial of Qvery.

© 2026 Qvery AI OÜ